Why "AI Visibility" Isn't One Metric

"AI visibility" gets talked about as if it were a single metric. In practice, it isn't. When teams ask whether their brand is visible in AI-generated answers, they're usually collapsing several different things into one idea. That's where most of the confusion, and many bad decisions, start. And the stakes are rising: McKinsey research shows 50% of consumers now use AI-powered search, with 44% saying it's their primary source for buying decisions.

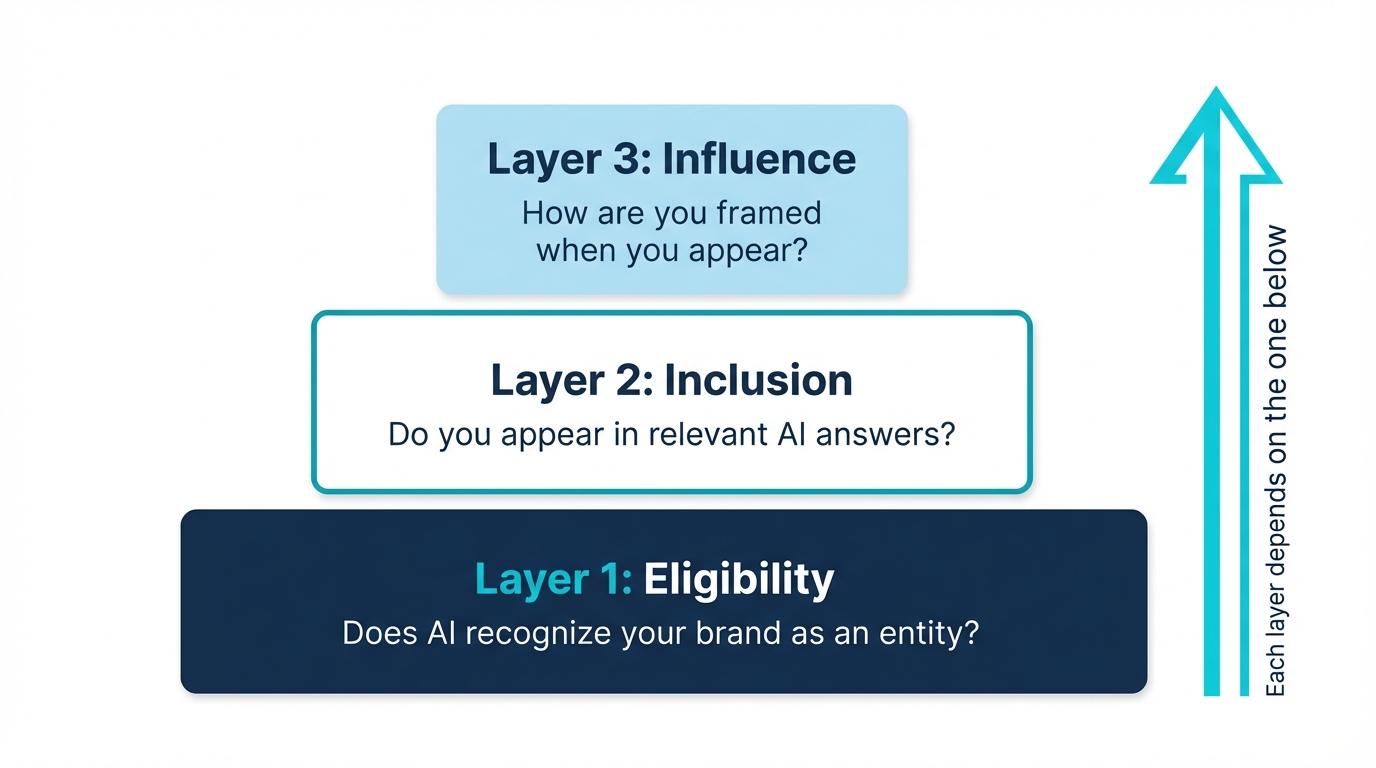

Here's the mental model I use when thinking about AI visibility.

1. Eligibility: does the model recognize you at all?

This is the foundation.

Eligibility answers a few basic questions:

- Does the model recognize your brand as a distinct entity?
- Can it confidently resolve who you are when asked directly?
- Does your name clearly refer to you, or to something else?

If eligibility is weak:

- Mentions are inconsistent
- Visibility fluctuates run to run
- Brands appear once and then disappear
- Similarly named entities get mixed in

This is where brand recognition and disambiguation live.

If the model isn't confident about who you are, everything downstream becomes unreliable.

Eligibility failures are the hardest to diagnose because they look like randomness. Your brand appears in one response, disappears in the next, and the inconsistency makes it impossible to tell whether your optimization efforts are working.

There are common patterns behind eligibility issues. Brand names that collide with common English words (Copper, Honey, Sage) face entity disambiguation problems. The model genuinely does not know whether you mean the CRM or the metal. Brands that launched after the model's training cutoff face a knowledge gap that no amount of on-site optimization can close directly. And brands with thin web presence outside their own domain lack the cross-referential signals models need to build entity confidence.

Testing eligibility is straightforward: ask ChatGPT, Claude, and Gemini "What is [your brand]?" in a fresh session. If the answer is wrong, vague, or describes a different entity, you have an eligibility problem. If it's correct and detailed, you've cleared the first layer.
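
If you want to repeat this check regularly, a small script helps. Below is a minimal Python sketch, assuming you wrap each platform's client in a function that takes a list of chat messages and returns the reply text. The `AskFn` type, the `ask` adapters, and the platform keys are hypothetical placeholders, not any vendor's API. "Fresh session" here simply means each request carries only the one question, with no earlier turns or system prompt.

```python
from typing import Callable, Dict, List

# Hypothetical adapter type: takes chat messages, returns the model's reply text.
# Wrap whatever client library you use for each platform in a function like this.
AskFn = Callable[[List[Dict[str, str]]], str]


def probe_eligibility(brand: str, platforms: Dict[str, AskFn]) -> Dict[str, str]:
    """Ask every platform "What is <brand>?" and collect the raw answers."""
    question = f"What is {brand}?"
    answers: Dict[str, str] = {}
    for platform, ask in platforms.items():
        # Build a new message list per call so no earlier turns, system prompt,
        # or brand context leaks into the request (a "fresh session").
        messages = [{"role": "user", "content": question}]
        answers[platform] = ask(messages)
    return answers


if __name__ == "__main__":
    # Stub adapters for illustration only; replace with real API calls.
    stubs = {
        "platform_a": lambda msgs: "Copper is a CRM for Google Workspace users.",
        "platform_b": lambda msgs: "Copper is a chemical element with symbol Cu.",
    }
    for platform, answer in probe_eligibility("Copper", stubs).items():
        print(f"{platform}: {answer}")
```

Reading the answers side by side is what makes collisions obvious: in the stubbed example, one platform resolves the CRM and the other resolves the metal.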

At a technical level, a useful proxy for eligibility is how well search engines' knowledge graphs resolve your brand. Google and Bing both maintain entity databases that map who you are, what you do, and how you relate to other entities. Brands with strong knowledge graph signals (a Wikidata entry, consistent schema markup, cross-referenced profiles) tend to be resolved correctly. Brands without them get confused with other entities, described inaccurately, or ignored entirely. You can check how Google resolves your brand name with Google's Knowledge Graph Search API, which returns the matching entities along with a relevance score (resultScore) for each.
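
Here's a minimal Python sketch of that check using only the standard library. It assumes you have an API key from the Google Cloud console with the Knowledge Graph Search API enabled. The endpoint and the `itemListElement` / `resultScore` fields follow Google's public documentation for the API; treat `resultScore` as a relevance ranking for your query rather than an absolute confidence value. The useful signal is whether your brand is the top match or is outranked by an unrelated entity with the same name.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, List

KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def build_kg_url(brand: str, api_key: str, limit: int = 5) -> str:
    """Build an entities:search request URL for a brand name."""
    params = {"query": brand, "key": api_key, "limit": limit}
    return f"{KG_ENDPOINT}?{urllib.parse.urlencode(params)}"


def fetch_kg(brand: str, api_key: str) -> Dict[str, Any]:
    """Call the API and return the decoded JSON (needs network and a valid key)."""
    with urllib.request.urlopen(build_kg_url(brand, api_key), timeout=10) as resp:
        return json.load(resp)


def summarize_kg(payload: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten each match to name, types, description and resultScore,
    keeping the order in which the API returned them."""
    rows = []
    for item in payload.get("itemListElement", []):
        entity = item.get("result", {})
        rows.append({
            "name": entity.get("name"),
            "types": entity.get("@type", []),
            "description": entity.get("description"),
            "score": item.get("resultScore"),
        })
    return rows
```

In practice you'd call `summarize_kg(fetch_kg("Your Brand", API_KEY))` and check whether the first row actually describes you.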

Common eligibility fixes: Start with your knowledge graph presence. Create a Wikidata entry, add Organization schema markup with sameAs cross-references to your homepage, and claim your Google Business Profile and Bing Places listings. These establish the entity foundation that makes every other fix more effective. Next, pursue a Wikipedia article if your brand meets Wikipedia's notability guidelines (Wikipedia discourages people from editing articles about their own company). Make sure your brand is described consistently across at least 30-50 authoritative third-party domains. Finally, publish a comprehensive "What is [your brand]" page that models can reference as a definitive source.
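
For the Organization schema piece, the markup is a small JSON-LD block placed in your homepage's `<head>`. The helper below is a sketch that generates one; the brand name and every URL in the example are placeholders, while the properties shown (`name`, `url`, `logo`, `description`, `sameAs`) are standard schema.org Organization properties. Point `sameAs` at profiles that unambiguously describe your brand, such as your Wikidata item and official social pages.

```python
import json
from typing import List, Optional


def organization_jsonld(name: str, url: str, same_as: List[str],
                        logo: Optional[str] = None,
                        description: Optional[str] = None) -> str:
    """Return a <script> tag containing schema.org Organization JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": same_as,  # cross-references that tie your entity together
    }
    # Only include optional properties when you actually have values for them.
    if logo:
        data["logo"] = logo
    if description:
        data["description"] = description
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        name="ExampleCo",  # placeholder brand
        url="https://www.example.com",
        same_as=[
            "https://www.wikidata.org/wiki/Q00000000",  # placeholder Wikidata item
            "https://www.linkedin.com/company/exampleco",  # placeholder profile
        ],
        description="ExampleCo is a CRM for small sales teams.",
    ))
```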

2. Inclusion: do you surface in relevant answers?

Inclusion comes next.

It answers questions like:

- Are you mentioned when users ask category-level or problem-level questions?
- Do you appear when the model is comparing options?
- Are you part of the consideration set at all?

You can be eligible but not included.

When that happens, it's usually because:

- Topical associations are weak
- Coverage across relevant questions is thin
- The brand isn't present in sources the model trusts

Most AEO tactics people talk about are trying to move this layer.

Inclusion is where most brands focus their AEO efforts, but it's also where the most common mistakes happen. Teams publish content targeting AI-friendly queries and assume that ranking in Google means appearing in AI answers. It does not. BrightEdge data shows that ChatGPT and Google AI Overviews disagree on brand recommendations 62% of the time. You can rank #1 on Google for a query and still be absent from ChatGPT's answer.

Inclusion depends on topical breadth, not just individual page quality. A brand that has one great page about a topic is less likely to be included than a brand with consistent coverage across multiple related subtopics. This is why topic clusters matter for AI visibility: they signal to models that you have comprehensive expertise in a domain, not just a single piece of content.

Testing inclusion is a volume exercise. Run 15-20 category-level queries across ChatGPT, Perplexity, and Gemini, using patterns such as "best [category] tools," "how to solve [problem]," and "[category] for [use case]." Track how often your brand appears compared with your top 3 competitors. If competitors show up consistently and you don't, you have an inclusion gap.
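
Once you've saved the answers, the counting is easy to automate. This Python sketch computes the share of responses that mention each brand and the gap between your rate and your competitors' average. It uses case-insensitive whole-word matching, which is a simplification: a name like Copper will also match answers about the metal, so spot-check the matches by hand.

```python
import re
from typing import Dict, List


def mention_rates(responses: List[str], brands: List[str]) -> Dict[str, float]:
    """Fraction of responses that mention each brand at least once."""
    rates: Dict[str, float] = {}
    for brand in brands:
        # Whole-word, case-insensitive match on the brand name.
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        hits = sum(1 for text in responses if pattern.search(text))
        rates[brand] = hits / len(responses) if responses else 0.0
    return rates


def inclusion_gap(rates: Dict[str, float], you: str, competitors: List[str]) -> float:
    """Competitors' average mention rate minus yours (positive means you trail)."""
    if not competitors:
        return 0.0
    avg = sum(rates[c] for c in competitors) / len(competitors)
    return avg - rates[you]
```

It's worth running this per platform as well as in aggregate, since the same brand can be consistent on one model and absent on another.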

Common inclusion fixes: Build topic clusters around your core categories with pillar pages and supporting posts. Target category and problem queries, not just branded terms. Ensure your content directly answers the questions users ask AI models, using clear structure and factual claims that models can extract and synthesize.

3. Influence: how are you framed when you appear?

This layer is closely tied to AI sentiment.

Influence determines outcomes.

It answers:

- Are you mentioned first or buried in a list?
- Are you framed positively, neutrally, or cautiously?
- Are you described as a leader, an example, or a fallback?

Two brands can both be included in an answer, but only one meaningfully benefits.

This is where lead quality, conversion likelihood, and commercial impact are actually decided.

Yet influence is the layer most teams never measure. One brand gets described as "a popular option" while another is "widely regarded as the leader," and that framing determines which brand the user clicks, researches, or buys.

Influence is driven by sentiment signals across the web. Third-party reviews, analyst reports, press coverage, and customer testimonials all shape how models characterize your brand. If your review profile is thin or mixed, models hedge their language. If you have consistent, positive, authoritative coverage from trusted sources, models frame you more confidently.

Testing influence means recording not just whether you appear, but the exact language used to describe you. Is it neutral, positive, or enthusiastic? Are you mentioned first or last in a list? Are you described with qualifiers like "sometimes recommended" or definitive language like "top choice"? These distinctions directly impact conversion.
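
To make that recording repeatable, here's a small Python sketch that, for one response, finds your position among the tracked brands and pulls out the exact sentences that mention you. The sentence splitter is a naive regex on ".", "!" and "?", an assumption that breaks on abbreviations and on unpunctuated bullet lists, so check the output against the raw answer.

```python
import re
from typing import Dict, List


def _first_match(name: str, text: str):
    """Case-insensitive whole-word search; returns a match object or None."""
    return re.search(rf"\b{re.escape(name)}\b", text, re.IGNORECASE)


def record_influence(response: str, brand: str, competitors: List[str]) -> Dict[str, object]:
    """Record list position and verbatim wording for one brand in one answer."""
    first_seen = {}
    for name in [brand] + competitors:
        m = _first_match(name, response)
        if m:
            first_seen[name] = m.start()
    if brand not in first_seen:
        return {"mentioned": False, "position": None, "of": len(first_seen), "sentences": []}
    order = sorted(first_seen, key=first_seen.get)  # earliest mention first
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return {
        "mentioned": True,
        "position": order.index(brand) + 1,  # 1 = mentioned first
        "of": len(order),                    # how many tracked brands appeared
        "sentences": [s for s in sentences if _first_match(brand, s)],
    }
```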

Common influence fixes: Invest in review generation on G2, Capterra, and Trustpilot. Pursue analyst coverage and industry press. Ensure your messaging is consistent across all touchpoints so models receive reinforcing signals from multiple sources. Publish original research and data that positions your brand as a category authority.

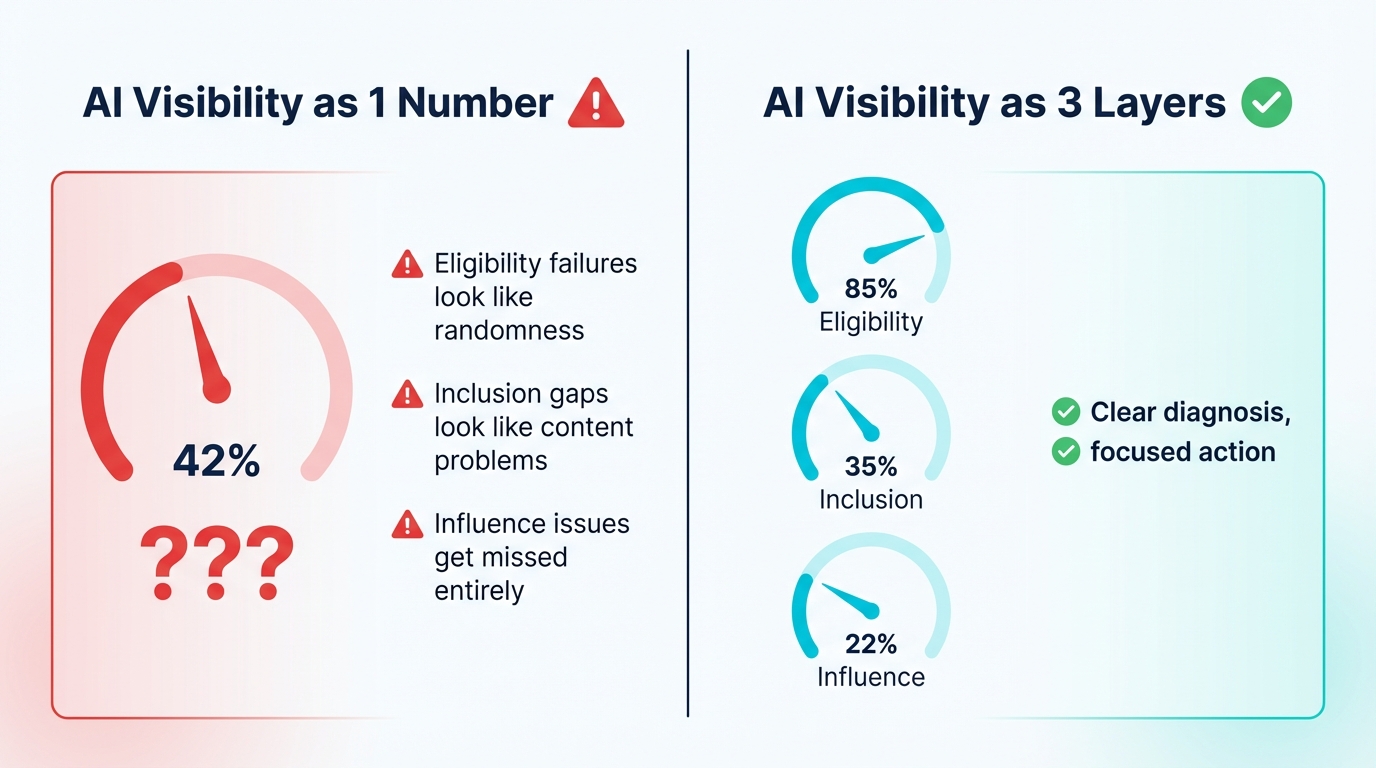

4. Why "AI visibility" as a single metric breaks down

For a practical breakdown of what to actually measure, see AI Visibility Metrics: What to Measure.

When all of this gets collapsed into one number:

- Eligibility failures look like randomness
- Inclusion gaps look like content problems
- Influence issues get missed entirely

Teams end up:

- Fixing the wrong things
- Misreading progress
- Over-optimizing surface-level tactics

The signal isn't wrong. It's just being flattened.

5. Why this explains inconsistent AEO results

This breakdown explains why:

- Some brands get mentioned but don't convert
- Some see volatility they can't explain
- Some improve content without improving visibility
- Some tools report progress while reality feels unstable

Different layers are moving, but nobody is measuring them separately.

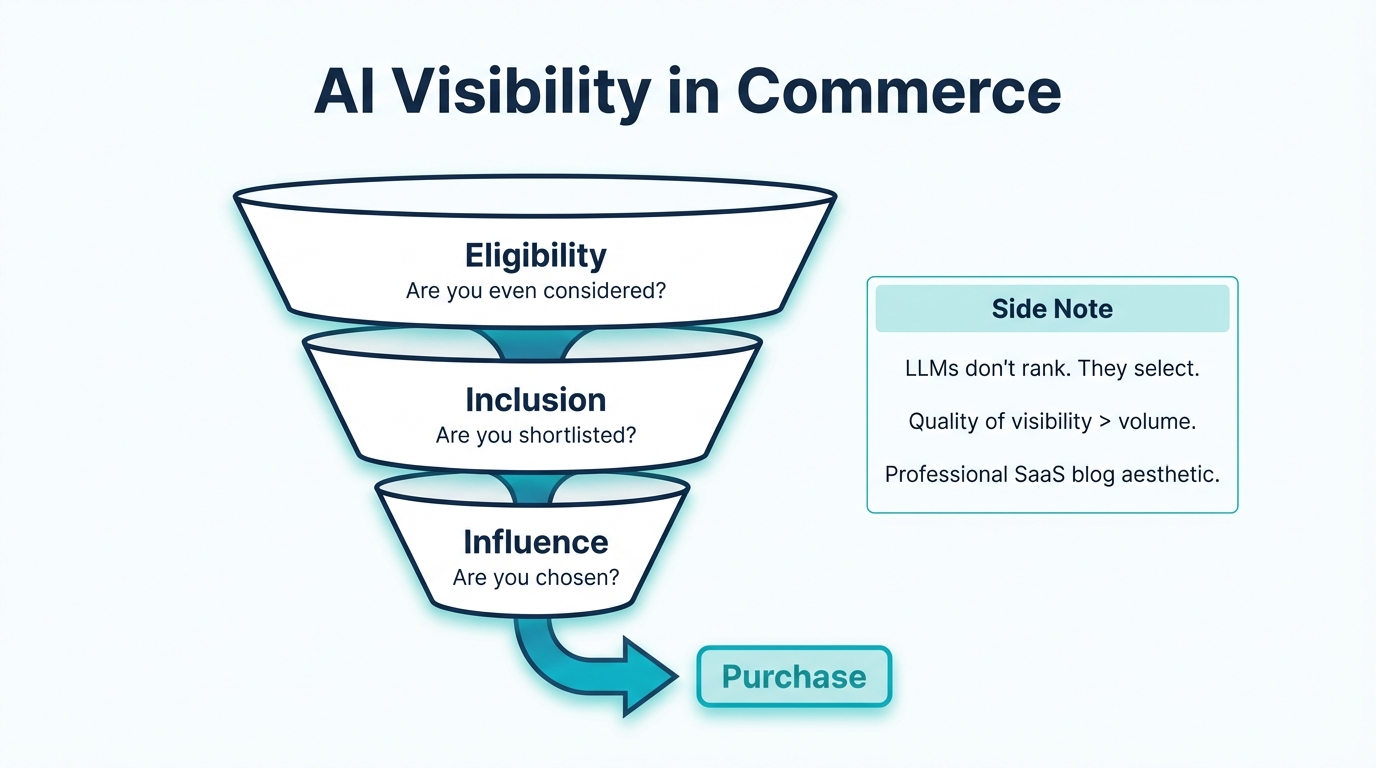

6. Why this matters even more for commerce prompts

In commercial queries:

- Eligibility determines whether you're even considered
- Inclusion determines whether you're shortlisted
- Influence determines whether you're chosen

LLMs don't rank results the way search engines do (see Google Search Central). They select.

That makes the quality of visibility far more important than raw volume.

7. What most tools still miss

Most tools today focus on:

- Mentions
- Citations
- Appearances

Very few ask:

- Whether the model actually recognized the brand
- Why the brand was included
- How it was framed
- Whether the signal is stable across models

That gap is where a lot of false confidence comes from. We ran an experiment testing 5 brands across [ChatGPT](https://openai.com/chatgpt), Claude, and Gemini that illustrates this clearly.

8. The takeaway

Visibility matters. And once you understand these layers, you can take action to improve them. But only when you know which part of visibility you're improving.

Eligibility, inclusion, and influence move independently. Treating them as one metric is where most teams get lost.

This breakdown is the mental model we use internally while building friction.

In the next post, I'll go one step further and talk about experimentation, and why AEO without testing quickly turns into guesswork.

If you're evaluating options, we've also published a comparison of the top 10 AI visibility tools available today.

For platform-specific optimization, see our guides on ChatGPT, Perplexity, and Google AI Overviews.

How to Assess Your AI Visibility Across All Three Layers

A complete AI visibility assessment covers all three layers independently. Here is a practical framework you can run in under two hours:

Step 1 -- Eligibility audit: Ask each major AI platform directly about your brand in fresh sessions. Can it correctly identify who you are, what you do, and what category you belong to? Score each platform: Clear and correct / Partially correct / Wrong or confused.
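
If you're scoring many answers, a rough first pass can be scripted. The Python sketch below assumes you list, for each thing the model should get right (for example what you do and your category), a few phrases that count as correct, plus optional phrases that signal a different entity. Substring matching is crude, so treat its label as a starting point for manual review, not a verdict.

```python
from typing import Dict, List, Sequence


def score_eligibility(answer: str,
                      facts: Dict[str, List[str]],
                      conflicts: Sequence[str] = ()) -> str:
    """Map one answer onto the three-level scale from Step 1.

    facts: check name -> phrases that count as correct for that check.
    conflicts: phrases suggesting the model described a different entity.
    """
    if not facts:
        raise ValueError("Define at least one fact to check.")
    text = answer.lower()
    # Count how many checks have at least one matching phrase.
    matched = sum(
        1 for phrases in facts.values() if any(p.lower() in text for p in phrases)
    )
    conflicted = any(c.lower() in text for c in conflicts)
    if matched == len(facts) and not conflicted:
        return "Clear and correct"
    if matched > 0:
        # Some facts right, or all right but mixed up with another entity.
        return "Partially correct"
    return "Wrong or confused"


if __name__ == "__main__":
    facts = {"what_you_do": ["crm"], "category": ["sales", "customer relationship"]}
    print(score_eligibility("Copper is a CRM built for sales teams.", facts, ["metal"]))
    # prints "Clear and correct"
```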

Step 2 -- Inclusion audit: Run 20+ category and problem queries across platforms. For each query, record whether your brand appears and which competitors appear alongside you. Calculate your mention rate: Consistent (50%+ of queries) / Spotty (20-50%) / Absent (<20%).
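
The mention-rate math and the thresholds above are simple enough to encode directly. This Python sketch assumes you've logged each query as a record of whether your brand appeared and which competitors did; the `QueryResult` record is a hypothetical structure, not a format any particular tool exports.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class QueryResult:
    query: str
    appeared: bool  # did your brand show up in the answer?
    competitors: List[str] = field(default_factory=list)  # who else showed up


def inclusion_audit(results: List[QueryResult]) -> Tuple[float, str, Counter]:
    """Return (mention rate, bucket, competitor co-appearance counts)."""
    if not results:
        raise ValueError("Run at least one query before scoring.")
    rate = sum(r.appeared for r in results) / len(results)
    # Thresholds from Step 2: 50%+ consistent, 20-50% spotty, under 20% absent.
    if rate >= 0.5:
        bucket = "Consistent"
    elif rate >= 0.2:
        bucket = "Spotty"
    else:
        bucket = "Absent"
    rivals = Counter(name for r in results for name in r.competitors)
    return rate, bucket, rivals
```

The competitor counter shows who occupies the answers you're missing from, which is usually the most actionable part of the audit.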

Step 3 -- Influence audit: For queries where you do appear, record the framing language verbatim. Categorize it: Strong positive (leader, top choice, highly recommended) / Neutral (an option, available, popular) / Weak (some users find, budget alternative, lesser known).
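
A keyword pass can pre-sort the verbatim framing before you review it. The sketch below uses only the example phrases listed in Step 3 and matches whole words case-insensitively. The precedence (weak hedges first, then strong, then neutral) is our assumption, chosen so that a description containing one of the listed hedges is never labeled strong. Expect to extend the phrase lists for your own category.

```python
import re

# Phrase lists taken from the Step 3 examples; dict order is the check order.
FRAMING = {
    "Weak": ["some users find", "budget alternative", "lesser known"],
    "Strong positive": ["leader", "top choice", "highly recommended"],
    "Neutral": ["an option", "available", "popular"],
}


def classify_framing(text: str) -> str:
    """Label verbatim framing language; hedges are checked first so they win ties."""
    for label, phrases in FRAMING.items():
        for phrase in phrases:
            # Whole-word match so "available" doesn't fire on "unavailable".
            if re.search(rf"\b{re.escape(phrase)}\b", text, re.IGNORECASE):
                return label
    return "Unclassified"
```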

The combination tells you exactly where to focus. Eligibility failures need entity and knowledge base work, and this is foundational. Everything else is premature until it's resolved. Inclusion gaps need content and topical authority. Influence issues need PR, reviews, and third-party validation.
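
The prioritization rule can be written down as a tiny function, which is handy if you rerun the audit on a schedule. This sketch assumes you've summarized each layer with the labels from Steps 1-3 (for influence, the most common label across queries). Eligibility gates everything else; inclusion and influence work can run in parallel.

```python
from typing import List


def next_actions(eligibility: str, inclusion: str, influence: str) -> List[str]:
    """Return the work to prioritize, given one summary label per layer."""
    # Eligibility is foundational: until it's clear, other work is premature.
    if eligibility != "Clear and correct":
        return ["Eligibility: entity and knowledge base work"]
    actions: List[str] = []
    if inclusion != "Consistent":
        actions.append("Inclusion: content and topical authority")
    if influence != "Strong positive":
        actions.append("Influence: PR, reviews, and third-party validation")
    return actions
```

An empty list doesn't mean you're done; it means the next audit should focus on whether those results stay stable across models and over time.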

Related Guides: Explore AI Visibility in Depth

This post defines the framework. For actionable guidance on each aspect:

Building from scratch: If you're starting from zero, Building AI Visibility from Scratch: A Beginner's Roadmap covers the step-by-step process from eligibility through influence.

Improving existing visibility: For brands already appearing but underperforming, How to Improve Your AI Visibility: A Step-by-Step Guide focuses on moving from inclusion to influence.

Startup-specific strategies: Competing against established brands with limited resources requires different tactics. See AI Visibility for Startups: How to Compete with Bigger Brands in AI Search.

The macro picture: For context on why AI visibility matters now, The AI Visibility Gap: Why 90% of Brands Are Invisible to AI in 2026 covers the market forces at play.

SEO vs. AI visibility: If you're wondering why strong SEO isn't translating to AI presence, Why SEO Alone Won't Give You AI Visibility explains the disconnect.