Search engines are losing their grip on your audience. Gartner predicts a 25% drop in traditional search volume by 2026, and when Google does show results, AI Overviews are eating the clicks. Pew Research found that users click a result only 8% of the time when an AI summary appears, versus 15% when it doesn't. The old game was ranking. The new game is getting cited.

Being cited by AI is the new click. When ChatGPT, Perplexity, or Gemini answers a question and names your brand as the source, that carries more weight than a blue link on page one. The user doesn't need to click through to trust you. The AI already vouched for you.

Getting cited by AI means structuring your content so that large language models like ChatGPT, Perplexity, and Gemini reference it as a source when answering user questions. This requires authoritative, well-structured content with clear claims, named entities, and factual data that AI models can confidently attribute.

This guide breaks down how AI models choose what to cite. It covers what makes content citable at both the strategic and sentence level, plus what you can do today to increase your citation rate across every major AI platform.

Last updated April 2026.

Why Citation Is the New Visibility Metric

The shift from clicks to citations isn't gradual. It's a cliff. Users are asking AI assistants their questions directly, and those assistants are synthesizing answers from a handful of sources. If you aren't one of those sources, you don't exist in the conversation.

Traditional SEO metrics like impressions and click-through rate are becoming unreliable indicators of brand visibility. A brand can rank #1 for a keyword and still lose traffic because an AI Overview absorbed the answer before anyone scrolled down.

Citation is now the atomic unit of AI visibility. Each time an AI model names your content as a source, it signals to the user that your brand is an authority. Unlike a search result that competes with nine others on the page, an AI citation often stands alone or shares space with only two or three other sources.

How AI Models Decide What to Cite

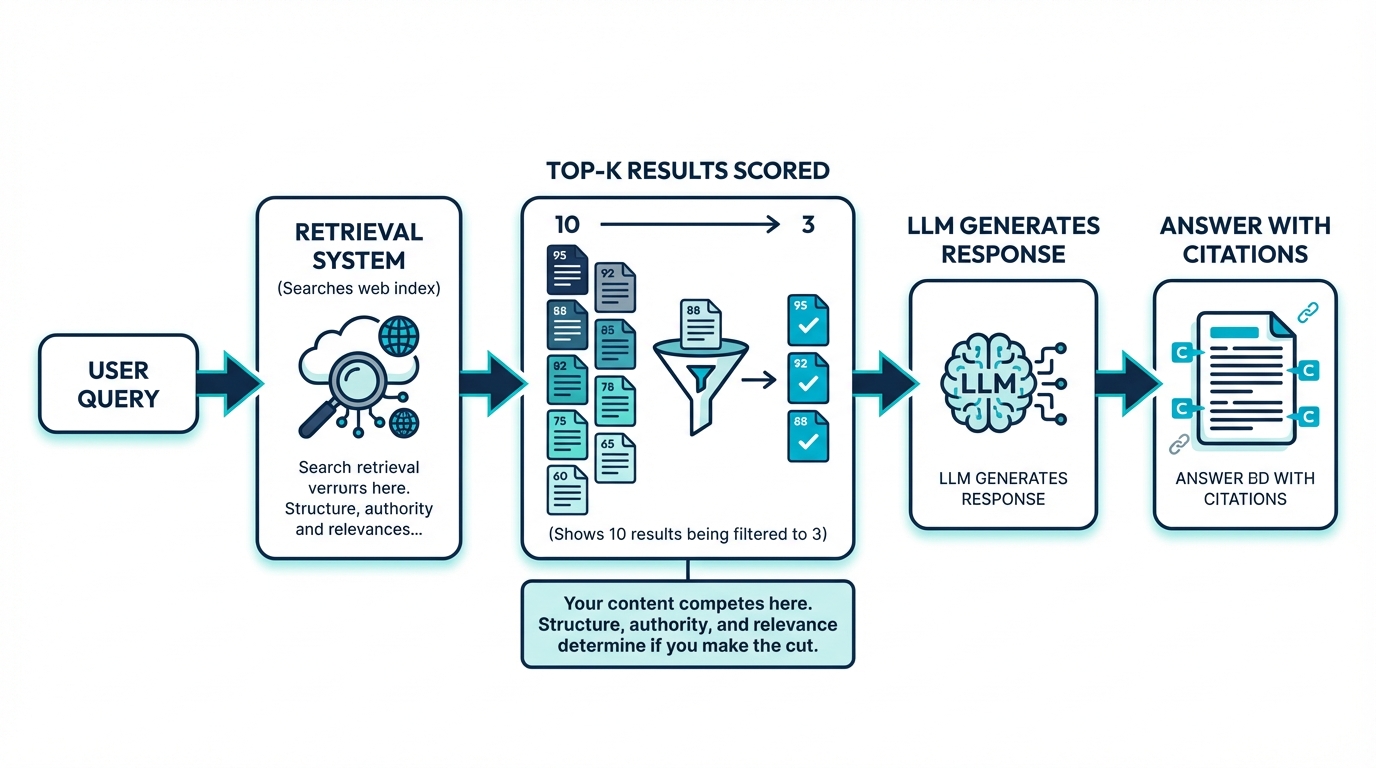

Most AI systems that cite sources use retrieval-augmented generation (RAG). Before an LLM generates a response, a retrieval layer searches indexed content, scores individual passages for relevance, and passes only the top-K results to the language model. Research presented at NeurIPS 2024 on passage-level ranking confirms that this filtering step is where most content gets eliminated.

Your page doesn't get passed to the model in full. Individual paragraphs and sections compete against passages from every other indexed source. A paragraph that buries its point behind three sentences of setup will score lower than a competing passage that leads with a direct answer.
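To make the passage-level competition concrete, here is a minimal Python sketch of a retrieval step. It is a toy under stated assumptions, not a description of how any particular AI platform works: the bag-of-words cosine score and the names `tokenize`, `cosine`, and `top_k_passages` are illustrative, and production systems typically rely on learned embeddings and rerankers.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    shared = set(a) & set(b)
    dot = sum(a[t] * b[t] for t in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k_passages(query, passages, k=2):
    """Score every passage against the query and keep the k best."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(p))), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Hypothetical passages: only the second one leads with a direct answer.
passages = [
    "Before we get into definitions, it helps to look back at how marketing evolved.",
    "Entity density is the share of named people, products, and metrics in a passage.",
    "Our team has years of experience helping brands grow.",
]
for score, passage in top_k_passages("what is entity density", passages, k=1):
    print(round(score, 2), passage)
```

Even with this crude scoring, the passage that states its answer directly is the only one that survives the top-K cut. The setup-heavy passages score zero because they share no terms with the query.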

At friction AI, we track AI citation behavior across thousands of brand queries each month. The consistent pattern we see: passage structure determines citation rate more reliably than domain authority or content length. Sites we'd expect to dominate (high DR, established brands) routinely get passed over for leaner, better-structured sources.

Authority Signals

AI retrieval systems lean on many of the same authority signals that search engines use: domain authority, backlink profiles, and how frequently other sources reference your content. Research on citation bias in LLMs shows that models disproportionately surface content that is already widely cited across the web.

Content Structure

Structure determines whether the retrieval system can extract a clean, quotable passage from your page. Content that buries its key claims deep in long paragraphs gets passed over in favor of content with clear headings, definitions near the top, and discrete, self-contained sections.

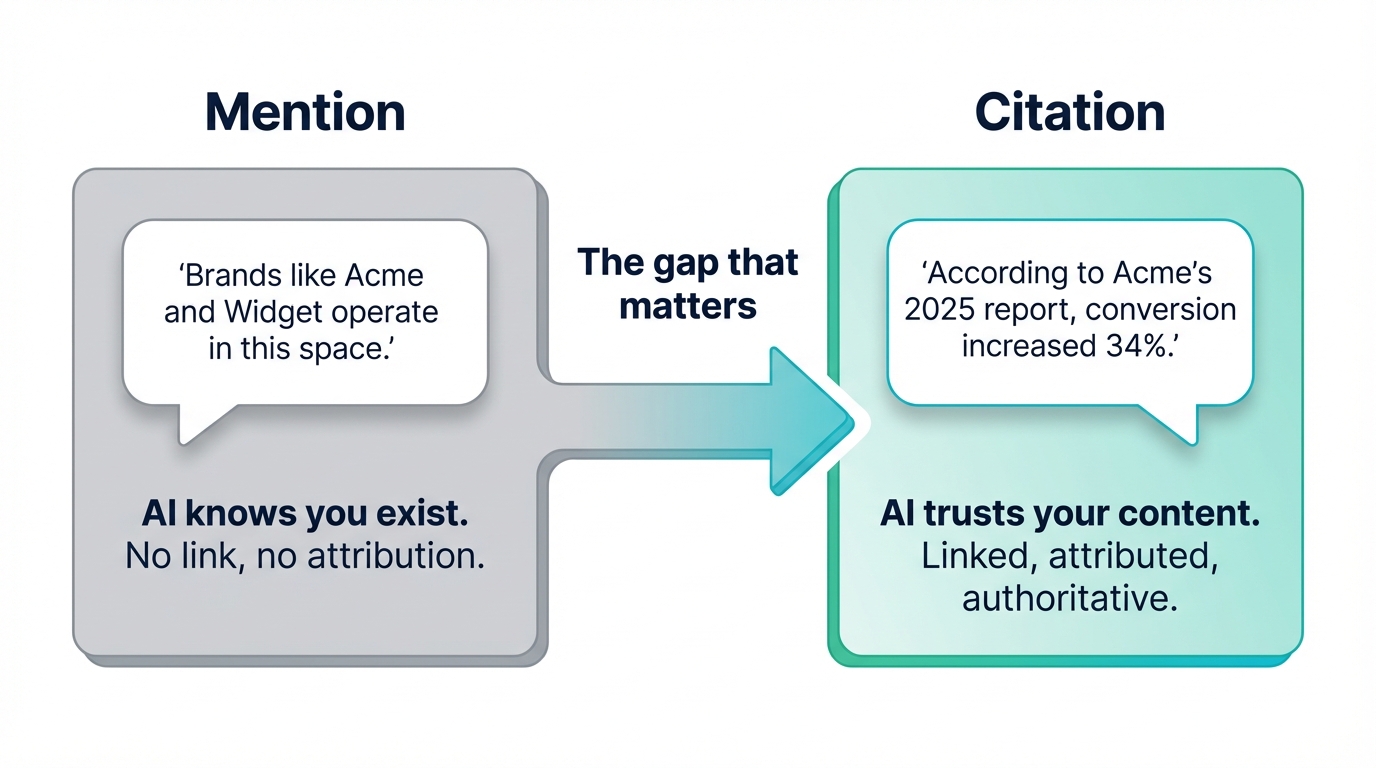

Mentioned vs. Cited: A Critical Difference

There's a meaningful gap between an AI mentioning your brand and an AI citing your content as a source. A mention might look like: "Brands like Acme and Widget Co. operate in this space." A citation looks like: "According to Acme's 2025 industry report, conversion rates increased 34%."

Mentions signal that the AI knows your brand exists. Citations signal that the AI trusts your content enough to ground its answer in it. Only citations drive the kind of authority that compounds over time.

To move from mentioned to cited, your content needs to contain original data, clear definitions, or unique analysis that the model can attribute to you specifically.

What Makes Content Citable

A study of 8,000 AI citations reveals clear patterns.

Put Definitions in the First Third

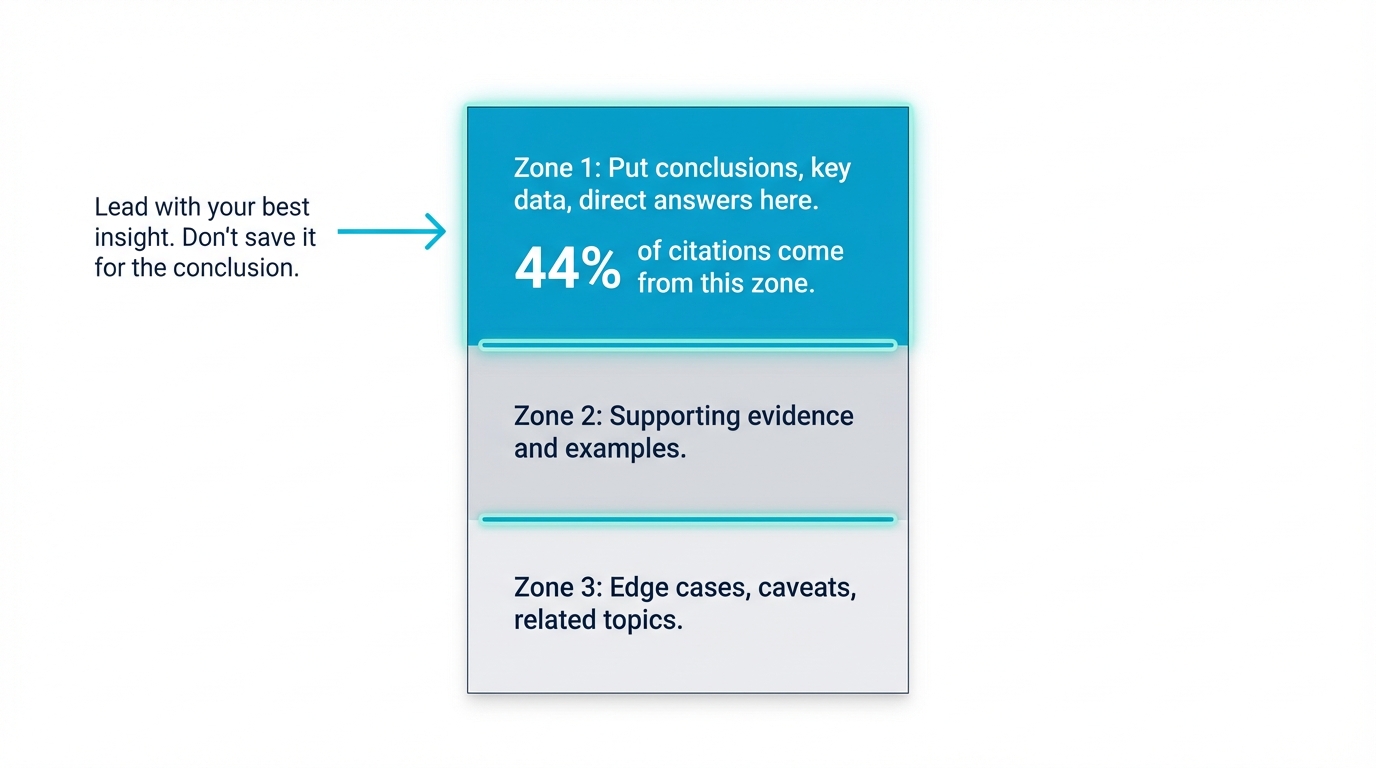

Research on ChatGPT's citation behavior found that 44% of citations pull from the first third of the content. AI retrieval systems weight early content heavily, so your clearest, most citable statements need to appear near the top.

Don't save your best insight for the conclusion. Lead with it. Define terms, state findings, and present your core argument within the first few paragraphs.
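One rough way to audit this is to measure where a key statement falls in a draft. The sketch below is an assumption-laden helper, not a standard tool: `relative_position` and `in_first_third` are hypothetical names, and position is measured in whitespace-separated words.

```python
def relative_position(text, phrase):
    """Return how far into the text the phrase starts, as a 0-1 fraction of words.

    Returns None if the phrase is absent or the text is empty.
    """
    idx = text.lower().find(phrase.lower())
    total_words = len(text.split())
    if idx == -1 or total_words == 0:
        return None
    words_before = len(text[:idx].split())
    return words_before / total_words


def in_first_third(text, phrase):
    """True when the phrase starts within the first third of the text."""
    pos = relative_position(text, phrase)
    return pos is not None and pos < 1 / 3


# Hypothetical draft: the definition opens the page, the caveat comes late.
draft = (
    "Entity density is the share of named things in a passage. "
    "Here is some background on why that matters for retrieval. "
    "One caveat applies to very short pages."
)
print(in_first_third(draft, "Entity density is"))
print(in_first_third(draft, "One caveat"))
```

Running this over the definitions and findings you most want cited gives a quick list of statements that sit too deep in the page.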

Increase Entity Density

Entity density refers to the concentration of specific, named things in your content: people, products, companies, metrics, dates, and locations. Content that AI systems cite tends to have a proper noun density of around 20.6%, compared with the 5-8% typical of generic marketing copy.

Compare "sales went up last year" with "Acme's North American SaaS revenue grew 23% in Q3 2025." The second version is far more citable because it contains specific entities the model can match and attribute.
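For a quick self-check, the following Python sketch approximates proper noun density with a capitalization heuristic. This is an assumption for illustration only: the function name `proper_noun_density` is hypothetical, the figures quoted above were almost certainly measured with a different method (such as a part-of-speech tagger), and the heuristic skips the first word of each sentence, so it undercounts names that open a sentence.

```python
import re


def proper_noun_density(text):
    """Estimate the share of words that look like proper nouns.

    Heuristic: a word counts if it starts with an uppercase letter and is
    not the first word of its sentence. Pure numbers are ignored entirely.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    total = 0
    proper = 0
    for sentence in sentences:
        words = re.findall(r"[A-Za-z][A-Za-z0-9'-]*", sentence)
        total += len(words)
        proper += sum(1 for word in words[1:] if word[0].isupper())
    return proper / total if total else 0.0


print(proper_noun_density("sales went up last year"))  # 0.0
print(proper_noun_density("Acme's North American SaaS revenue grew 23% in Q3 2025."))  # 0.5
```

The absolute numbers from a heuristic like this are not comparable to published benchmarks, but the relative gap between two drafts of the same passage is a useful signal.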

Use Comparison Tables and Structured Data

Tables, bulleted comparisons, and structured lists are citation magnets. AI models can extract discrete facts from structured content far more reliably than from flowing prose.

Maintain Clean Heading Hierarchy

Your heading structure acts as a table of contents for retrieval systems. Each H2 should introduce a distinct subtopic. Each H3 should break that subtopic into specific, answerable questions.

Writing Rules for Citable Content

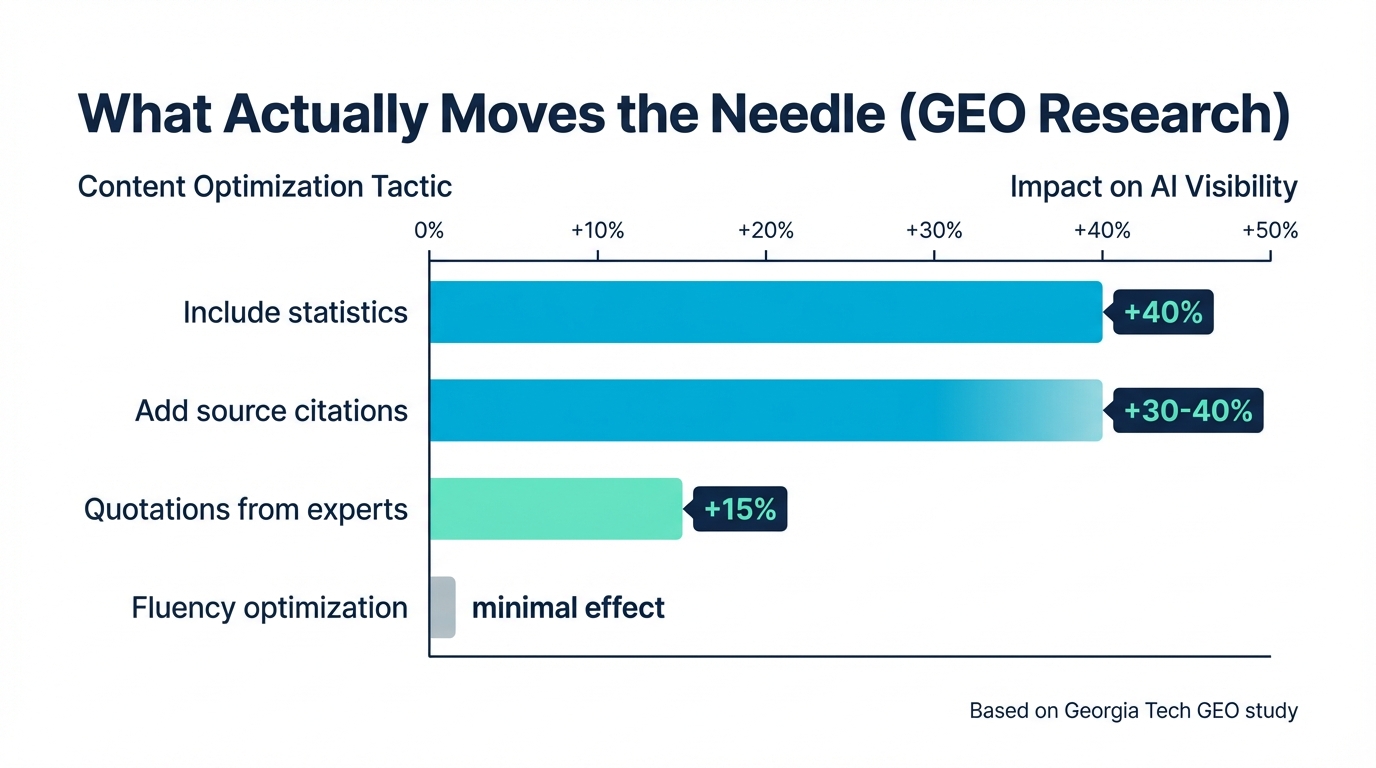

Researchers from Princeton, Georgia Tech, the Allen Institute for AI, and IIT Delhi published a study on Generative Engine Optimization that quantified what makes content visible to AI systems. The results were striking: including relevant statistics boosted content visibility by 40%, and adding source citations increased visibility by 30-40% (GEO: Generative Engine Optimization, arXiv; KDD 2024).

For comparison, adding quotations from authoritative sources improved visibility by about 15%. Fluency optimization had minimal measurable effect.

Lead With the Answer

Every section should open with its core claim or finding. Answer-first content structures align with how both AI systems and impatient readers process information. Don't build to your conclusion. State it, then support it.

Cite Specific Numbers

"Revenue grew 34% year-over-year" beats "revenue grew significantly." Name your sources inline. Don't save attribution for a footnote.

An analysis of 304,805 URLs by RankScience found that formatting and structure often outperformed content rigor in determining AI citation likelihood. But when you combine clear formatting with hard data, you create passages that retrieval systems rank highest.

Define Terms Explicitly

When you introduce a concept, define it in the same sentence or the one immediately following. AI systems extract definitions as high-confidence passages. If your content is the clearest definition available for a term in your category, you become the default citation source.

Use Original Data

Proprietary research, survey results, and benchmark data give AI systems something they can't find anywhere else. If your passage could appear on any competitor's site with a name swap, it won't stand out in passage-level ranking.

Structure Your Content for Retrieval

Think of each page as having three zones. Zone one (the top third) is where you place conclusions, key data points, and direct answers. Zone two expands with supporting evidence and examples. Zone three covers edge cases, caveats, and related topics.

Positioning Guidelines

- Put your strongest claims in the first third of the page

- Use question-based headings that mirror how people query AI systems

- Keep paragraphs to 2-4 sentences. Shorter paragraphs create cleaner passage boundaries

- Bold key phrases within lists and paragraphs
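The paragraph-length guideline is easy to check mechanically. Below is a minimal sketch that flags paragraphs running past four sentences. The names `sentence_count` and `flag_long_paragraphs` are hypothetical, and the sentence splitter is deliberately naive: it treats any period, question mark, or exclamation mark followed by whitespace as a boundary, so abbreviations such as "vs." will be miscounted.

```python
import re


def sentence_count(paragraph):
    """Count sentences using a naive end-of-sentence punctuation split."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return len([part for part in parts if part])


def flag_long_paragraphs(text, max_sentences=4):
    """Return (paragraph_index, sentence_count) for paragraphs over the limit.

    Paragraphs are assumed to be separated by blank lines.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = sentence_count(paragraph)
        if count > max_sentences:
            flagged.append((index, count))
    return flagged


# Hypothetical draft: the second paragraph has five sentences.
draft = "Lead with the answer. Then support it.\n\nOne. Two. Three. Four. Five."
print(flag_long_paragraphs(draft))  # [(1, 5)]
```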

The Citation Bias and How to Use It

LLMs exhibit a heightened citation bias, meaning they disproportionately cite sources that are already frequently cited elsewhere on the web. This creates a compounding loop. The more you're cited, the more you'll be cited.

Breaking into that loop requires intentional distribution:

- Publish original research that other outlets want to reference

- Contribute expert quotes to industry publications

- Create definitive guides that become the canonical source on a topic

- Build backlinks from authoritative domains in your vertical

The flip side is encouraging. Originality.AI found that 48% of AI Overview citations come from pages outside the top 100 in traditional search rankings. You don't need to rank on page one to get cited. You need content that the retrieval system finds relevant, structured, and trustworthy.

What to Stop Doing

Some common content habits actively hurt your AI citation chances.

- Generic topic coverage. Passages with no original data or distinctive analysis read the same as every competitor's, so they lose out in passage-level ranking.

- Burying answers. Introductions that take 200 words to reach the first useful claim push your best content out of the high-scoring zone.

- Inconsistent data across channels. A Nature Communications study found that 50-90% of LLM citations don't fully support the claims they're attached to. When the same brand publishes conflicting figures in different places, accurate attribution becomes even less likely.

- Ignoring your own site. Analysis of 17.2 million AI citations by Yext found that 86% come from sources brands directly control. Your website is your primary citation source, not third-party press coverage.

SE Ranking research shows that top-10 organic results have dropped from 76% to 38% of AI citations. Traditional SEO ranking alone no longer guarantees AI visibility. The content itself has to earn its place.

Actionable Steps by Platform

ChatGPT (with Browse and Search)

ChatGPT uses Bing's index for web retrieval. Without retrieval grounding, GPT-4o hallucinates citations 78-90% of the time, so the accuracy of ChatGPT's citations depends heavily on the documents it retrieves.

- Optimize for Bing by submitting your sitemap to Bing Webmaster Tools

- Use clear, factual language that the model can quote directly

- Include structured data markup (FAQPage schema, HowTo schema) to improve retrieval signals
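As an illustration of the structured data point, here is a short Python sketch that generates FAQ markup as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` and the sample question are assumptions for the example, and whether any given AI platform reads this markup is not something the sketch can confirm.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Sample pair for illustration; swap in the real FAQ content of the page.
faq = faq_jsonld([
    ("Do AI citations pass link equity?", "Not in the traditional SEO sense."),
])

# Emit the markup wrapped in the script tag used to embed JSON-LD in HTML.
print('<script type="application/ld+json">')
print(json.dumps(faq, indent=2))
print("</script>")
```

Generating the markup from the same source as the visible FAQ keeps the two from drifting apart.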

Perplexity

Perplexity cites aggressively and transparently. It retrieves multiple sources per answer and displays them prominently.

- Publish frequently so your content appears in Perplexity's crawl

- Front-load answers in your content structure

- Use specific, queryable headings that match the types of questions users ask

Google AI Overviews

AI Overviews pull from Google's own index. Traditional Google SEO still matters, but the selection criteria differ from organic ranking.

- Target informational queries where AI Overviews are most likely to appear

- Write content that directly answers the query in a concise, extractable format

- Use list and table formats for comparison and how-to queries

Gemini

Gemini draws from Google's index and knowledge graph. Structured content with strong entity signals performs best.

- Build your Google Knowledge Panel

- Claim and optimize your Google Business Profile

- Use schema markup extensively to reinforce entity relationships
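One common way to express entity relationships in schema markup is an `Organization` object whose `sameAs` property lists the brand's other official profiles. The sketch below builds one in Python. The brand name and every URL are placeholders, `organization_jsonld` is a hypothetical helper, and nothing here guarantees how Gemini or the knowledge graph will use the data.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object linking a brand to its profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs points at other pages that unambiguously describe the same entity.
        "sameAs": list(same_as),
    }


# Placeholder values for illustration only.
org = organization_jsonld(
    name="Acme",
    url="https://www.example.com",
    same_as=[
        "https://www.example.com/profiles/social",
        "https://www.example.com/profiles/directory",
    ],
)
print(json.dumps(org, indent=2))
```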

How to Measure Your AI Citation Rate

You can't optimize what you don't measure. Manual spot-checking works for small-scale monitoring: search your brand and key topics across ChatGPT, Perplexity, and Google AI Overviews, and record which queries return citations to your content. But this doesn't scale.
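If you do start with manual spot-checks, a small script can turn the log into a per-platform citation rate. This is a minimal sketch assuming each check is recorded as a `(platform, query, was_cited)` tuple. That record format and the function name `citation_rate_by_platform` are assumptions, and the sample rows are made up for illustration.

```python
from collections import defaultdict


def citation_rate_by_platform(checks):
    """Return the share of checked queries that cited your content, per platform."""
    totals = defaultdict(int)
    cited = defaultdict(int)
    for platform, _query, was_cited in checks:
        totals[platform] += 1
        if was_cited:
            cited[platform] += 1
    return {platform: cited[platform] / totals[platform] for platform in totals}


# Made-up spot-check log for illustration.
checks = [
    ("Perplexity", "what is entity density", True),
    ("Perplexity", "how to get cited by ai", False),
    ("ChatGPT", "what is entity density", False),
]
print(citation_rate_by_platform(checks))
```

Re-running the same query set on a fixed schedule makes the rates comparable over time.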

Dedicated AI visibility tools track your citation rate across models, measure how your brand appears in AI-generated responses, and identify gaps where competitors are getting cited and you aren't. For a landscape view of the category, see our AI visibility tools landscape across dedicated AEO platforms, SEO suites, brand monitors, and DIY approaches.

What Comes Next

To go deeper on specific aspects, explore these related resources:

- What Types of Content Do AI Models Actually Cite?: A platform-by-platform breakdown of citation preferences

- AI Citation Tracking: How to Monitor AI Sources: Tools and methods for tracking your citation rate

- AI Visibility for Startups: How early-stage brands can build AI visibility without enterprise budgets

- Tracking Your Brand vs. Competitors in AI: Monitor how AI represents you relative to your competition

- How to Control What AI Says About Your Brand: Strategies for shaping your brand's AI narrative

- How to Improve Your AI Visibility: A comprehensive guide to ranking in AI-powered search

- Third-Party Sources AI Models Trust: Which external sources carry the most weight

- How to Train LLMs to Understand Your Brand: Building the structured data foundation

Frequently Asked Questions

What types of content do AI models cite most?

AI models most frequently cite content that contains original data, named statistics, expert quotes, step-by-step processes, and clear definitions. Structured formats like tables, numbered lists, and comparison charts increase citation likelihood because they give AI models discrete, attributable claims to reference in their answers.

How long does it take for AI to start citing your content?

AI citation timelines vary by platform. Google AI Overviews can surface new content within days of indexing. Perplexity indexes web content in near real-time, making it the fastest platform for new citations. When web search is off, ChatGPT and Claude draw on training data that is updated only periodically, so new citations there may take weeks to months.

Does content length affect AI citations?

Content length has diminishing returns. Citable passages are short, typically 50-150 words, so a 3,000-word article is cited no more reliably than a 1,500-word article if both contain well-structured, data-dense passages. Focus on passage-level density, not total word count. Long-form content helps with topic authority, but it doesn't directly improve per-passage citation rates.

Do AI citations pass link equity?

Not in the traditional SEO sense. AI citations are synthesized references in AI-generated answers, not crawled hyperlinks. Google's ranking algorithms don't treat a Perplexity citation as a backlink. That said, AI citations drive referral traffic (especially from Perplexity), which compounds SEO signals through engaged user sessions, lower bounce rates, and rising brand-search volume.

Which AI platform cites sources most transparently?

Perplexity cites every answer with numbered inline source links. Google AI Overviews show source panels but omit passage-level attribution. ChatGPT cites when using web search mode, not default mode. Claude cites when web search is enabled. Gemini cites in Grounding mode. For measuring citation rate, Perplexity offers the clearest signal because every citation is trackable via referral traffic.

Does domain authority matter for AI citations?

Yes, but less than for traditional SEO. AI retrieval systems weigh domain authority alongside passage-level factors. A high-authority domain with poorly structured passages often loses to a lower-authority domain with clear, data-rich content. Authority sets eligibility for the retrieval pool; passage structure wins the citation within it.