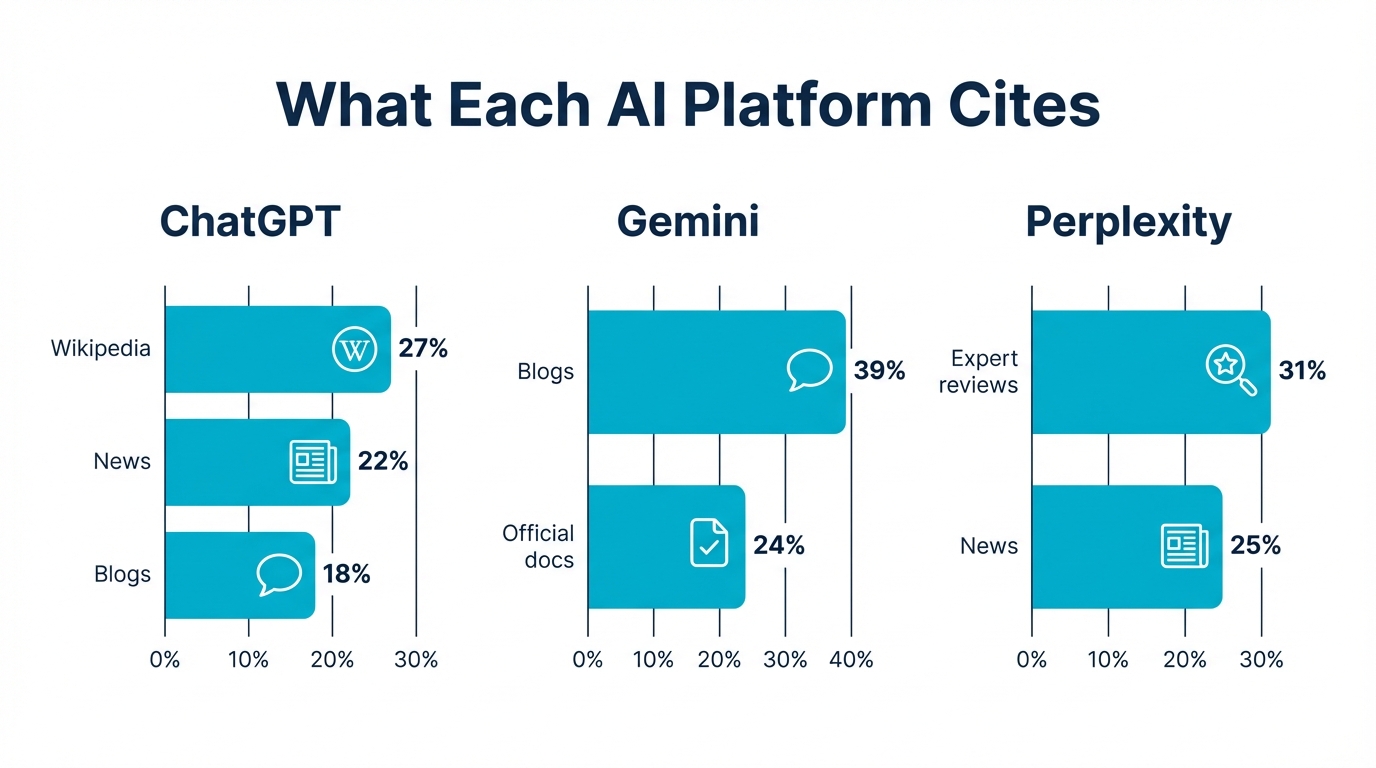

ChatGPT cites Wikipedia in 27% of responses. Gemini pulls 39% of its sources from blogs. Perplexity favors editorial reviews and expert analysis. Each AI platform has distinct citation preferences, and what earns you a citation on one may do nothing on another.

A study of 41 million search results found only 12% overlap between ChatGPT citations and Google's top organic results. The rules are different for each platform. This guide breaks down the citation preferences of ChatGPT, Gemini, Perplexity, and Google's AI Overviews, which content formats perform best, and how to tailor your approach.

What Each AI Platform Prefers to Cite

Not all AI models pull from the same well. An analysis of over 8,000 AI citations revealed sharp differences in source preference across major platforms:

- ChatGPT cites Wikipedia in roughly 27% of responses. It favors structured, encyclopedic content with clear factual claims. Official documentation and well-known reference sites also appear frequently.

- Gemini leans toward blog content, with blogs making up about 39% of its cited sources. Long-form posts that explain concepts thoroughly perform well here.

- Perplexity gravitates toward editorial content, expert reviews, and journalism. It tends to surface sources that carry topical authority and provide original analysis.

The takeaway: a single content strategy won't cover all three. You need to think about which platforms matter most for your audience and tailor your approach accordingly. A Wikipedia-style factual page might earn ChatGPT citations while doing nothing for Perplexity, where an in-depth expert review would land instead.

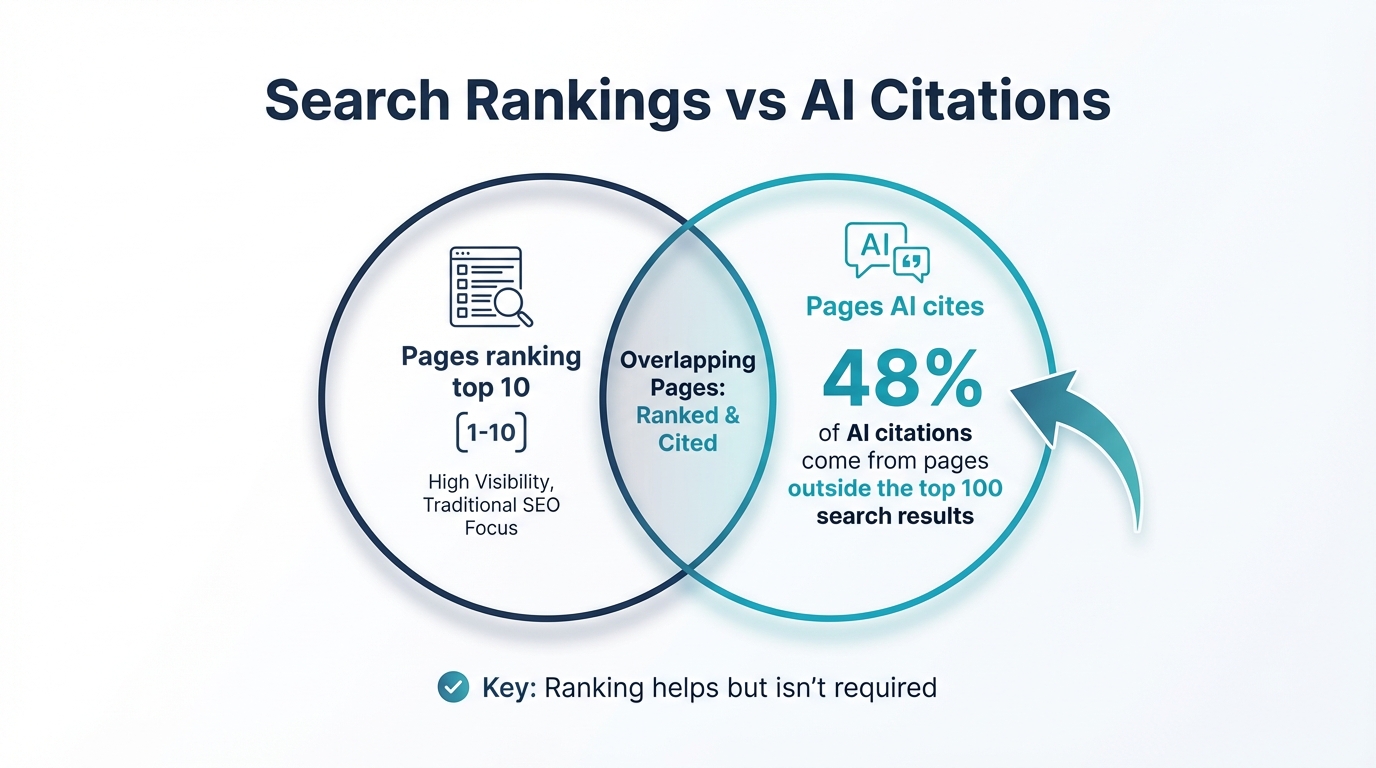

Why Traditional Search Rankings Don't Predict AI Citations

If your SEO strategy is built around climbing Google's organic results, don't assume those rankings carry over into AI search. The overlap is thin.

That 12% overlap figure between ChatGPT and Google results tells a striking story: nearly 9 out of 10 sources ChatGPT cites don't appear in Google's top results for the same query. Backlink profiles, one of the strongest traditional ranking signals, explain less than 3% of the variation in AI citations.
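To see what an overlap figure like this measures, you can run the same comparison on your own queries. Below is a minimal Python sketch, assuming you have already collected two lists of URLs for one query: the sources an AI answer cited and the pages in Google's top organic results. The function names and the normalization rules (ignore the scheme, a leading `www.`, and a trailing slash) are illustrative assumptions, not the method used in the study above.

```python
from urllib.parse import urlparse


def normalize(url: str) -> str:
    """Reduce a full URL (scheme included) to host + path so trivial variants match."""
    parsed = urlparse(url.strip())
    host = parsed.netloc.lower().removeprefix("www.")  # Python 3.9+
    return host + parsed.path.rstrip("/")


def citation_overlap(ai_cited: list[str], organic_top: list[str]) -> float:
    """Share of AI-cited URLs that also appear in the organic top results."""
    cited = {normalize(u) for u in ai_cited}
    organic = {normalize(u) for u in organic_top}
    if not cited:
        return 0.0
    return len(cited & organic) / len(cited)


# Hypothetical data for a single query.
ai_cited = [
    "https://en.wikipedia.org/wiki/SEO",
    "https://www.example.com/guide/",
    "https://blog.example.org/post",
]
organic_top = ["http://example.com/guide", "https://other.com/"]

print(f"{citation_overlap(ai_cited, organic_top):.0%} of cited sources also rank organically")
```

A result of 12% on this measure means 88% of cited sources are absent from the organic list, which is where the "nearly 9 out of 10" framing comes from.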

What does predict citation? Content structure, topical depth, and how directly a piece answers the question being asked. AI models parse content differently than search engine crawlers. They're looking for passages that can be extracted and presented as coherent answers, not pages optimized around keyword density or link equity.

This doesn't mean traditional SEO is worthless. It means treating AI citation as a separate channel with its own optimization criteria.

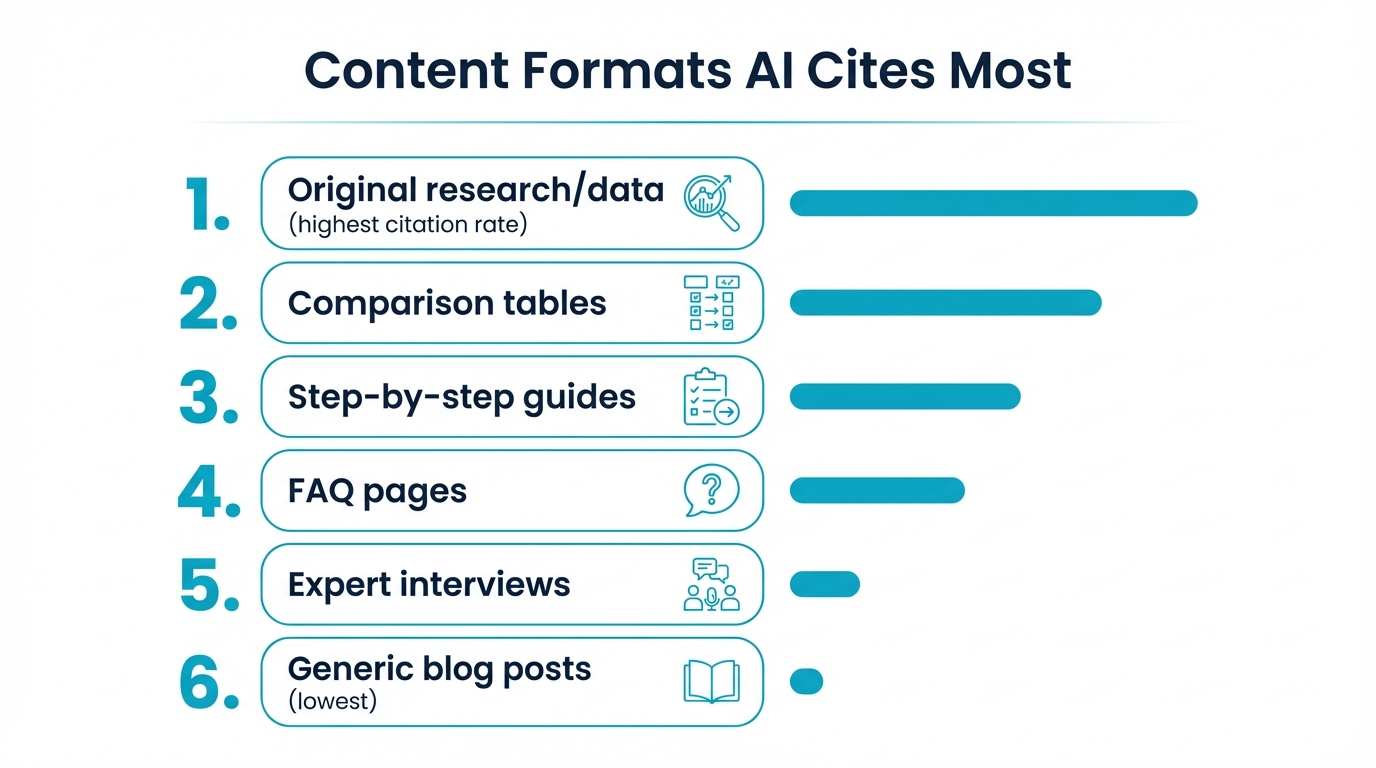

Content Formats That Get Cited Most

Structure matters more than length. Research into the content traits LLMs quote most frequently points to three format patterns that dramatically increase citation rates:

- Comparison tables are cited at 2.8x the rate of plain prose covering the same information. When a model needs to answer "which is better" or "how do these compare," a well-structured table gives it an extractable, ready-made response.

- Clear heading hierarchy (H2/H3 nesting that mirrors the questions users ask) leads to 3.2x higher citation rates. Models use headings as signals to locate the right passage for a given query.

- Answer-first formatting (leading with the conclusion, then supporting it) gets cited 3x more often than content that buries the answer deep in the text.

One nuance worth noting: LLMs underselect sentences containing numeric data by 22.6% when summarizing content. This means stats-heavy paragraphs are less likely to be pulled verbatim. If you want a specific data point cited, pair the number with a plain-language interpretation in the same sentence.

Formatting Quick Wins

- Use descriptive H2s that match common question patterns ("How much does X cost?" rather than "Pricing Information")

- Place your core answer in the first 1-2 sentences under each heading

- Build comparison tables with clear column headers whenever you're evaluating options

- Keep paragraphs short so models can extract clean passages without truncating mid-thought
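The first two checks in this list are easy to automate. Here is a rough Python sketch, assuming your drafts are stored as Markdown with `##` and `###` headings. The function name, the list of question words, and the 40-word limit for the opening are all assumptions chosen for illustration; tune them to your own content.

```python
import re

# Assumed list of words that typically open a question-style heading.
QUESTION_STARTERS = {
    "how", "what", "why", "which", "when", "where", "who",
    "can", "does", "do", "is", "are", "should",
}


def audit_sections(markdown: str, max_opening_words: int = 40) -> list[dict]:
    """Report, per H2/H3 section, whether the heading reads like a question
    and how long the first two sentences under it run."""
    # re.split with one capture group yields [preamble, heading, body, heading, body, ...]
    parts = re.split(r"^#{2,3}[ \t]+(.+)$", markdown, flags=re.MULTILINE)
    report = []
    for heading, body in zip(parts[1::2], parts[2::2]):
        heading = heading.strip()
        words = heading.split()
        first_word = words[0].lower() if words else ""
        first_paragraph = body.strip().split("\n\n")[0]
        sentences = re.split(r"(?<=[.!?])\s+", first_paragraph)
        opening_words = len(" ".join(sentences[:2]).split())
        report.append({
            "heading": heading,
            "question_shaped": heading.endswith("?") or first_word in QUESTION_STARTERS,
            "opening_words": opening_words,
            "opening_too_long": opening_words > max_opening_words,
        })
    return report


sample = (
    "# Guide\n\n"
    "## How much does X cost?\n\n"
    "X costs ten dollars a month. Annual plans cost less.\n\n"
    "### Pricing Information\n\n"
    + "word " * 50 + "end.\n"
)

for row in audit_sections(sample):
    print(row)
```

The script only flags candidates for review. It cannot tell whether the opening sentences actually answer the heading's question, so a human read is still needed.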

The AI Overview Overlap Question

Google's AI Overviews deserve special attention because they sit at the intersection of traditional search and AI citation. Research from BrightEdge shows that the overlap between AI Overview sources and top organic results grew from 32% to 54% over 16 months.

That trend suggests AI Overviews are gradually pulling more from pages that already rank at the top of Google's organic results. But the averages hide enormous variation by industry. Healthcare and finance queries show much higher overlap, because Google favors established, authoritative sources for YMYL ("Your Money or Your Life") topics. Niche B2B or technical queries show far less, with AI Overviews surfacing specialist content that doesn't rank in the traditional top 10.

For your strategy, this means that if you're in a regulated or high-trust industry, ranking well organically gives you a meaningful shot at AI Overview inclusion. If you're in a less regulated space, you'll need to focus on the structural and topical signals described above.

Being Cited Means Being Clicked

There's a common fear that AI citations cannibalize traffic. The data tells a more complex story.

Yes, AI Overviews have driven a 61% drop in organic click-through rates for queries where they appear. But content that gets cited within those AI responses earns 35% more organic clicks than uncited content on the same results page. Citation acts as a visibility boost: users see your brand mentioned in the AI answer and are more likely to click through to your site.

The implication is clear. You can't prevent AI from summarizing your topic. But if your content is the one being cited, you capture a disproportionate share of the clicks that remain. The brands that lose are those whose content gets summarized without attribution, while a competitor's page gets the citation link.

Building a Multi-Platform Citation Strategy

Given the platform differences, a practical approach looks like this:

- Audit your current citations. Check where your content appears (and doesn't) across ChatGPT, Gemini, Perplexity, and Google AI Overviews for your target queries.

- Match format to platform. Create structured, factual reference content for ChatGPT visibility. Develop thorough blog content for Gemini. Publish expert analysis and reviews for Perplexity.

- Restructure existing content. You don't need to start from scratch. Add comparison tables, tighten heading hierarchy, and move answers to the top of each section.

- Track citation as a metric. Traditional rank tracking won't capture AI visibility. You need tools that monitor whether your content is being referenced in AI-generated responses.
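If you log AI responses yourself rather than relying on a dedicated tool, the tracking step can start as a simple per-platform citation rate. The Python sketch below assumes you already have observations in the form `(platform, query, cited_urls)`. How you collect them is out of scope here, and the function name and data shape are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def citation_rate(observations, domain: str) -> dict[str, float]:
    """For each platform, the share of logged responses that cite `domain`
    or one of its subdomains at least once."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _query, cited_urls in observations:
        totals[platform] += 1
        hosts = {urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls}
        if any(h == domain or h.endswith("." + domain) for h in hosts):
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical log entries for illustration only.
observations = [
    ("chatgpt", "best crm for startups", ["https://en.wikipedia.org/wiki/CRM", "https://www.example.com/crm-guide"]),
    ("chatgpt", "crm pricing comparison", ["https://other.com/pricing"]),
    ("perplexity", "best crm for startups", ["https://blog.example.com/crm-review"]),
    ("gemini", "best crm for startups", []),
]

for platform, rate in citation_rate(observations, "example.com").items():
    print(f"{platform}: cited in {rate:.0%} of logged responses")
```

Tracking the rate per platform, rather than as one blended number, keeps the platform differences described earlier visible in your reporting.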

What Comes Next

This post is part of a larger series on earning AI citations. For related reading:

- How to Get Your Content Cited by AI covers the full strategic framework for citation optimization.

- How to Write Content AI Will Reference goes deeper on writing techniques and content patterns.

- How to Improve Visibility in AI Search addresses broader AI search optimization beyond citations.

- AEO Explained: Your Guide for 2026 provides the foundational context for answer engine optimization.

- How to Build AI Visibility from Zero is the complete playbook for brands building their AI presence from scratch.

For industry-specific guidance, see our guide on content types that AI models cite for SaaS.