Perplexity brand tracking is the practice of monitoring how Perplexity AI mentions, recommends, and cites your brand across its search results. Unlike ChatGPT or Gemini, Perplexity functions as a real-time search engine that cites every source it uses. That makes it the only major AI platform where your brand's visibility directly translates to trackable referral traffic.

Perplexity's Publisher Program now shares ad revenue with sites whose content gets cited in answers. Each response typically references 4 to 8 sources with inline citations, and every citation is a clickable link back to the original page. That changes the tracking equation entirely. On Perplexity, a brand mention isn't just visibility. It's a measurable touchpoint.

This guide covers what to track, how to do it manually, when to automate, and how to connect tracking data to content decisions. We'll use a fictional brand called Planway, a mid-market project management tool, to make each concept concrete.

Why Perplexity Tracking Is Different

If you're already tracking visibility across AI platforms, you might wonder why Perplexity needs its own approach. Three things make it fundamentally different from ChatGPT, Claude, or Gemini.

Real-Time Results, Not Static Training Data

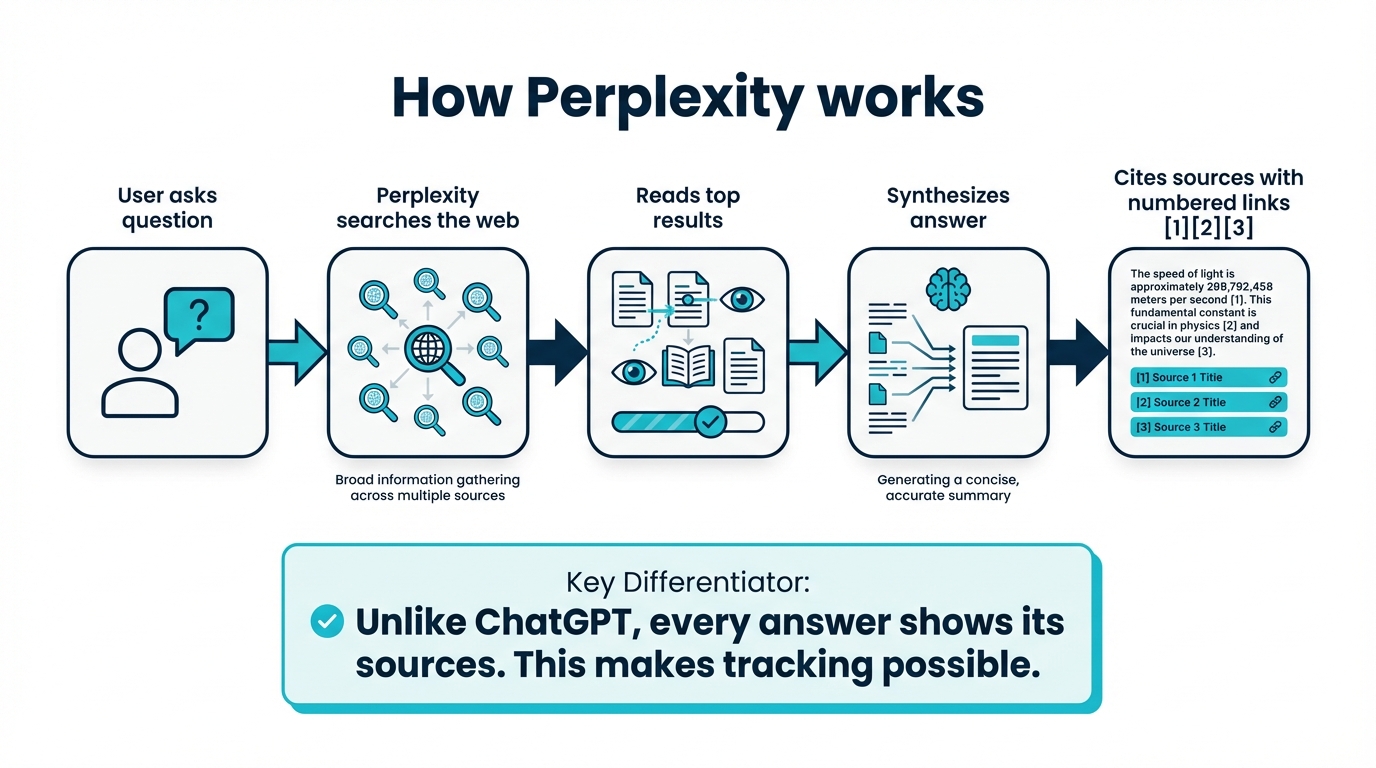

Perplexity crawls the web in real time using PerplexityBot. When someone asks a question, Perplexity runs a live search and retrieves current pages. It then generates its answer from what it finds right now, not from what was in a training dataset months ago.

This means your visibility on Perplexity can change within days of publishing or updating content. It also means competitors can displace you just as quickly. Static, quarterly audits don't work here. You need ongoing tracking to catch shifts as they happen.

Every Answer Cites Its Sources

Perplexity includes numbered inline citations in every response. Users see exactly which pages informed the answer and can click through to the source. No other major AI platform provides this level of transparency.

For tracking purposes, this is significant. You're not just measuring whether your brand was mentioned. You can see whether your specific pages were cited, how often, and alongside which competitors. That's a level of granularity ChatGPT and Gemini don't offer.

Referral Traffic You Can Measure

Because citations link directly to your site, Perplexity generates referral traffic that shows up in your analytics. In Google Analytics 4, open the Traffic acquisition report and filter to sessions whose source contains perplexity.ai.

This makes Perplexity the only AI platform where brand visibility and website traffic are directly connected. On ChatGPT, a brand mention is invisible to your analytics. On Perplexity, it's a click.
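If you export referral data as CSV, a few lines of Python can total Perplexity sessions per landing page. This is a minimal sketch: the column names (`source`, `landing_page`, `sessions`) are assumptions about the export layout, and the sample rows are made up for illustration.

```python
import csv
import io
from collections import defaultdict

def perplexity_sessions_by_page(csv_text: str) -> dict[str, int]:
    """Sum sessions per landing page for rows whose source contains 'perplexity'."""
    totals: dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if "perplexity" in row["source"].lower():
            totals[row["landing_page"]] += int(row["sessions"])
    return dict(totals)

# Made-up sample rows; column names are assumed, not a documented export format.
sample_export = """source,landing_page,sessions
perplexity.ai,/compare/asana,42
google,/compare/asana,310
perplexity.ai,/blog/async-collab,17
www.perplexity.ai,/compare/asana,5
"""

print(perplexity_sessions_by_page(sample_export))
```

Matching on a substring rather than an exact hostname catches variants such as `www.perplexity.ai`, at the cost of possibly matching unrelated sources that happen to contain the word.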

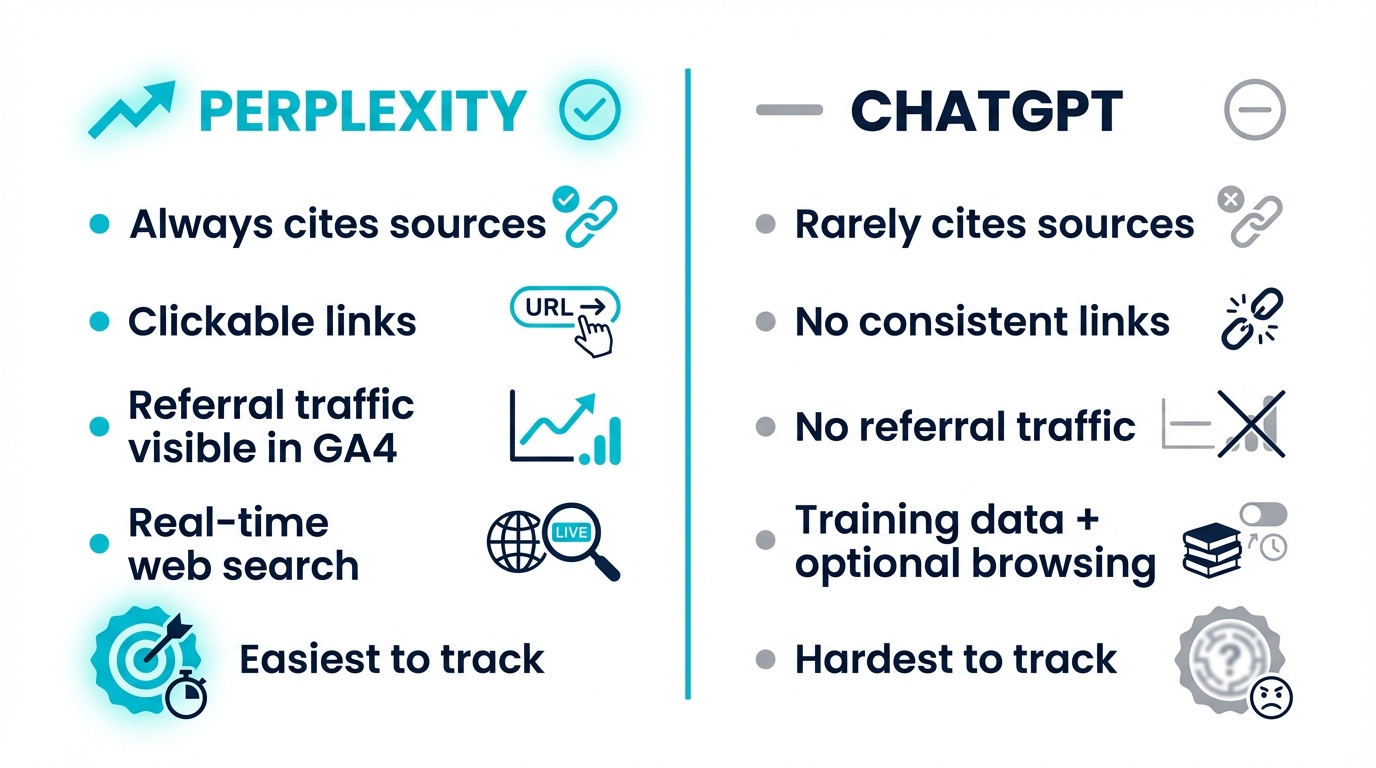

Tracking on Perplexity vs ChatGPT

Because most teams are already familiar with ChatGPT monitoring, it helps to see where Perplexity tracking is fundamentally different:

| Dimension | Perplexity | ChatGPT |
|---|---|---|
| Data source | Real-time web crawl | Static training data + occasional browsing |
| Citations | Inline, clickable, linked to source URLs | Rarely cites sources; no consistent links |
| Referral traffic | Visible in Google Analytics | No referral traffic from mentions |
| Result consistency | Changes frequently as sources update | Relatively stable within model version |
| Tracking granularity | Page-level citation tracking possible | Brand mention only, no URL-level data |
| Tracking cadence needed | Weekly minimum; daily ideal | Monthly is often sufficient |

The key takeaway: Perplexity tracking is more granular and more actionable, but it also requires higher frequency because results shift faster.

What to Track in Perplexity

To make this tangible, here's what tracking looks like for our fictional brand Planway.

Brand Mentions

The most basic signal: does Perplexity mention your brand when users ask relevant questions? Track whether your brand appears in the response text itself, not just in the citations. A brand can be cited as a source without being explicitly recommended in the answer, and vice versa.

For example, when someone asks Perplexity "What are the best project management tools for remote teams?", Planway might appear in the cited sources. Its blog post on async collaboration gets linked, but Planway itself isn't named in the response text. The answer itself recommends Asana, Monday, and ClickUp by name. That's a citation without a mention: valuable for traffic, but it means Perplexity isn't actively recommending the brand.
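The mention/citation distinction can be checked mechanically once you have an answer's text and its list of cited URLs. The sketch below is one possible approach, not a standard method; the function name, the sample answer text, and the competitor URL are illustrative assumptions.

```python
import re
from urllib.parse import urlparse

def classify_visibility(answer_text: str, cited_urls: list[str],
                        brand: str, domain: str) -> dict[str, bool]:
    """Report whether the brand is named in the answer and whether its domain is cited."""
    # Whole-word, case-insensitive match so "Planway" does not match a longer word.
    mentioned = re.search(rf"\b{re.escape(brand)}\b", answer_text, re.IGNORECASE) is not None
    hosts = [(urlparse(u).hostname or "").lower() for u in cited_urls]
    # Accept the bare domain or any subdomain (for example, www.planway.com).
    cited = any(h == domain or h.endswith("." + domain) for h in hosts)
    return {"mentioned": mentioned, "cited": cited}

# Hypothetical answer and sources, modeled on the Planway example.
answer = "For remote teams, Asana, Monday, and ClickUp are strong choices."
sources = ["https://planway.com/blog/async-collab", "https://asana.com/remote"]
result = classify_visibility(answer, sources, brand="Planway", domain="planway.com")
print(result)
```

Here the result flags a citation without a mention, which is the case described above.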

Citation Frequency and Sources

Which of your pages get cited, and how often? This tells you what content Perplexity finds most useful. Track the specific URLs being cited, not just domain-level mentions. A single well-structured comparison page might account for most of your Perplexity citations, while other content gets ignored entirely.

In Planway's case, their comparison page "Planway vs Asana" drives 60% of all their Perplexity citations. Their homepage and product pages are rarely cited. Understanding what types of content AI models prefer to cite helps you interpret these patterns and prioritize what to create or update.
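To see how concentrated your citations are, you can count each cited URL's share of the total. A minimal sketch, assuming you keep a flat log with one entry per observed citation; the log contents below are hypothetical and chosen to reproduce the 60% figure.

```python
from collections import Counter

def citation_share(cited_urls: list[str]) -> dict[str, float]:
    """Return each URL's share of all recorded citations, largest first."""
    counts = Counter(cited_urls)
    total = sum(counts.values())
    return {url: n / total for url, n in counts.most_common()} if total else {}

# Hypothetical log: one entry per citation observed across tracked prompts.
log = (["planway.com/compare/asana"] * 6
       + ["planway.com/blog/async-collab"] * 3
       + ["planway.com/about"])
shares = citation_share(log)
for url, share in shares.items():
    print(f"{url}: {share:.0%}")
```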

Competitor Share of Voice

When your brand appears in a Perplexity answer, who else shows up? Share-of-voice tracking tells you how often competitors are mentioned or cited alongside you, and whether they're being positioned as alternatives, equals, or leaders.
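One way to quantify this is to treat share of voice as the fraction of tracked answers in which a brand appears at least once. The sketch below assumes you have already recorded the set of brands named in each answer; the observations are hypothetical.

```python
from collections import Counter

def share_of_voice(answers: list[set[str]]) -> dict[str, float]:
    """Fraction of answers in which each brand appears at least once."""
    if not answers:
        return {}
    appearances = Counter(brand for brands in answers for brand in brands)
    return {brand: n / len(answers) for brand, n in appearances.most_common()}

# Brands named per answer: hypothetical observations, one set per prompt.
observed = [
    {"Asana", "Monday", "ClickUp"},
    {"Planway", "Asana", "Monday"},
    {"Planway", "Monday", "ClickUp", "Notion"},
    {"Jira", "Linear", "Shortcut"},
]
sov = share_of_voice(observed)
print(f"Planway: {sov['Planway']:.0%}, Monday: {sov['Monday']:.0%}")
```

Using a set per answer means a brand named three times in one response still counts once, so the figure measures presence rather than emphasis.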

Sentiment of Recommendations

Not all mentions are equal. Perplexity might cite your brand neutrally ("Planway offers Gantt charts and time tracking"). Or it might cite with a clear recommendation ("Planway is particularly strong for small teams that need simplicity over enterprise features"). Tracking the tone of how your brand is presented matters as much as whether it appears at all.

Key Prompts to Monitor

The prompts you track determine the quality of your data. Focus on the question types that real users are asking Perplexity:

- Brand definition queries: "What is Planway?" / "What does Planway do?"

- Category comparisons: "Best project management tools" / "Top PM platforms 2026"

- Purchase-intent questions: "Should I switch to Planway?" / "Is Planway worth it for small teams?"

- Alternative searches: "Asana alternatives" / "Monday.com vs Planway"

- Use-case queries: "Best tool for managing remote sprint teams"

These prompt categories map to different stages of the buyer journey. Tracking all five gives you a complete picture of how Perplexity positions your brand from awareness through to purchase decision. You'll reference these categories again when setting up your tracking workflow below.

Manual Tracking (and Why It Breaks Down)

The simplest way to start is running prompts yourself. Open Perplexity, type in the queries from the list above, and record what comes back. Here's what a manual tracking spreadsheet looks like after Planway runs their first 5 prompts:

| Prompt | Planway mentioned? | Planway cited? | Cited URL | Competitors mentioned |
|---|---|---|---|---|
| "Best PM tools for remote teams" | No | Yes | planway.com/blog/async-collab | Asana, Monday, ClickUp |
| "Planway vs Asana" | Yes | Yes | planway.com/compare/asana | Asana, Monday |
| "Asana alternatives" | Yes | No | n/a | Monday, ClickUp, Notion |
| "What is Planway?" | Yes | Yes | planway.com/about | n/a |
| "Best tool for sprint planning" | No | No | n/a | Jira, Linear, Shortcut |

Five prompts, five minutes of work. The picture is immediately useful: Planway is named for brand, comparison, and alternative queries, is cited but not named for the remote-teams category prompt, and is absent entirely from the sprint-planning use-case prompt. That's a clear content gap.
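If you keep the spreadsheet as structured rows, the same summary falls out of a few lines of code. This is a sketch using the five rows from the table; the `TrackingRow` class and its field names are illustrative, not part of any tool.

```python
from dataclasses import dataclass

@dataclass
class TrackingRow:
    prompt: str
    mentioned: bool  # brand named in the answer text
    cited: bool      # a brand-owned page appears in the citations

rows = [
    TrackingRow("Best PM tools for remote teams", mentioned=False, cited=True),
    TrackingRow("Planway vs Asana", mentioned=True, cited=True),
    TrackingRow("Asana alternatives", mentioned=True, cited=False),
    TrackingRow("What is Planway?", mentioned=True, cited=True),
    TrackingRow("Best tool for sprint planning", mentioned=False, cited=False),
]

mention_rate = sum(r.mentioned for r in rows) / len(rows)
citation_rate = sum(r.cited for r in rows) / len(rows)
no_presence = [r.prompt for r in rows if not (r.mentioned or r.cited)]
cited_only = [r.prompt for r in rows if r.cited and not r.mentioned]

print(f"Mention rate: {mention_rate:.0%}, citation rate: {citation_rate:.0%}")
print("No presence:", no_presence)
print("Cited but not named:", cited_only)
```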

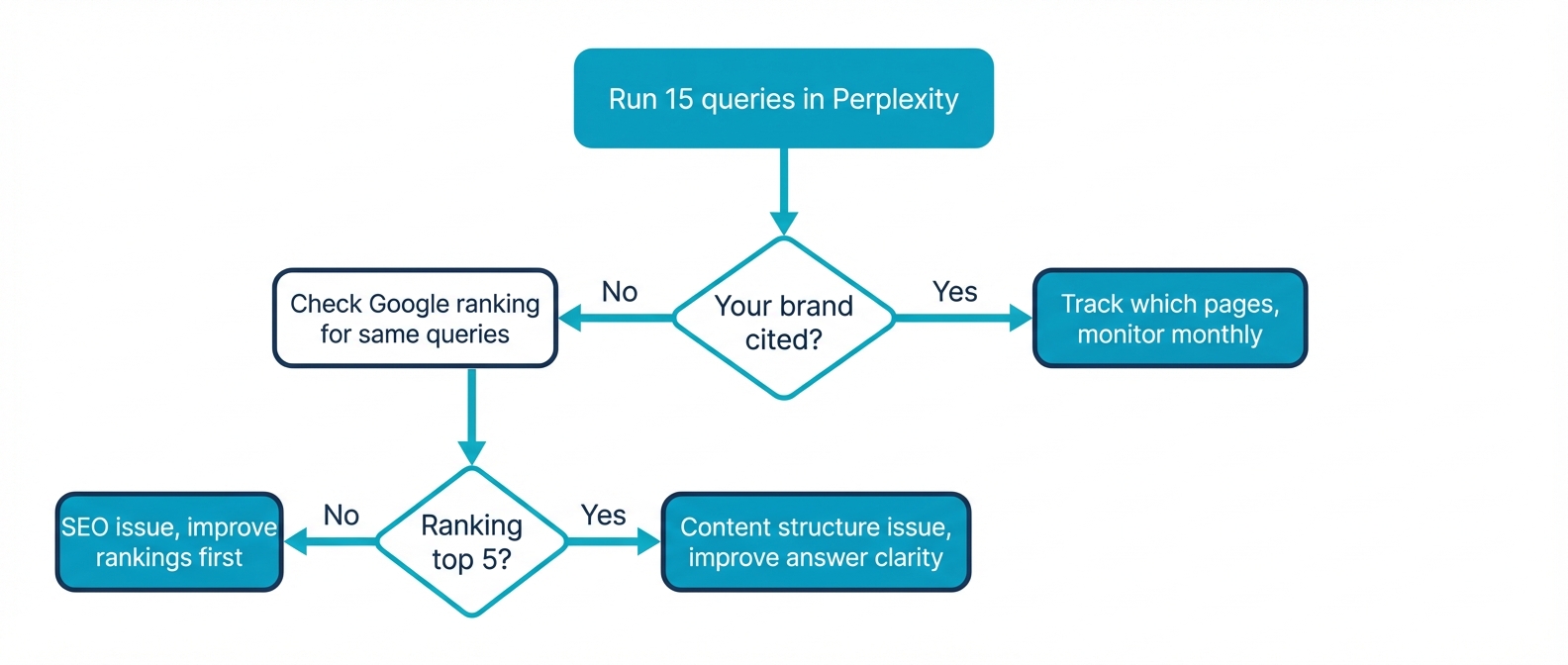

The problem is what happens next. Run this same exercise a week later and the results will be different. Perplexity's real-time crawling means answers shift as sources update. A competitor publishes a new comparison page and suddenly displaces you in "Asana alternatives." You won't know unless you're checking regularly.

And as soon as you want to track 30+ prompts across multiple competitors at weekly cadence, the math stops working. That's 100+ queries per month you'd need to run, record, and compare by hand. Manual tracking gives you the initial snapshot. Sustaining it requires automation.

Automated Tracking Options

Several tools now support Perplexity-specific tracking, but coverage varies significantly. When evaluating options, look for these capabilities:

- Perplexity as a distinct provider, not lumped into a generic "AI search" category

- Citation-level data: which specific URLs are being cited, not just brand mentions

- Historical trend tracking: how your visibility changes over time, not just point-in-time snapshots

- Prompt-level granularity: results broken down per prompt, not aggregated into a single score

- Competitor tracking: share-of-voice data within the same prompts

AI visibility platforms in this space include friction AI, Otterly, and Profound. Each approaches Perplexity tracking differently:

- friction AI tracks Perplexity as a separate provider with citation-level detail, prompt-by-prompt scoring, and built-in competitor benchmarking. Strongest on granularity and multi-platform coverage.

- Otterly offers AI search monitoring with Perplexity support, focused on brand mention tracking and share-of-voice trending. Good for teams that want a simpler dashboard.

- Profound provides AI visibility analytics with Perplexity data alongside other providers. Oriented toward enterprise teams with deeper reporting needs.

Whichever tool you evaluate, check whether it differentiates between a Perplexity citation (clickable, traffic-driving) and a ChatGPT mention (no referral link). Some platforms combine Perplexity data with other AI providers but don't surface Perplexity-specific citations, which defeats the purpose.

The Perplexity API is also an option for teams with engineering resources who want to build custom tracking pipelines.
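A custom pipeline might start from something like the sketch below. Treat every specific here as an assumption to verify against the current API reference: the endpoint URL, the `sonar` model name, the top-level `citations` field in the response, and the `PERPLEXITY_API_KEY` environment variable name. The request builder and response parser are kept separate from the network call so they can be tested offline.

```python
import json
import os
import urllib.request

API_URL = "https://api.perplexity.ai/chat/completions"  # assumed endpoint; check the docs

def build_request(prompt: str, api_key: str, model: str = "sonar") -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for one tracked prompt."""
    body = json.dumps({"model": model, "messages": [{"role": "user", "content": prompt}]})
    return urllib.request.Request(
        API_URL,
        data=body.encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )

def parse_response(payload: dict) -> tuple[str, list[str]]:
    """Extract answer text and cited URLs, tolerating a missing citations field."""
    text = payload["choices"][0]["message"]["content"]
    return text, list(payload.get("citations", []))  # field name is an assumption

def run_prompt(prompt: str) -> tuple[str, list[str]]:
    """Send one prompt. Needs network access and the (assumed) PERPLEXITY_API_KEY variable."""
    req = build_request(prompt, os.environ["PERPLEXITY_API_KEY"])
    with urllib.request.urlopen(req, timeout=60) as resp:
        return parse_response(json.load(resp))
```

Answers returned through an API may not match what users see in the consumer product, so it is worth spot-checking a few prompts by hand before trusting the pipeline's numbers.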

Setting Up a Perplexity Tracking Workflow

Once you've chosen a tracking method, structure your workflow around the prompt categories from the Key Prompts to Monitor section above.

Choose Your Prompts

Start with 15-25 prompts distributed across all five categories. Prioritize the prompts where you expect your brand to appear, and a handful where you're not sure. The gaps are often more valuable than the confirmations.

Planway's initial tracking revealed a gap. They were invisible for use-case queries like "best tool for sprint planning." That wouldn't have surfaced if they had tracked only branded and comparison prompts.
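A quick check can catch a prompt set that skips a category or falls outside the 15-25 range before you start tracking. This is a sketch only; the category keys and the `check_prompt_set` helper are illustrative names, and the starter set reuses example prompts from this guide.

```python
CATEGORIES = {"brand", "comparison", "purchase_intent", "alternative", "use_case"}

def check_prompt_set(prompts: dict[str, list[str]], low: int = 15, high: int = 25) -> list[str]:
    """Return a list of problems with a prompt set; an empty list means it passes."""
    problems = []
    missing = sorted(c for c in CATEGORIES if not prompts.get(c))
    if missing:
        problems.append(f"no prompts for: {', '.join(missing)}")
    total = sum(len(p) for p in prompts.values())
    if not low <= total <= high:
        problems.append(f"{total} prompts; expected {low}-{high}")
    return problems

# A deliberately incomplete starter set: no use-case prompts, too few overall.
starter = {
    "brand": ["What is Planway?", "What does Planway do?"],
    "comparison": ["Best project management tools", "Top PM platforms 2026"],
    "purchase_intent": ["Should I switch to Planway?", "Is Planway worth it for small teams?"],
    "alternative": ["Asana alternatives", "Monday.com vs Planway"],
}
print(check_prompt_set(starter))
```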

Set Your Tracking Frequency

Weekly is the minimum useful cadence for Perplexity. Because results are generated from real-time crawling, daily tracking captures more signal, but weekly still catches the meaningful trends. Avoid monthly: too much changes between snapshots.

Establish Baselines

Before making any content changes, run at least two weeks of tracking to establish your baseline. Note your current mention rate, citation frequency, which pages get cited, and your competitor share of voice. Without a baseline, you can't measure the impact of changes.
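Because answers vary from run to run, a baseline is more robust as a per-prompt rate across several runs than as a single snapshot. A minimal sketch, assuming each run is stored as a mapping from prompt to whether the brand was mentioned; the run data is hypothetical.

```python
from statistics import mean

def baseline_rates(runs: list[dict[str, bool]]) -> dict[str, float]:
    """Per-prompt fraction of runs in which the brand was mentioned."""
    prompts = runs[0].keys()  # assumes every run covers the same prompts
    return {p: mean(1.0 if run[p] else 0.0 for run in runs) for p in prompts}

# Hypothetical results from four runs spread over the two-week baseline period.
runs = [
    {"Asana alternatives": True, "Best tool for sprint planning": False},
    {"Asana alternatives": False, "Best tool for sprint planning": False},
    {"Asana alternatives": True, "Best tool for sprint planning": False},
    {"Asana alternatives": True, "Best tool for sprint planning": False},
]
baseline = baseline_rates(runs)
print(baseline)
```

The same function works for citation flags; keep the two baselines separate so a later change in one is not masked by the other.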

Monitor for Shifts

Watch for three types of movement. First, your brand appearing in prompts where it wasn't before (positive). Second, your brand disappearing from prompts where it was (negative). Third, competitors entering prompts where they weren't previously (competitive threat). Each requires a different response.
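These three movements can be detected by diffing two snapshots. The sketch below assumes each snapshot maps a prompt to the set of brands that appeared in its answer; the `detect_shifts` helper and the sample data are illustrative.

```python
def detect_shifts(previous: dict[str, set[str]], current: dict[str, set[str]],
                  brand: str) -> dict[str, list[str]]:
    """Compare two snapshots mapping prompt -> brands that appeared in the answer."""
    shifts: dict[str, list[str]] = {"gained": [], "lost": [], "new_competitors": []}
    # Only prompts present in both snapshots can be compared.
    for prompt in sorted(previous.keys() & current.keys()):
        before, after = previous[prompt], current[prompt]
        if brand in after and brand not in before:
            shifts["gained"].append(prompt)
        if brand in before and brand not in after:
            shifts["lost"].append(prompt)
        for rival in sorted(after - before - {brand}):
            shifts["new_competitors"].append(f"{prompt}: {rival}")
    return shifts

# Hypothetical snapshots one week apart.
last_week = {
    "Asana alternatives": {"Planway", "Monday", "ClickUp", "Notion"},
    "Best tool for sprint planning": {"Jira", "Linear", "Shortcut"},
}
this_week = {
    "Asana alternatives": {"Monday", "ClickUp", "Notion", "Trello"},
    "Best tool for sprint planning": {"Planway", "Jira", "Linear"},
}
shifts = detect_shifts(last_week, this_week, brand="Planway")
print(shifts)
```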

Turning Tracking Into Action

Tracking is only useful if it informs decisions. Here's how to connect your Perplexity data to content strategy, using Planway's results as an example.

If you're not being cited at all: Planway didn't appear for "best tool for sprint planning" because they had no content specifically about sprint workflows. Start with the fundamentals covered in our guide on how to appear in Perplexity AI. Your content may not be crawlable, specific enough, or structured in a way Perplexity favors.

If you're cited but not recommended: Planway's async collaboration blog post got cited for "best PM tools for remote teams," but the response recommended Asana, Monday, and ClickUp by name instead. The content was being found but wasn't persuasive enough to earn a recommendation. Review how competitors' cited pages are structured and what claims they make. Our Perplexity SEO guide covers the ranking signals that influence how you're positioned.

If competitors are dominating your category prompts: Focus on the citation sources. Which pages are competitors getting cited from? Often it's a single comparison page or data-backed article driving most of their visibility. Create or improve your equivalent content.

If your citations are concentrated on one page: Planway's "Planway vs Asana" page drove 60% of all their citations. That's a single point of failure. If a competitor publishes a stronger comparison, Planway's visibility drops overnight. Build supporting pages for adjacent queries to diversify your citation base.

The most important metric isn't any single data point. It's the trend. Consistent tracking over weeks and months reveals whether your content strategy is working in the context that increasingly matters: what AI tells people about your brand.

Frequently Asked Questions

What metrics should I track for Perplexity visibility?

Track four core metrics. First, brand mentions (whether Perplexity names your brand in the answer text). Second, citation frequency (how often Perplexity cites specific pages from your site). Third, competitor share of voice (how often competitors appear alongside you). Fourth, sentiment of recommendations (neutral description vs active recommendation). Together these give you a full picture of how Perplexity positions your brand.

How do I measure share of voice in Perplexity?

Run the same category and use-case queries across Perplexity for both your brand and named competitors. For each query, record who is mentioned, who is cited, and how each brand is positioned. Over 20-30 queries, share of voice is the percentage of answers in which your brand appears, compared with the same percentage for each competitor. Weekly tracking captures meaningful shifts.

How do I baseline Perplexity brand visibility?

Before making any content changes, run at least two weeks of tracking across 15-25 prompts distributed across brand, comparison, purchase-intent, alternative, and use-case query types. Record your mention rate, citation frequency, which pages get cited, and your competitor share of voice. The baseline is your reference point for measuring the impact of future content changes.

Can I track Perplexity citations in Google Analytics?

Yes, through referral traffic. In Google Analytics 4, filter Acquisition by source/medium containing "perplexity" to see when users clicked through from a Perplexity citation to your site. This reveals which landing pages receive Perplexity traffic. It does not show which prompts led to those clicks, so pair it with prompt-level tracking. Growing perplexity.ai referral traffic is a strong signal that your citations are increasing or earning more clicks.

How often should I track Perplexity brand mentions?

Weekly is the minimum useful cadence because Perplexity runs on real-time web crawling, so results shift as sources update. Daily tracking captures more signal, but weekly catches meaningful trends. Avoid monthly tracking because too much changes between snapshots, and you'll miss competitive displacement.

How do I turn Perplexity tracking data into content decisions?

Match the tracking pattern to the action. If you are not cited for a query, the gap is crawlability, specificity, or structure. If you are cited but not recommended, review how competitors' cited pages are structured. If competitors dominate your category prompts, audit the specific pages driving their citations. If your citations concentrate on one page, diversify by building supporting pages for adjacent queries.