Honey was acquired by PayPal for $4 billion. Ask ChatGPT "What is Honey?" and it tells you about bees.

That's not a bug. It's a brand recognition problem, and it's happening to thousands of brands right now. Most of them have no idea.

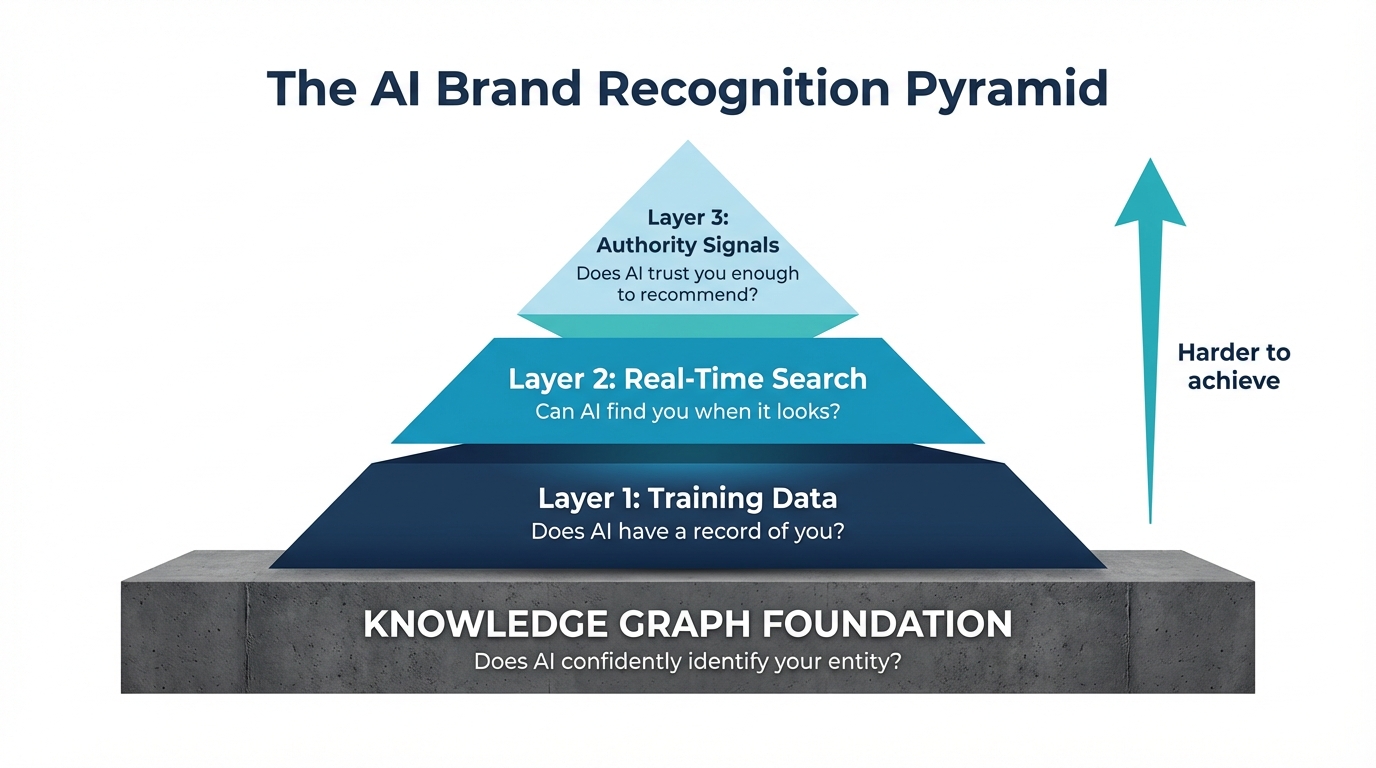

Here's why. AI brand recognition works like a pyramid: three layers, bottom to top, and your brand has to clear each one before it gets recommended to anyone. Most brands don't make it past the base. For the fundamentals, see What is AI Brand Recognition.

The Foundation Beneath the Pyramid: Knowledge Graph Confidence

Before a brand can clear any layer of the pyramid, AI needs to confidently identify it as a distinct entity. This is Knowledge Graph confidence, and it's the foundation everything else sits on.

Google maintains a Knowledge Graph that maps relationships between entities: companies, people, products, places. Every entity gets a confidence score based on how much cross-referential evidence exists across the web. We tested this using Google's Knowledge Graph API across dozens of brands. The results are revealing:

| Brand | KG Confidence Score | What AI Does |
|---|---|---|
| Nike | 25,987 | States facts confidently. Always recommended. |
| Puma | 30,189 | Strong positive framing. Consistently included. |
| Semrush | 5,114 | Recognized as a corporation. Recommended in category. |
| Otterly AI | 1,797 | Identified correctly. Included in comparisons. |
| Writesonic | 1,134 | Recognized. Moderate confidence. |
| AthenaHQ | 217 | Known but descriptions are thin. |
| Peec AI | 12 | Barely exists. No description. AI guesses. |
| Profound | 0 | Not in Knowledge Graph at all. AI improvises. |

Brands scoring below 100 get the hedging treatment: "reportedly," "some users find," "claims to be." Brands above 500 get stated as fact. Above 2,000 and AI recommends them with conviction.
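Those thresholds read like a lookup table, so here is a rough sketch of one. The function name `framing_tier` and the tier labels are invented for this example, and the band between 100 and 500, which the thresholds don't describe, is labelled as a gray zone rather than guessed at.

```python
def framing_tier(score: float) -> str:
    """Map a Knowledge Graph score to the framing tier described in this article.

    The cut-offs (100, 500, 2,000) come from the article's observations.
    The labels and the 100-500 "gray zone" are assumptions for illustration.
    """
    if score > 2000:
        return "recommended with conviction"
    if score > 500:
        return "stated as fact"
    if score < 100:
        return "hedged"
    return "gray zone (not covered by the thresholds)"


# Scores taken from the table in this article.
for brand, score in [("Semrush", 5114), ("Writesonic", 1134), ("AthenaHQ", 217), ("Peec AI", 12)]:
    print(f"{brand}: {framing_tier(score)}")
```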

The gap between AthenaHQ at 217 and Profound at 0 is striking given they compete head-to-head in the same category. For context on where these two tools actually diverge on features, pricing, and fit, see our head-to-head of Profound and AthenaHQ.

The score isn't something you set directly. It's built from cross-referential signals: your Wikidata entry, Organization schema on your website, Google Business Profile, Bing Places listing, Crunchbase profile, and review platform presence. Each source that independently confirms the same facts about your brand increases the score.

If your Knowledge Graph confidence is low, everything in the pyramid above it becomes unreliable. Training data mentions might exist but AI can't confidently attribute them to you. Search results might include your pages but AI can't verify you're the right entity. Authority signals exist but AI discounts them because the entity isn't resolved.

Fix the foundation first. Everything else compounds from there.

Layer 1: Training Data (The Base)

The base of the pyramid is what the model already knows. Whatever it absorbed during training.

And here's the thing most people get wrong: LLMs are not trained on the entire internet. They're trained on a curated slice of it, filtered for quality and weighted by source authority. Getting into that slice is harder than it sounds.

How often your brand gets mentioned across the web matters a lot. Not on your own site. On other people's. One brand page sitting on your domain doesn't register. Hundreds of mentions scattered across different sites do.

Where those mentions live matters even more. TechCrunch, Forbes, a G2 comparison page. Those carry real weight. A random blog post? Not so much. Training pipelines favor high-authority sources because they tend to be more accurate.

Wikipedia is a big one, and it surprises people. It's among the most heavily weighted sources in LLM training data. If you don't have a Wikipedia page, you're at a disadvantage. And Wikipedia has strict notability rules. Most startups and mid-market companies simply don't qualify.

Then there's the question of who's saying what about you. Your own website says you're great. AI expects that and discounts it. What moves the needle is when others say it too. Reviews, case studies, news articles, analyst mentions, comparison pieces.

Every model also has a training data cutoff. If your brand launched or hit its stride after that date, the model has no record of you. You simply don't exist in its world.

And if your brand name happens to be a common English word? Good luck. Copper, Honey, Grain, Pitch, Notion. The model has to decide what you mean. Without overwhelming signal pointing to "Copper the CRM," it goes with copper the metal. Every time. That's exactly what happens with Honey. A $4 billion acquisition, and the model still thinks you're asking about something bees make.

Training data favors established brands with broad, authoritative web presence. If you're newer, smaller, or stuck with a generic name, you're already behind before the conversation starts.

Layer 2: Real-Time Search (The Backup)

Modern AI doesn't stop at training data. ChatGPT, Perplexity, and Gemini can all search the web in real time to fill in gaps.

So if they can search, why are brands still invisible?

Because the search is way shallower than people assume.

We looked at a sample of ChatGPT responses with web search turned on. The pattern was clear: it typically ran 15+ searches per response but only grabbed about one source from each. Wide net, shallow pull. It takes the top result, extracts what it needs, and moves on.

Even responses that cited 40+ sources were averaging fewer than two results per query.

If you're not near the top for the queries that matter, AI probably won't find you. Even when it's actively looking. It's not going to page two. It's barely getting past the first result.

This is where SEO starts to matter for AI visibility. But ranking alone won't save you. Even if AI finds you, there's still one more filter to clear.

Layer 3: Authority Signals (The Final Filter)

Say AI finds you. You made it past training data. You showed up in a search. Now the model has to decide: do I actually recommend this brand?

It doesn't just list everything it finds. It weighs options. Models are built to favor credible, well-validated sources and ignore noise. Brands with weak authority signals get quietly dropped.

What actually builds authority in AI's eyes? Third-party validation. Reviews on G2, Capterra, Trustpilot. Showing up in "best of" lists and comparison articles. Getting cited by industry publications. That kind of presence tells the model: this brand is real, other people vouch for it.

Consistency matters too. If your messaging is one thing on your website and something slightly different on a review profile and something else again in a press mention, the model reads that as uncertainty. And uncertainty is risk. Risk means it picks someone else.

Recency is another factor. If the most recent thing the model can find about you is from 2023, you start to look like you might not be around anymore. And context has to match. A CRM showing up in a "best CRMs for small business" article is a strong signal. That same CRM mentioned once in a random forum thread? That's noise.

On the other hand, your own website saying you're number one in your category? AI doesn't care. A press release from two years ago, a few social media posts, paid placements with no editorial substance behind them? None of that moves the needle.

Bottom line: AI might find you, but if the signals are weak, it'll go with the safer pick. That may be your competitor.

Consistency: How You Know the Pyramid Is Working

You can have a strong Knowledge Graph entry, solid training data presence, good search rankings, and authoritative third-party coverage, and still see AI platforms tell different stories about your brand. ChatGPT calls you "a brand monitoring tool." Gemini says "an SEO analytics platform." Perplexity describes you as "an AI visibility tracker." All three know you. All three trust you enough to mention you. But they're drawing from different sources with slightly different descriptions, and the output is inconsistent.

Consistency across AI platforms isn't a fourth layer. It's the outcome of getting all three layers right, built on a solid Knowledge Graph foundation. When your entity signals, training data, and authority signals all reinforce the same narrative, AI platforms converge on the same description. When any layer has contradictions (your G2 profile says one thing, your homepage says another, your Crunchbase says a third), the models each pick a different source and the output diverges.

This is measurable. Run the same category query across ChatGPT, Gemini, Perplexity, and Claude. If they describe your brand consistently, in the same category, with similar framing, your pyramid is working. If each platform tells a different story, one or more layers has a contradiction that needs fixing.

The most common cause of inconsistency is mismatched descriptions across platforms. Your homepage says "AI visibility platform." G2 says "brand monitoring software." A press release says "AEO analytics tool." Each description is technically correct, but the inconsistency gives AI models permission to pick whichever framing they encounter first. The fix is boring but effective: choose one canonical description and use it everywhere.
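If you want a number for "how different are these descriptions," one crude option is word overlap. The sketch below uses Jaccard similarity over lowercase word sets, averaged across every pair of platforms. It's an assumption-heavy stand-in, not how any AI platform works: the function names are made up, and word overlap ignores synonyms entirely.

```python
import re
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercase word set for a description."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Shared words divided by total distinct words (1.0 = identical word sets)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def consistency_score(descriptions: dict[str, str]) -> float:
    """Average pairwise Jaccard similarity across platforms."""
    pairs = list(combinations(descriptions.values(), 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# The three descriptions from the example earlier in this section.
observed = {
    "ChatGPT": "a brand monitoring tool",
    "Gemini": "an SEO analytics platform",
    "Perplexity": "an AI visibility tracker",
}
print(f"consistency: {consistency_score(observed):.2f}")
```

A score near 1.0 means the platforms are using nearly the same words for you. A score near 0 means each one picked a different framing, which is the cue to go hunting for mismatched descriptions.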

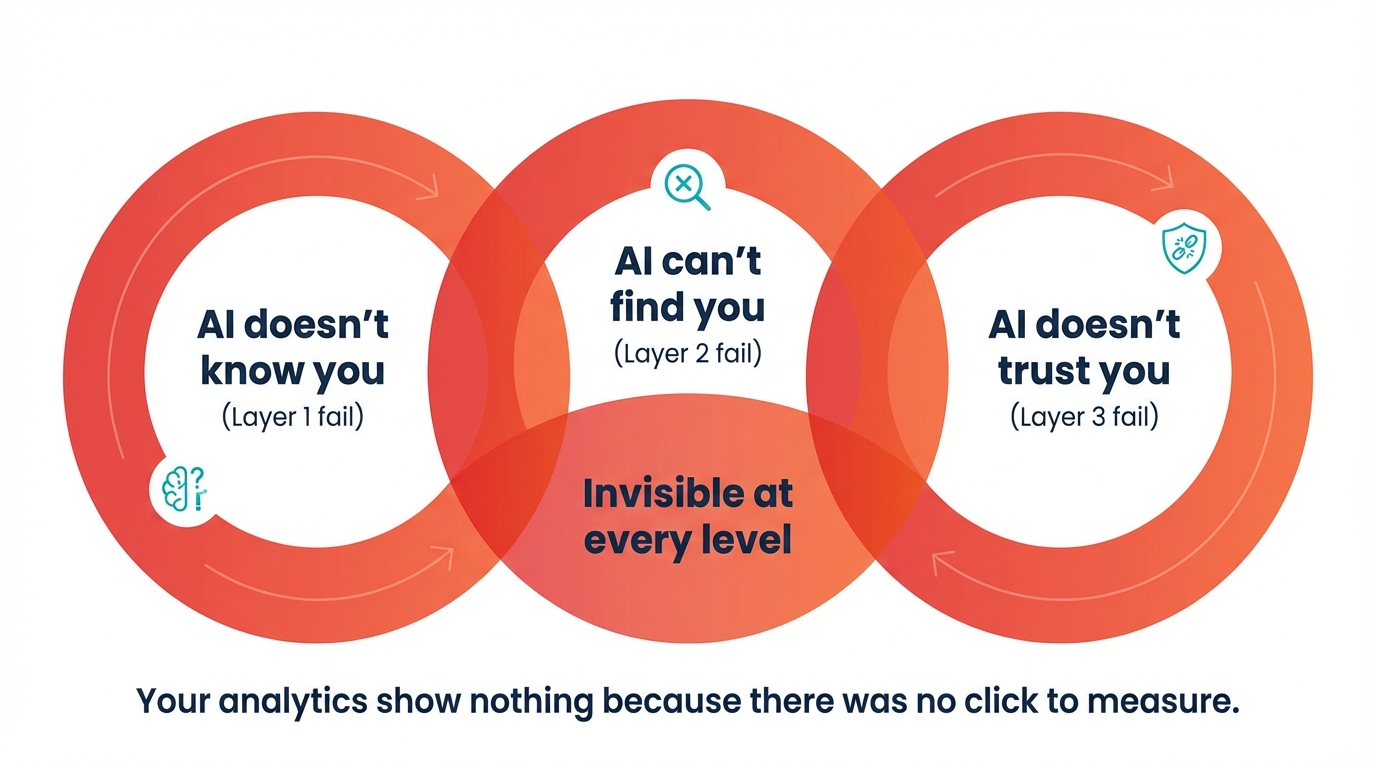

The Triple Penalty

This is what makes it feel so unfair for newer and smaller brands. You're not just losing at one layer. You're losing at all three.

AI doesn't know you. AI can't find you. And even if it could, it doesn't trust you enough to recommend you.

You're invisible at every level. And nothing in your analytics is going to tell you that.

What About SEO?

The obvious reaction to all of this: "So I need better SEO?"

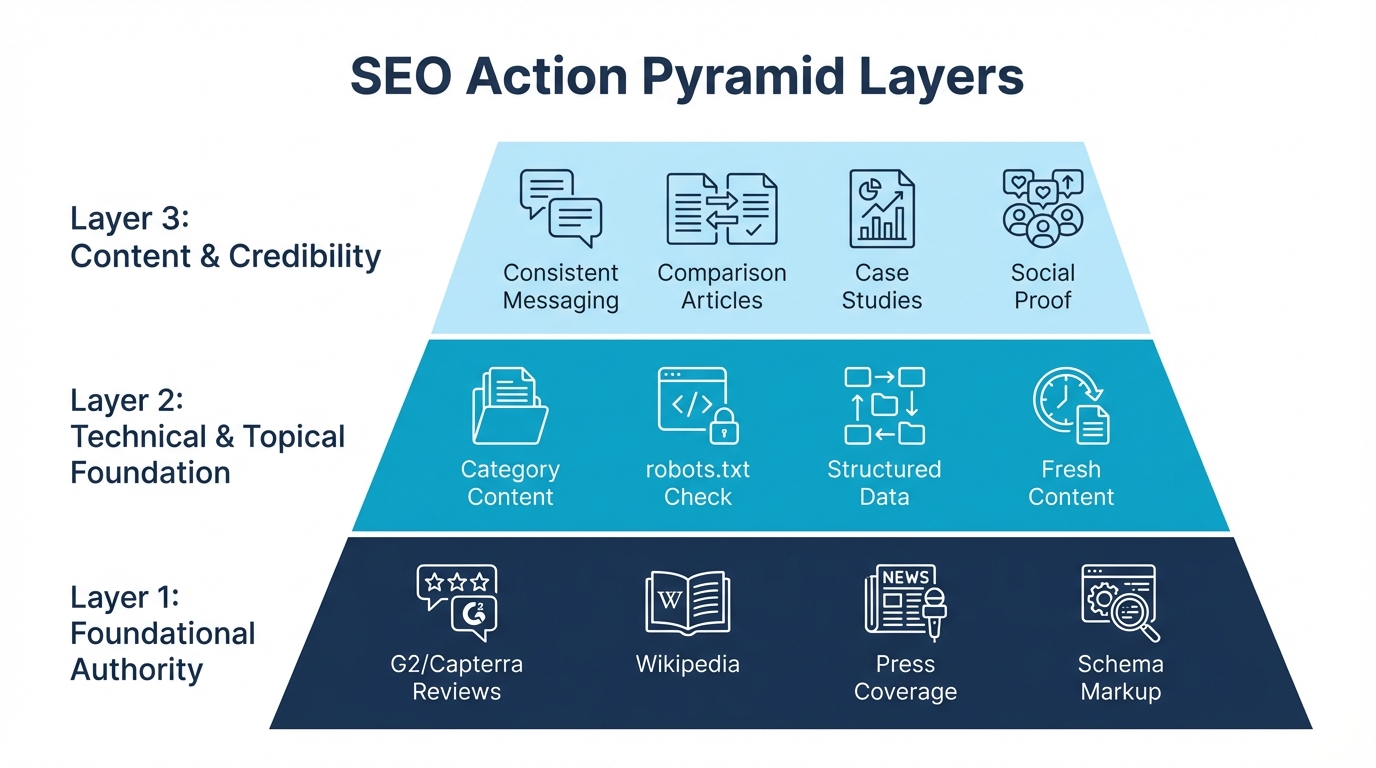

SEO helps. It gets you ranking for keywords, which matters for Layer 2. It builds backlinks that act as authority signals, which helps with Layer 3. And over time, it grows your web presence, which feeds into future training data.

But there are things SEO simply can't fix.

It can't fix training data lag. Whatever you do today won't change what's already baked into the model. Training data refreshes happen on the provider's schedule, not yours. Could be months.

It can't solve the common word problem. You can rank #1 for "Copper CRM" and ChatGPT will still default to the metal when someone just asks "What is Copper?"

It can't guarantee AI recommends you. You can rank well on Google and still not be the brand AI mentions. Different system, different logic.

And it can't tell you whether any of this is working. SEO tools show your Google rankings. They don't show what happens when someone asks an AI chatbot about your category.

SEO is an input. AI visibility is the output. You can nail the first and still be invisible in the second, and you'd never know.

For a focused guide on each tactic, see How to Improve Your Brand Recognition in AI.

What Actually Works

If your brand is struggling with AI recognition, there are real things you can do. They map directly to the three layers, and none of them require you to wait around for the next training data refresh.

Start with the Knowledge Graph foundation. Create a Wikidata entry for your company with your official name, category, founding date, and website. Add Organization schema to your homepage with sameAs links pointing to Wikidata, Crunchbase, LinkedIn, and G2. Claim your Google Business Profile and Bing Places listing. These four actions take under two hours combined and establish the entity base that everything else builds on.
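For the schema step, here's a minimal sketch that builds the Organization JSON-LD in Python and prints it as a script tag you could paste into your homepage. The company name, URLs, founding date, and the Wikidata Q-ID are all placeholders, and the helper name `organization_jsonld` is made up for this example. The property names (`name`, `url`, `sameAs`, `foundingDate`) are standard Schema.org Organization properties.

```python
import json


def organization_jsonld(name, url, same_as, founding_date=None):
    """Return Organization markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    if founding_date:
        data["foundingDate"] = founding_date
    return json.dumps(data, indent=2)


# Every value below is a placeholder; swap in your own profiles.
markup = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=[
        "https://www.wikidata.org/wiki/Q00000000",
        "https://www.crunchbase.com/organization/example-co",
        "https://www.linkedin.com/company/example-co",
        "https://www.g2.com/products/example-co",
    ],
    founding_date="2021-01-01",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The point of `sameAs` is that every profile it lists should state the same name, category, and description as your homepage, so each one confirms the others.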

Then build your web presence. Get on review platforms. Claim your G2, Capterra, Trustpilot profiles. Fill them out, collect reviews, stay active on them. These platforms are heavily represented in training data. Then work on earning editorial coverage. Pitch journalists, write guest posts for industry publications, get included in roundups and comparison pieces. One mention in TechCrunch does more than fifty posts on your own blog. If you're not Wikipedia-eligible today, start working toward it. Notability requires sustained coverage in independent, reliable sources. It's a long game, but it's worth playing. And create content that other people actually want to reference. Original research, benchmarks, data reports. When other sites cite your work, that's the kind of third-party signal training data rewards.

For search visibility, focus on category queries, not just branded terms. "Best [your category]," "top [your category] tools," "[competitor] alternatives." Those are the exact queries AI runs when someone asks a buying question. This only gets more important: Gartner predicts search engine volume will drop 25% by 2026 as AI answers absorb more queries. Check your robots.txt while you're at it. Make sure you're not accidentally blocking GPTBot, ClaudeBot, PerplexityBot, or Google-Extended. Some brands do this without realizing. Keep your key pages updated. Recency matters for both traditional search and AI retrieval. And use structured data (see Schema.org documentation). JSON-LD schema markup like Organization, Product, and FAQPage helps AI models parse your content more accurately.
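The robots.txt check is easy to script. This sketch uses Python's standard-library `urllib.robotparser` to test the four crawler tokens named above against a robots.txt file. The sample file and the `blocked_ai_crawlers` helper are invented for illustration, and Python's matching rules are only an approximation of how each crawler actually interprets the file.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://www.example.com/") -> list[str]:
    """Return the AI crawler tokens that this robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(sample_robots))
```

To run it against a live site, fetch your own `/robots.txt` and pass the text in. An empty list means none of the four are blocked for that URL.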

For authority, the biggest thing is consistency. Same positioning, same value props, same messaging everywhere. Your site, review profiles, social presence, any third-party coverage. Inconsistency kills trust with AI. Get into comparison content. "X vs Y" articles, "best tools for [use case]" lists, analyst reports. That's where AI looks when it's deciding who to recommend. Invest in real case studies with named customers and specific outcomes, not generic testimonials. And stay active. Brands that go quiet for a few months start to look dead.

And monitor what's actually happening. Ask ChatGPT, Perplexity, Claude, and Gemini about your category. Regularly. See who gets recommended. See if you even come up. Track it over time, because a single check only tells you where you are today. And compare across models. Different models have different training data and different retrieval behavior. You might be visible in one and completely absent from another.

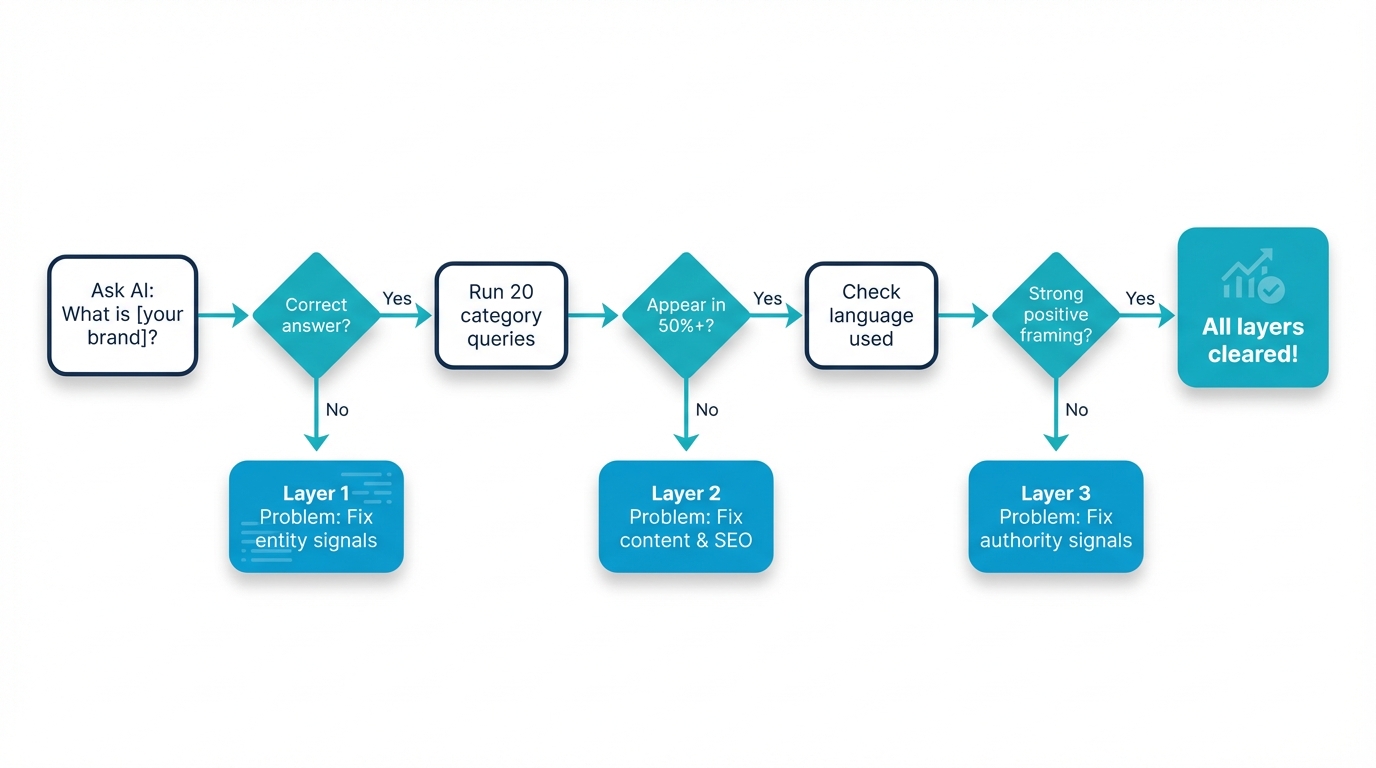

How to Diagnose Which Layer You're Stuck At

The pyramid is only useful if you know where you stand. Here's a diagnostic you can run in 30 minutes.

Foundation Diagnostic: Knowledge Graph

Before checking the three layers, check the foundation. Search for your brand on Google's Knowledge Graph Search API (or simply Google your brand name and look for a Knowledge Panel on the right side of the results).

If a Knowledge Panel appears with your correct name, category, and description, your KG foundation is solid. If no panel appears, or if it shows the wrong entity (a common problem for brands with common-word names), your foundation needs work before anything else.
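If you'd rather script the lookup than eyeball a Knowledge Panel, here's a sketch against the Knowledge Graph Search API's `entities:search` endpoint. It assumes you have your own API key. The helper names are made up, and the sample payload is hand-written in the API's response shape with placeholder values, not real results.

```python
import json
import urllib.parse
import urllib.request

KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def build_kg_url(brand: str, api_key: str, limit: int = 5) -> str:
    """Build the request URL for a brand-name lookup."""
    params = urllib.parse.urlencode({"query": brand, "key": api_key, "limit": limit})
    return f"{KG_ENDPOINT}?{params}"


def extract_scores(payload: dict) -> list[tuple[str, str, float]]:
    """Return (name, description, resultScore) for each entity in a response."""
    rows = []
    for item in payload.get("itemListElement", []):
        result = item.get("result", {})
        rows.append((result.get("name", ""), result.get("description", ""), item.get("resultScore", 0.0)))
    return rows


def lookup(brand: str, api_key: str) -> list[tuple[str, str, float]]:
    """Call the API. Needs network access and a valid key."""
    with urllib.request.urlopen(build_kg_url(brand, api_key)) as resp:
        return extract_scores(json.load(resp))


# Offline example: placeholder payload, NOT real API output.
sample = {
    "itemListElement": [
        {"result": {"name": "Example Co", "description": "Software company"}, "resultScore": 250.0},
        {"result": {"name": "Example", "description": "Common word"}, "resultScore": 900.0},
    ]
}
for name, description, score in extract_scores(sample):
    print(f"{name} ({description}): {score}")
```

An empty result list means no entity matched. If a different entity with your name outscores you, that's the common-word problem showing up in the data.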

Foundation checklist: Do you have a Wikidata entry with your Q-ID? Does your homepage have Organization schema with sameAs links to Wikidata, Crunchbase, and LinkedIn? Do you have a verified Google Business Profile? Is your Bing Places listing active? If most answers are no, start here. No amount of content or authority building will compensate for an unresolved entity.

Layer 1 Diagnostic: Training Data

Open ChatGPT, Claude, and Gemini in fresh sessions (no prior context). Ask each: "What is [your brand]?"

If the response is accurate, detailed, and places you in the right category, your training data signal is strong. If it's vague, wrong, or confused with another entity, you have a Layer 1 problem.

Layer 1 checklist: Do you have a Wikipedia page? Are you mentioned on 50+ authoritative third-party domains? Has your brand been covered by industry publications? Do your G2/Capterra profiles have 20+ reviews? If most answers are no, Layer 1 is your bottleneck and everything else is premature.

Layer 2 Diagnostic: Real-Time Search

Ask ChatGPT (with browsing enabled) and Perplexity 15-20 category-level queries: "best [your category] tools," "top [your category] for [use case]," "how to solve [problem in your space]."

If you appear in most results, Layer 2 is cleared. If you appear sporadically or not at all, check your Google rankings for these queries. If you're not ranking in the top 5, AI search won't find you either. This is where SEO directly and measurably impacts AI visibility.

Layer 2 checklist: Are you ranking top 5 on Google for 10+ category queries? Do you have content covering at least 15 distinct subtopics in your category? Are your pages structured with clear headings, factual claims, and direct answers that models can extract?

Layer 3 Diagnostic: Authority Signals

For queries where you do appear, examine the language carefully. Is your brand described as "a solid option," "a popular choice," or "a leading platform"? The strength of the language directly reflects your authority signals.

Watch for hedging: Phrases like "some users find" or "it may be worth considering" signal low authority confidence. Strong authority produces language like "widely regarded," "consistently recommended," or "a top choice."
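Reading dozens of responses by hand gets old fast, so a simple phrase check can do a first pass. The sketch below uses only the phrases quoted in this article. The lists are nowhere near exhaustive, and the `framing` function and its labels are assumptions for illustration.

```python
# Phrases quoted in this article; extend both lists with what you actually see.
HEDGING = ["reportedly", "some users find", "claims to be", "it may be worth considering"]
STRONG = ["widely regarded", "consistently recommended", "a top choice"]


def framing(response: str) -> str:
    """Label a response as 'strong', 'hedged', 'mixed', or 'neutral'."""
    text = response.lower()
    hedged = any(phrase in text for phrase in HEDGING)
    strong = any(phrase in text for phrase in STRONG)
    if strong and hedged:
        return "mixed"
    if strong:
        return "strong"
    if hedged:
        return "hedged"
    return "neutral"


# Made-up responses about a placeholder brand.
print(framing("Example Co is widely regarded as a top choice for small teams."))
print(framing("Some users find Example Co helpful for basic tracking."))
```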

Layer 3 checklist: Do you have 50+ reviews on G2/Capterra? Have you been featured in analyst reports? Is your messaging consistent across website, reviews, press, and partner pages? Do third-party sources independently validate your positioning?

Reading Your Results

Failing the Foundation diagnostic means all other optimization is premature. Fix your Knowledge Graph presence first: Wikidata entry, Organization schema, Google Business Profile, Bing Places. Without a resolved entity, Layers 1-3 produce inconsistent results.

Failing Layer 1 (with a solid foundation) means your web presence is too thin. Fix entity recognition and build third-party coverage. Without it, SEO improvements and content investments get wasted on a brand the model can't reliably identify.

Passing Layer 1 but failing Layer 2 means your content and SEO strategy need work. You exist in the model's knowledge, but you're not discoverable through real-time search when it matters.

Passing Layers 1 and 2 but underperforming at Layer 3 means you need more third-party validation, review depth, and messaging consistency. You're showing up but not being chosen.

Measuring Progress Over Time

Run the diagnostic monthly. Track four numbers:

Knowledge Graph confidence score: Query Google's Knowledge Graph Search API for your brand name. Track the resultScore over time. Target: appearing with a score above 100 within 3 months. Above 500 means AI platforms will describe you with confident language rather than hedging.

Entity accuracy rate: Percentage of direct brand queries where AI correctly identifies you across all platforms. This measures Layer 1. Target: 90%+ correct across ChatGPT, Claude, and Gemini.

Category mention rate: Percentage of category-level queries where your brand appears. This measures Layer 2. Calculate separately per platform. Target: 40%+ for your primary category.

Positive framing rate: Percentage of appearances where you're framed with strong positive language. This measures Layer 3. Target: trending upward month-over-month.
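The three rates above are simple percentages once you log each query. Here's a sketch of that bookkeeping. The `Observation` record, its field names, and the sample log are all invented for this example, and positive framing is counted over category-query appearances only, which is one reading of the definition above.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    platform: str
    query_type: str               # "brand" (direct) or "category"
    mentioned: bool               # did the brand appear in the response?
    correct_entity: bool = False  # brand queries: was it identified correctly?
    strong_framing: bool = False  # appearances: strong positive language?


def rate(hits: int, total: int) -> float:
    """Percentage, or 0.0 when there is nothing to count."""
    return 100.0 * hits / total if total else 0.0


def monthly_metrics(observations: list[Observation]) -> dict[str, float]:
    brand = [o for o in observations if o.query_type == "brand"]
    category = [o for o in observations if o.query_type == "category"]
    appearances = [o for o in category if o.mentioned]
    return {
        "entity_accuracy": rate(sum(o.correct_entity for o in brand), len(brand)),
        "category_mention": rate(sum(o.mentioned for o in category), len(category)),
        "positive_framing": rate(sum(o.strong_framing for o in appearances), len(appearances)),
    }


def category_mention_by_platform(observations: list[Observation]) -> dict[str, float]:
    """Category mention rate calculated separately per platform."""
    category = [o for o in observations if o.query_type == "category"]
    result = {}
    for platform in sorted({o.platform for o in category}):
        rows = [o for o in category if o.platform == platform]
        result[platform] = rate(sum(o.mentioned for o in rows), len(rows))
    return result


# Made-up log for one month.
log = [
    Observation("ChatGPT", "brand", True, correct_entity=True),
    Observation("Claude", "brand", True, correct_entity=False),
    Observation("ChatGPT", "category", True, strong_framing=True),
    Observation("ChatGPT", "category", False),
    Observation("Perplexity", "category", True),
    Observation("Perplexity", "category", True, strong_framing=True),
]
print(monthly_metrics(log))
print(category_mention_by_platform(log))
```

Save each month's output next to the Knowledge Graph score and you have the four numbers to compare over time.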

Three months of data reveals real patterns. A single snapshot tells you where you are. Three snapshots tell you whether your efforts are working and at which layer.

Related Guides: AI Brand Recognition

The pyramid gives you the framework. These guides go deeper on each aspect:

The fundamentals: What Is AI Brand Recognition? covers the definition and why it matters for businesses navigating AI-driven discovery.

Practical improvement: How to Improve Your AI Brand Recognition: A Step-by-Step Guide (2026) provides a tactical roadmap for strengthening your signal at each layer of the pyramid.

Why brands get confused: Why AI Models Get Brands Wrong examines the technical mechanisms behind brand misidentification and entity confusion in LLMs.

Entity theory applied: Entity SEO for AI: How Entity Theory Drives AI Visibility explores how knowledge graph concepts translate to practical AI visibility work.

Tools landscape: AI visibility tools compared across 4 categories covers the dedicated AEO platforms, SEO suites with AI features, brand monitoring tools, and DIY approaches available today.

The Window Is Open

Most brands aren't thinking about any of this yet.

That's the opportunity.

The ones that start now, building authority on review platforms, earning real editorial coverage, making their sites AI-friendly, and actually paying attention to how AI sees them, are going to compound that advantage over time. Not because any one thing is a silver bullet, but because this kind of presence stacks. And once you've built it, it's hard for anyone to catch up.

The brands that wait until AI search goes fully mainstream will be starting from zero in a channel their competitors have been quietly building in for years.

You don't need to overhaul everything. You just need to add a layer to what you're already doing. And you need to start watching a channel that most of your competitors are completely ignoring.

This is what we built friction AI to solve. We monitor how your brand shows up across ChatGPT, Perplexity, Gemini, Claude, and Grok, tracking visibility, sentiment, purchase intent, and brand recognition across all of them. Because the first step to fixing AI invisibility is seeing it.