Your brand has a reputation in AI that nobody on your team is tracking.

Every day, ChatGPT, Perplexity, Gemini, and Google AI Overviews answer thousands of questions about your industry. Some of those answers mention your brand. Some recommend your competitors instead. Some get basic facts about your company wrong. And unless you have a monitoring system in place, you have no idea which of these is happening.

This is not a theoretical problem. ChatGPT has 900M weekly active users as of OpenAI's February 2026 announcement, and 80% of consumers now use AI-generated results in their search process. When Gartner predicts a 25% drop in traditional search volume by 2026, the implication is clear. AI answers are becoming a primary channel for brand perception. You need visibility into what that channel is saying.

The risk is already showing up in corporate disclosures. Harvard Law School's Corporate Governance Forum found that 191 S&P 500 companies disclosed AI reputation risks in 2025, up from 31 in 2023, roughly a sixfold increase in two years. And the risk varies by platform: BrightEdge data reveals that Google AI Overviews are 44% more likely to criticize brands than ChatGPT. If you are only monitoring ChatGPT, you are seeing the friendlier version of your brand's AI presence.

AI brand monitoring is the practice of systematically tracking how AI models mention, describe, recommend, and cite your brand across platforms. It is to AI what social listening is to social media, but with fundamentally different mechanics and challenges.

Why Traditional Monitoring Misses AI

Your existing brand monitoring stack almost certainly has a blind spot. Social listening tools track Twitter, Reddit, and news mentions. SEO tools track Google rankings and backlinks. Neither tracks what happens inside an AI-generated answer.

When a potential customer asks Perplexity "what's the best project management tool for remote teams?" and your brand doesn't appear, no analytics platform will flag it. When ChatGPT describes your product incorrectly to a prospect, your social listening dashboard stays silent. When a competitor starts appearing in AI recommendations for queries you should own, your SEO tool won't notice.

AI-generated answers exist in a space between search and social. They synthesize information from the web but present it as a single, authoritative response. The user never visits your website, never clicks a link, and never leaves a trackable footprint. The entire brand interaction happens inside a conversation you can't see unless you're actively monitoring it.

What AI Brand Monitoring Tracks

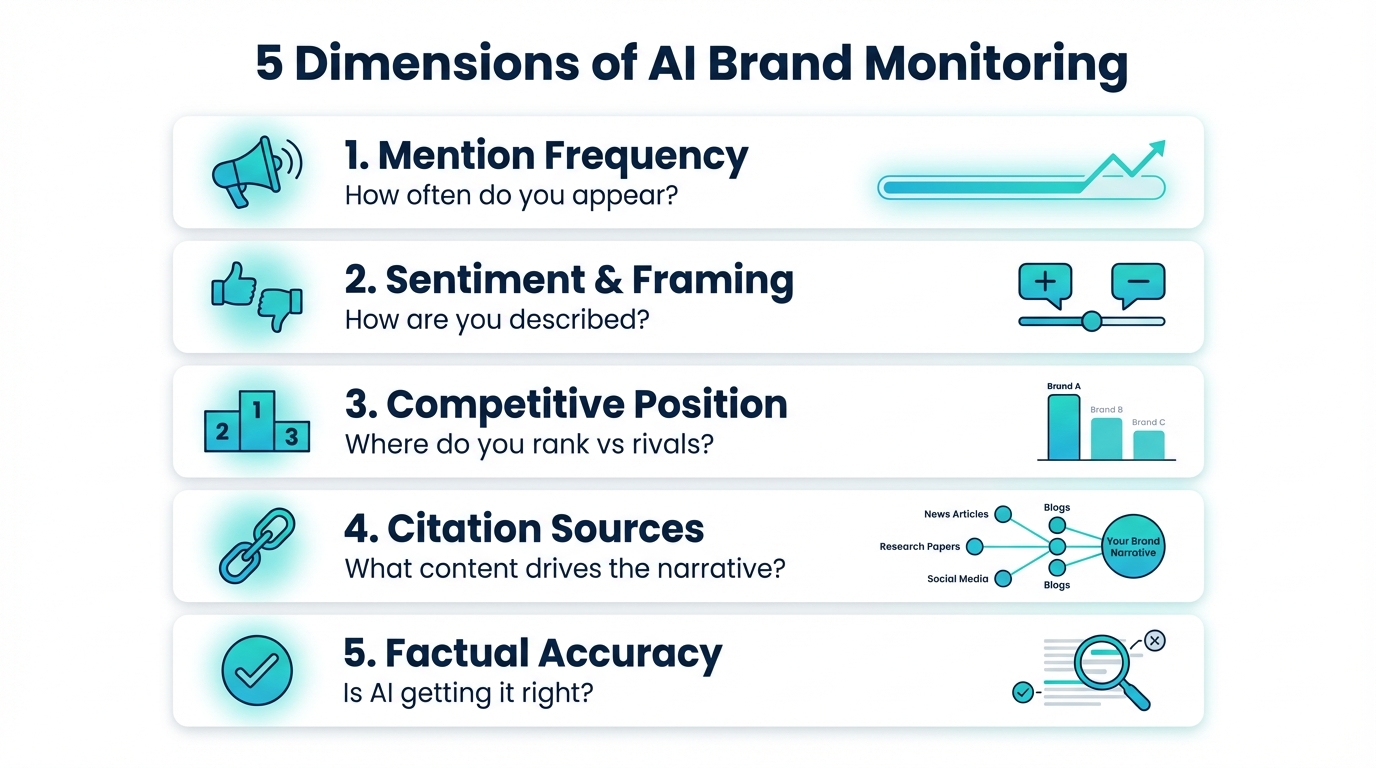

A comprehensive monitoring practice covers five dimensions of your brand's AI presence.

Mention frequency. How often does your brand appear when users ask questions in your category? SparkToro's research found less than a 1-in-100 chance that any two AI responses will contain the same brand list, which means you need volume to establish patterns. A single query tells you nothing. Fifty queries across different phrasings tell you whether your brand has consistent presence or appears randomly.

Sentiment and framing. What does AI say about you when it does mention your brand? There is a meaningful difference between "Brand X is a popular option" and "Brand X is widely regarded as the leader in this category." Monitoring sentiment means tracking not just whether you appear, but how you're described. Are you positioned as premium or budget? Innovative or established? A recommendation or a cautionary mention?

Competitive position. AI answers don't exist in isolation. When a model recommends your brand, it typically names 3-5 others alongside you. Tracking your competitive position means knowing which brands appear with you, how often competitors show up without you, and whether your relative position is improving or declining over time.

Citation sources. When AI models cite sources to support their answers, those citations reveal what content is driving the narrative about your brand. An analysis of 23,000 LLM citations found that 48% came from earned media while only 23% came from owned content. Knowing which sources AI pulls from when discussing your brand tells you where to focus your content and PR efforts.

Factual accuracy. AI models get facts wrong. They confuse product features, misattribute capabilities, cite outdated pricing, and sometimes blend your brand's attributes with a competitor's. Monitoring for accuracy means catching these errors before your customers encounter them.
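To put numbers on mention frequency and competitive position, you first need many collected responses. The Python sketch below assumes you have already extracted the brand names from each AI answer into a set. The brand names and sample lists are hypothetical. It computes three things: how often each brand appears, how similar the brand lists are from run to run, and which competitors appear in answers that leave you out. Run-to-run similarity uses Jaccard similarity, which is our choice of measure rather than SparkToro's methodology.

```python
from collections import Counter
from itertools import combinations
from statistics import mean


def mention_frequency(responses: list[set[str]]) -> dict[str, float]:
    """Fraction of responses in which each brand appears."""
    counts = Counter(brand for brands in responses for brand in brands)
    return {brand: n / len(responses) for brand, n in counts.items()}


def mean_pairwise_overlap(responses: list[set[str]]) -> float:
    """Average Jaccard similarity over every pair of brand lists (1.0 = identical).

    Needs at least two responses.
    """
    scores = [
        len(a & b) / len(a | b) if a | b else 1.0
        for a, b in combinations(responses, 2)
    ]
    return mean(scores)


def competitors_without_brand(responses: list[set[str]], brand: str) -> Counter:
    """Count how often each other brand appears in responses that omit `brand`."""
    return Counter(
        other for brands in responses if brand not in brands for other in brands
    )


# Hypothetical brand lists from four runs of the same category query.
responses = [
    {"BrandX", "Acme", "Globex"},
    {"Acme", "Globex", "Initech"},
    {"BrandX", "Acme", "Initech"},
    {"Acme", "Umbrella", "Globex"},
]

print(mention_frequency(responses)["BrandX"])  # 0.5
print(round(mean_pairwise_overlap(responses), 2))  # 0.45
print(competitors_without_brand(responses, "BrandX")["Acme"])  # 2
```

Even in this tiny sample, no two lists are identical. That variability is why the counts only mean something after dozens of runs.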

How to Start Monitoring Today

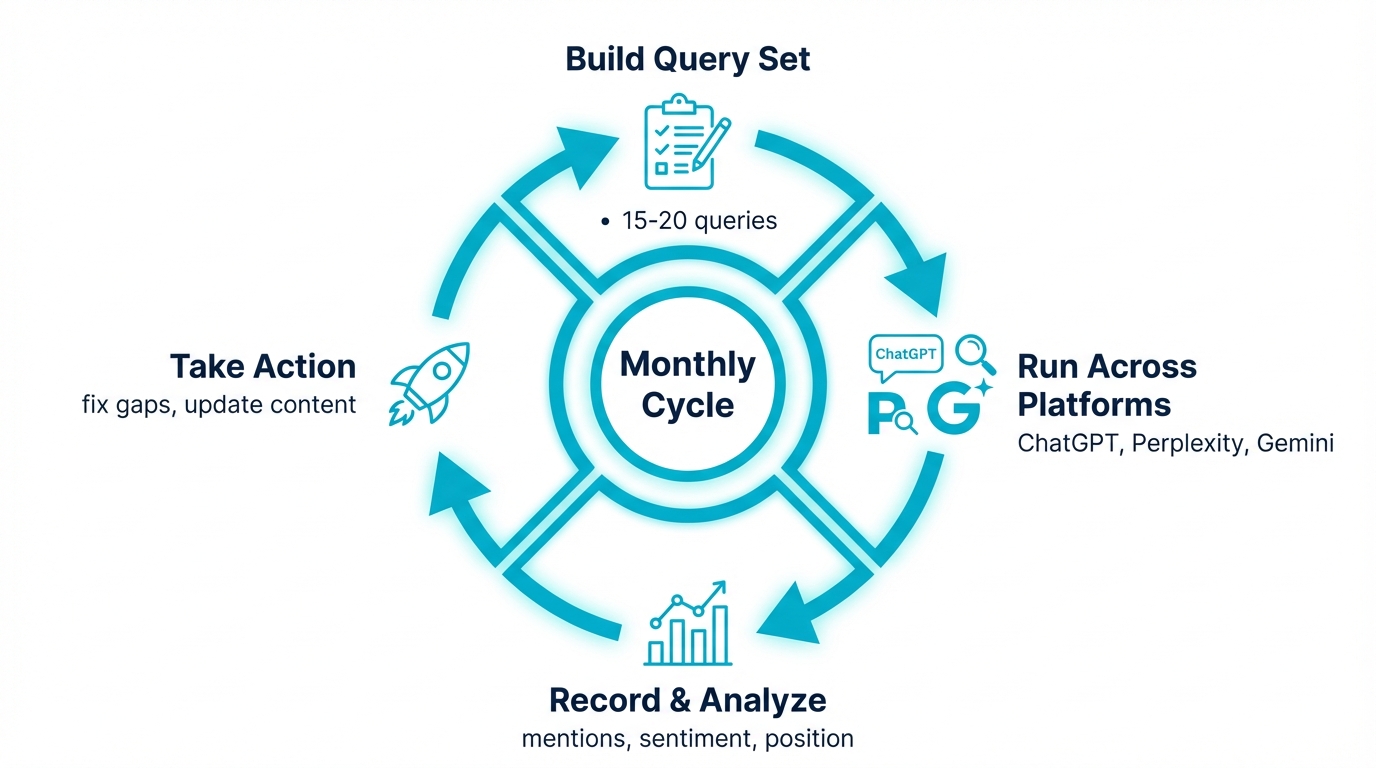

You don't need specialized tooling to begin. Start with a manual process and systematize it over time.

Build your query set. Create 15-20 queries that represent how customers ask about your category. Include:

- Category queries: "best [your category] tools" and "top [your category] for [use case]"

- Brand queries: "what is [your brand]" and "[your brand] reviews"

- Comparison queries: "[your brand] vs [competitor]"

- Problem queries: "[customer pain point] solutions"

- Purchase queries: "which [product type] should I buy for [need]"
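If you track several competitors or use cases, you can generate the query set from templates so every cycle uses identical wording. Here is a minimal sketch. The template strings mirror the bullets above, and the field names and values are placeholders to replace with your own.

```python
from itertools import product
from string import Formatter

# Hypothetical templates mirroring the five query types above.
TEMPLATES = {
    "category": ["best {category} tools", "top {category} for {use_case}"],
    "brand": ["what is {brand}", "{brand} reviews"],
    "comparison": ["{brand} vs {competitor}"],
    "problem": ["{pain_point} solutions"],
    "purchase": ["which {product_type} should I buy for {need}"],
}


def placeholders(template: str) -> list[str]:
    """Names of the {fields} a template uses, in order, without duplicates."""
    names = (name for _, name, _, _ in Formatter().parse(template) if name)
    return list(dict.fromkeys(names))


def build_query_set(values: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Expand every template with every combination of the values it needs."""
    queries = []
    for query_type, templates in TEMPLATES.items():
        for template in templates:
            names = placeholders(template)
            for combo in product(*(values[name] for name in names)):
                queries.append((query_type, template.format(**dict(zip(names, combo)))))
    return queries


queries = build_query_set({
    "category": ["project management"],
    "use_case": ["remote teams"],
    "brand": ["BrandX"],
    "competitor": ["Acme", "Globex"],
    "pain_point": ["missed deadlines"],
    "product_type": ["project management software"],
    "need": ["a 10-person agency"],
})
for query_type, query in queries:
    print(f"{query_type:<10} {query}")
```

These sample values expand to 8 queries. To reach the 15-20 range, add use cases, competitors, or alternative phrasings of each template.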

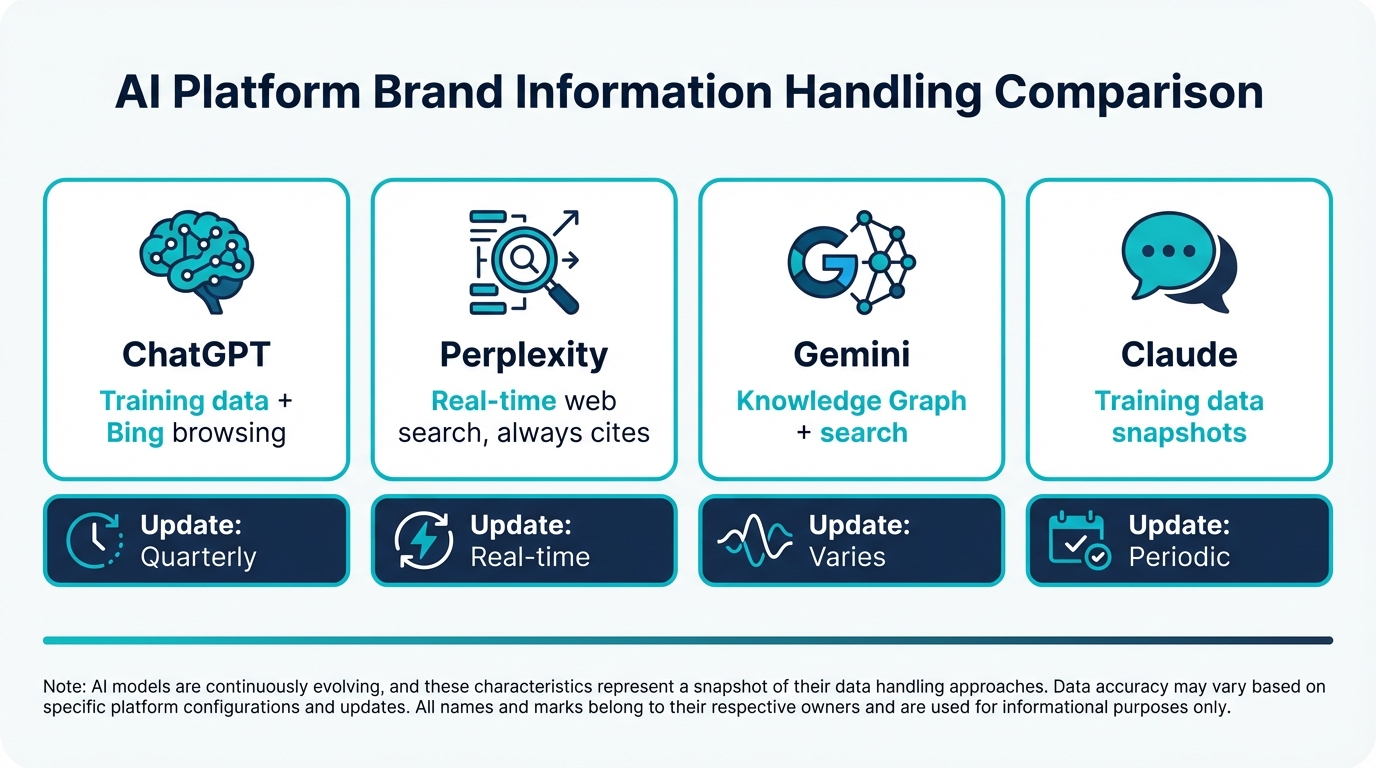

Choose your platforms. At minimum, monitor ChatGPT, Perplexity, and Google Gemini. These three cover the majority of AI-generated brand interactions. Add Google AI Overviews if your category triggers them frequently, and Claude if your audience skews technical.

Given BrightEdge's finding that ChatGPT and Google AI disagree on brand recommendations 62% of the time, single-platform monitoring will mislead you. Cross-platform tracking is not optional.
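You can check platform disagreement on your own query set. The sketch below uses one simple definition: the share of queries where two platforms name a different brand first. That definition is our assumption and not necessarily how BrightEdge measured it. The sample answers are made up.

```python
def first_brand_disagreement(
    platform_a: dict[str, list[str]], platform_b: dict[str, list[str]]
) -> float:
    """Share of queries answered by both platforms where the first-named brand differs.

    Each dict maps a query to the ordered list of brands in that platform's answer.
    Queries missing from either platform, or with no brands, are skipped.
    """
    shared = [q for q in platform_a if platform_a[q] and platform_b.get(q)]
    if not shared:
        return 0.0
    differ = sum(1 for q in shared if platform_a[q][0] != platform_b[q][0])
    return differ / len(shared)


chatgpt = {
    "best pm tools": ["Acme", "BrandX"],
    "pm tools for remote teams": ["BrandX", "Globex"],
    "pm software for agencies": ["Globex"],
}
gemini = {
    "best pm tools": ["Acme"],
    "pm tools for remote teams": ["Globex", "BrandX"],
    "pm software for agencies": ["Initech", "Globex"],
}
print(f"{first_brand_disagreement(chatgpt, gemini):.0%}")  # 67%
```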

Set your cadence. Run your full query set at least monthly. For high-stakes categories (enterprise SaaS, financial services, healthcare), a biweekly or weekly cadence is better. AI models update their knowledge and behavior more frequently than search engines update rankings, so monthly snapshots can miss important shifts. For B2B brands, the urgency is even higher. Forrester's 2026 Buyer Insights report identifies generative AI search as the starting point for B2B buyers. That upends traditional discovery patterns at a time when marketing leaders face mounting pressure to justify every dollar.

Record everything. For each query and platform, record:

- Whether your brand appeared

- Your position in the recommendation list (first mentioned, second, etc.)

- How you were described (sentiment and framing)

- Which competitors appeared alongside you

- What sources the AI cited

- Any factual errors about your brand

Store this in a spreadsheet or database so you can track trends over time. A single snapshot is informative. Three months of data reveals patterns.
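If you start with a spreadsheet, a flat CSV with one row per query, platform, and run date is enough. The sketch below uses only Python's standard library. The column names are our own assumptions, chosen to match the list above. Values read back with `csv.DictReader` come back as strings.

```python
import csv
from dataclasses import asdict, dataclass, fields
from pathlib import Path
from typing import Optional


@dataclass
class MonitoringRecord:
    run_date: str            # ISO date of the monitoring run, e.g. "2026-03-02"
    platform: str            # "ChatGPT", "Perplexity", "Gemini", ...
    query: str
    appeared: bool           # did your brand appear at all?
    position: Optional[int]  # 1 = first brand mentioned; None if absent
    framing: str             # how you were described (sentiment and framing)
    competitors: str         # competitors named alongside you, ";"-separated
    cited_sources: str       # URLs the AI cited, ";"-separated
    factual_errors: str      # notes on any errors about your brand; "" if none


COLUMNS = [f.name for f in fields(MonitoringRecord)]


def append_records(path: Path, records: list[MonitoringRecord]) -> None:
    """Append rows to the log, writing a header only when the file is new."""
    is_new = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=COLUMNS)
        if is_new:
            writer.writeheader()
        writer.writerows(asdict(record) for record in records)


def load_records(path: Path) -> list[dict[str, str]]:
    """Read the whole log back; every value is a string (None is stored as "")."""
    with path.open(newline="", encoding="utf-8") as handle:
        return list(csv.DictReader(handle))
```

After each cycle, call `append_records(Path("ai_brand_log.csv"), [...])` with that cycle's rows. Because the file only grows, three months of history stay in one place for trend analysis.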

The Metrics That Matter

Not every data point deserves executive attention. Focus your monitoring on metrics that connect to business outcomes.

AI Share of Voice: Your mention frequency divided by total mentions across you and your top competitors. This is the single most important metric for understanding your competitive position in AI answers.

Mention Rate: The percentage of relevant queries where your brand appears across all platforms. Track this monthly to see whether your AI presence is growing or shrinking.

Sentiment Score: A consistent measure of how positively or negatively AI describes your brand. Watch for sudden shifts that might indicate a new source of negative content entering AI training data or retrieval pipelines.

Accuracy Rate: The percentage of AI responses about your brand that contain correct, current information. Persistent inaccuracies signal a content gap you need to fill at the source.

Citation Coverage: How often your own content is cited versus competitor content or third-party sources. Being cited in AI Overviews correlates with 35% more organic clicks, so citation coverage is closely tied to traffic.
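Once your log has a row per response, you can compute each metric above in a few lines. The sketch below assumes a hypothetical `Response` record with four fields:

- the brands, in mention order
- a sentiment label for your brand, assigned by a reviewer
- an accuracy judgment for your brand, also assigned by a reviewer
- any cited URLs

The net-sentiment formula (positive minus negative, over labelled responses) is one common convention, not the only one.

```python
from dataclasses import dataclass, field
from typing import Optional
from urllib.parse import urlparse


@dataclass
class Response:
    """One AI answer to one query on one platform (hypothetical schema)."""
    brands: list[str]                # brands in the order they were mentioned
    sentiment: Optional[str] = None  # "positive" | "neutral" | "negative" for your brand
    accurate: Optional[bool] = None  # were the facts about your brand correct?
    cited_urls: list[str] = field(default_factory=list)


def share_of_voice(rs: list[Response], brand: str, competitors: list[str]) -> float:
    """Your mentions divided by all mentions of you plus tracked competitors."""
    tracked = {brand, *competitors}
    mentions = [b for r in rs for b in r.brands if b in tracked]
    return mentions.count(brand) / len(mentions) if mentions else 0.0


def mention_rate(rs: list[Response], brand: str) -> float:
    """Share of responses that mention your brand at all."""
    return sum(brand in r.brands for r in rs) / len(rs) if rs else 0.0


def sentiment_score(rs: list[Response]) -> float:
    """Net sentiment from -1 (all negative) to +1 (all positive) over labelled responses."""
    labels = [r.sentiment for r in rs if r.sentiment]
    if not labels:
        return 0.0
    return (labels.count("positive") - labels.count("negative")) / len(labels)


def accuracy_rate(rs: list[Response]) -> float:
    """Share of fact-checked responses whose claims about your brand were correct."""
    checked = [r.accurate for r in rs if r.accurate is not None]
    return sum(checked) / len(checked) if checked else 0.0


def citation_coverage(rs: list[Response], own_domain: str) -> float:
    """Share of all cited URLs that point at your own domain or its subdomains."""
    hosts = [urlparse(u).hostname or "" for r in rs for u in r.cited_urls]
    own = sum(h == own_domain or h.endswith("." + own_domain) for h in hosts)
    return own / len(hosts) if hosts else 0.0


rs = [
    Response(["Acme", "BrandX"], "positive", True,
             ["https://brandx.com/pricing", "https://reviews.example.org/pm"]),
    Response(["BrandX", "Globex"], "neutral", False, ["https://www.brandx.com/features"]),
    Response(["Acme", "Globex", "Initech"], cited_urls=["https://acme.com/"]),
    Response(["BrandX"], "positive", True),
]
print(f"{share_of_voice(rs, 'BrandX', ['Acme', 'Globex']):.0%}")  # 43%
print(f"{mention_rate(rs, 'BrandX'):.0%}")  # 75%
print(f"{citation_coverage(rs, 'brandx.com'):.0%}")  # 50%
```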

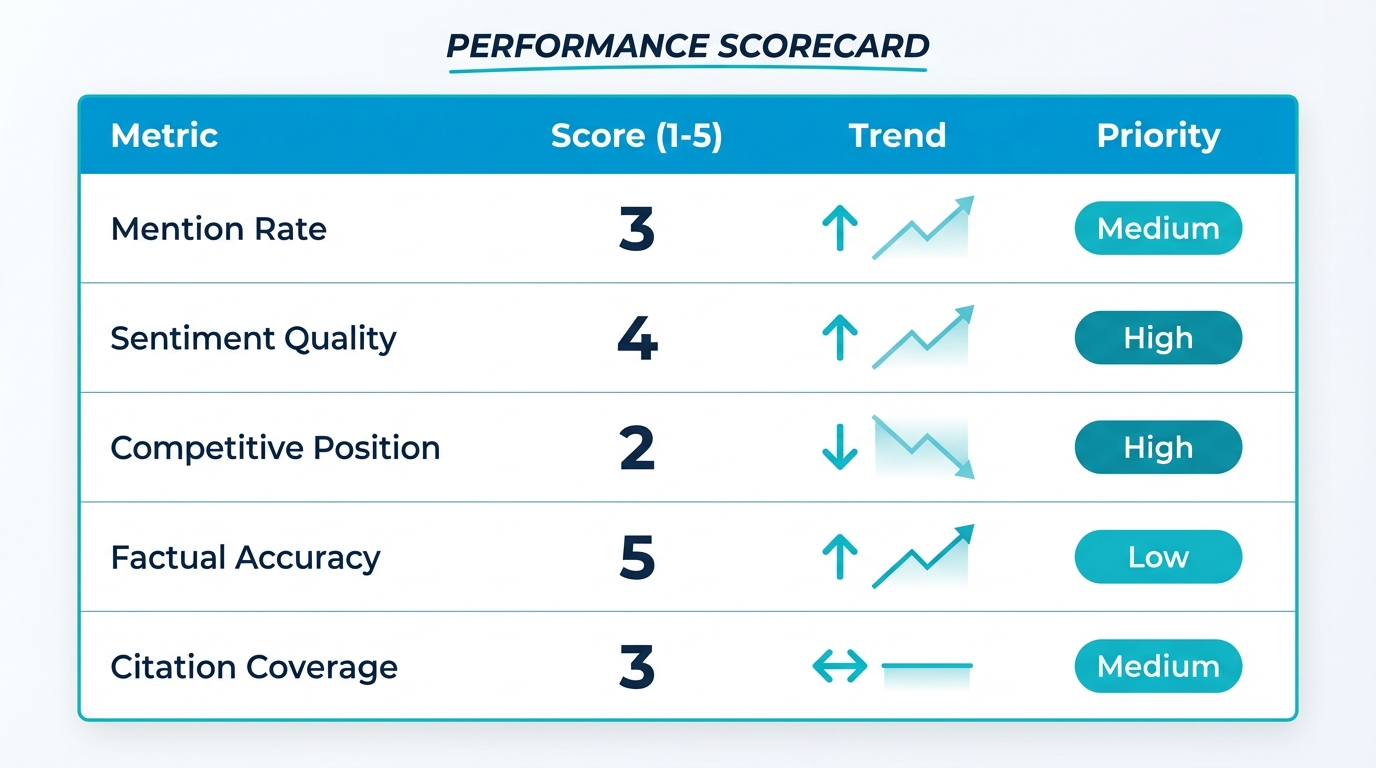

Building Your AI Brand Monitoring Scorecard

Knowing which metrics to track is one thing. Turning them into a repeatable reporting framework is where most teams stall. Here is a scorecard template you can use starting this week.

For each monitoring cycle (weekly or biweekly), score your brand across five dimensions on a 1-5 scale:

Mention Rate (1-5): What percentage of relevant category queries include your brand? A score of 1 means you appear in fewer than 10% of queries. A 5 means 50%+. Track this across ChatGPT, Perplexity, and Gemini separately, since BrightEdge found that ChatGPT and Google AI disagree on brand recommendations 62% of the time.

Sentiment Quality (1-5): When you are mentioned, how are you framed? A score of 1 means neutral or cautious language ("some users report"). A 5 means strong positive positioning ("widely regarded as a leader"). Sentiment is the difference between being listed and being recommended.

Competitive Position (1-5): Where do you rank relative to competitors in AI responses? A 1 means you consistently appear after 3+ competitors. A 5 means you are typically mentioned first. Position in an AI answer matters just as it does in search results.

Factual Accuracy (1-5): What percentage of AI responses about your brand are factually correct? A 1 means persistent errors (wrong pricing, outdated features, entity confusion). A 5 means consistently accurate representation. Errors erode trust faster in AI than in search, because users treat AI answers as authoritative.

Citation Coverage (1-5): How often is your owned content cited versus competitor or third-party content? A 1 means your content is rarely cited. A 5 means your content is the primary source AI references when discussing your category.
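The scorecard anchors only the ends of each scale. The sketch below converts raw numbers into scores for the two most mechanical dimensions, mention rate and competitive position. The intermediate cut-offs are placeholders we chose for illustration, so tune them to your category.

```python
from bisect import bisect_right
from statistics import mean

# Lower bound of scores 2-5 for mention rate. The 10% and 50% cut-offs come from
# the scorecard; 20% and 35% are illustrative placeholders.
MENTION_RATE_FLOORS = [0.10, 0.20, 0.35, 0.50]

# Exclusive upper bound on mean list position for scores 5, 4, 3, 2. Only the end
# points follow the scorecard (mentioned first = 5, behind 3+ competitors = 1).
POSITION_CEILINGS = [(1.5, 5), (2.5, 4), (3.5, 3), (4.0, 2)]


def mention_rate_score(rate: float) -> int:
    """Map a 0-1 mention rate to 1-5: under 10% scores 1, 50% or more scores 5."""
    return 1 + bisect_right(MENTION_RATE_FLOORS, rate)


def competitive_position_score(positions: list[int]) -> int:
    """Map list positions (1 = first brand named) to 1-5 via their mean."""
    if not positions:
        return 1  # assumption: never being mentioned counts as the weakest position
    avg = mean(positions)
    for ceiling, score in POSITION_CEILINGS:
        if avg < ceiling:
            return score
    return 1  # mean position 4+: consistently behind three or more competitors


# Score each platform separately, as the scorecard recommends.
rates = {"ChatGPT": 0.42, "Perplexity": 0.55, "Gemini": 0.08}
print({platform: mention_rate_score(r) for platform, r in rates.items()})
# {'ChatGPT': 4, 'Perplexity': 5, 'Gemini': 1}
print(competitive_position_score([1, 2, 1, 1]))  # 5
```

Sentiment, accuracy, and citation scores can follow the same band pattern. For sentiment, the inputs are usually a reviewer's judgment rather than a count.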

The scorecard gives you two things: a snapshot of where you stand today, and a trend line that shows whether your efforts are working over time. Score every monitoring cycle and share a monthly roll-up with leadership. A three-month trend is worth more than any single data point.
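To produce the snapshot and the trend line, sum the five scores for each cycle and compare the latest card with the first. Here is a minimal sketch using hypothetical scores for three months.

```python
DIMENSIONS = ("mention_rate", "sentiment", "competitive_position", "accuracy", "citation_coverage")


def scorecard_total(card: dict[str, int]) -> int:
    """Sum the five 1-5 scores into a 5-25 snapshot, rejecting out-of-range values."""
    for dim in DIMENSIONS:
        if not 1 <= card[dim] <= 5:
            raise ValueError(f"{dim} must be between 1 and 5, got {card[dim]}")
    return sum(card[dim] for dim in DIMENSIONS)


def trend(cards: list[dict[str, int]]) -> dict[str, int]:
    """Change in each dimension from the first to the latest scorecard."""
    first, latest = cards[0], cards[-1]
    return {dim: latest[dim] - first[dim] for dim in DIMENSIONS}


# Hypothetical scorecards for three consecutive months.
history = [
    {"mention_rate": 2, "sentiment": 3, "competitive_position": 2, "accuracy": 4, "citation_coverage": 1},
    {"mention_rate": 3, "sentiment": 3, "competitive_position": 2, "accuracy": 3, "citation_coverage": 2},
    {"mention_rate": 3, "sentiment": 4, "competitive_position": 3, "accuracy": 4, "citation_coverage": 2},
]
print([scorecard_total(card) for card in history])  # [12, 13, 16]
print(trend(history))
# {'mention_rate': 1, 'sentiment': 1, 'competitive_position': 1, 'accuracy': 0, 'citation_coverage': 1}
```

The per-dimension trend matters more than the total. A rising total can hide a flat or slipping dimension, like accuracy here.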

Common Monitoring Mistakes to Avoid

After working with dozens of brands setting up their AI monitoring practices, a few failure patterns show up repeatedly.

Single-platform monitoring: Running queries only on ChatGPT and assuming the results apply everywhere. They do not. Each model has different training data, different retrieval pipelines, and different ranking behavior. ChatGPT might love your brand while Google AI Overviews ignores it. Cross-platform monitoring is not a nice-to-have.

Snapshot-only analysis: Running a monitoring cycle once, documenting the results, and not following up. AI models update continuously. A brand that appeared in January might disappear in February because a competitor published better content or the model updated its retrieval index. Monitoring is a practice, not an audit.

Ignoring the framing: Counting mentions without analyzing how you are described. A brand that appears in every answer but is consistently positioned as "the budget option" or "a smaller player" has an influence problem that mention counts will never surface.

AI Brand Monitoring Tools: Feature Comparison

| Dimension | Otterly AI | Profound | friction AI | Manual Monitoring |
|---|---|---|---|---|
| Platform coverage | ChatGPT, Perplexity, Gemini, Copilot + add-ons | 10+ including ChatGPT, Perplexity, Gemini, Copilot, Grok | ChatGPT, Perplexity, Claude, Gemini | Limited by time (typically 2-3 platforms) |
| Sentiment tracking | Basic sentiment indicators | Sentiment analysis with benchmarks | Per-prompt sentiment breakdown | Manual classification (subjective) |
| Citation tracking | Citation monitoring | Citation analytics with source types | Source attribution per response | Manual URL checking in Perplexity |
| Competitive analysis | Competitor tracking | Competitive benchmarking | Side-by-side brand comparison | Spreadsheet-based tracking |
| Alert frequency | Configurable alerts | Real-time monitoring | Continuous monitoring | Depends on checking cadence |
| Starting price | $29/mo | Sales required | $69/mo | Free (your time) |

Each tool approaches monitoring differently. The right choice depends on your team size, budget, and which platforms matter most for your audience.

Setting Up Your First AI Brand Monitor

If you've never tracked your brand in AI before, here's how to start in under an hour:

Step 1: Pick your platforms. Start with ChatGPT and Perplexity. These two cover the largest share of AI-generated brand interactions, and Perplexity's transparent citations make it the easiest to monitor manually.

Step 2: Write 10 test prompts. Include category queries ("best [your category] tools"), comparison queries ("[your brand] vs [competitor]"), and direct queries ("what is [your brand]?"). These should mirror what your potential customers actually ask.

Step 3: Run and record. Open fresh sessions in both platforms. Run each prompt. Record: did your brand appear? How was it described? Which competitors appeared? What sources were cited?

Step 4: Score your baseline. Calculate your mention rate (mentions / total prompts) and note the sentiment. This is your starting point. Repeat monthly to track progress.

Step 5: Decide on tooling. If you need to track more than 20 prompts across more than 2 platforms, manual monitoring stops scaling. That's when purpose-built tools like friction AI start saving time.
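A quick way to see when manual monitoring stops scaling is to count runs, since every prompt is run once per platform in every cycle. The three minutes per run below is an assumption. Time a few of your own runs and substitute your figure.

```python
def manual_runs_per_month(prompts: int, platforms: int, cycles_per_month: int) -> int:
    """Each prompt is run once per platform in every monitoring cycle."""
    return prompts * platforms * cycles_per_month


def manual_hours_per_month(
    prompts: int, platforms: int, cycles_per_month: int, minutes_per_run: float = 3.0
) -> float:
    """minutes_per_run is a placeholder: time to run one prompt and log the result."""
    return manual_runs_per_month(prompts, platforms, cycles_per_month) * minutes_per_run / 60


print(manual_hours_per_month(10, 2, 1))  # 1.0  (the quick-start baseline above)
print(manual_hours_per_month(50, 4, 4))  # 40.0 (50 queries, 4 platforms, weekly)
```

The workload grows multiplicatively. Doubling prompts, platforms, and cadence at the same time multiplies the hours by eight.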

When to Invest in Tooling

Manual monitoring works for establishing a baseline and understanding the landscape. It stops scaling when:

- Your query set exceeds 50 queries

- You need to track more than 3 competitors

- Leadership wants regular reporting with trend data

- You need to detect changes between monitoring cycles

- Multiple team members need access to the data

At that point, purpose-built AI monitoring tools save significant time and provide the consistency that manual processes can't match. For guidance on evaluating tools, see our guide on AI brand monitoring tools: what to look for.

Building a Monitoring Practice, Not Just a Task

The biggest mistake teams make is treating AI brand monitoring as a one-time audit. You check what ChatGPT says, note the results, and move on. That approach misses the entire point.

AI models update continuously. New content enters training data. Retrieval pipelines index fresh pages. Competitor actions shift the landscape. A monitoring practice means building a recurring workflow that tracks changes over time and triggers action when something shifts.

The practice has three components:

- Collection: Running queries and recording results on a fixed cadence

- Analysis: Comparing results against previous periods to identify trends, anomalies, and opportunities

- Response: Taking action on findings, whether that means publishing content to correct misinformation, updating structured data to improve entity recognition, or escalating competitive threats
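The analysis component can start as a simple period-over-period comparison. It flags any metric that moves more than a set amount, so the response step knows where to look. In this sketch, the metric names, sample values, and 10-percentage-point threshold are illustrative assumptions.

```python
def flag_shifts(
    previous: dict[str, float], current: dict[str, float], threshold: float = 0.10
) -> dict[str, float]:
    """Return metrics that moved by at least `threshold` since the previous cycle.

    Values are fractions (0.42 = 42%); the 10-point default is an illustrative
    trigger, not a benchmark. Metrics missing from either period are ignored.
    """
    return {
        name: round(current[name] - previous[name], 4)
        for name in current
        if name in previous and abs(current[name] - previous[name]) >= threshold
    }


february = {"mention_rate": 0.42, "share_of_voice": 0.30, "accuracy_rate": 0.90}
march = {"mention_rate": 0.28, "share_of_voice": 0.33, "accuracy_rate": 0.75}
for metric, change in flag_shifts(february, march).items():
    print(f"{metric}: {change:+.0%} vs last cycle")  # hand these to the response step
```

An absolute threshold is easy to explain to stakeholders. With small query sets, consider requiring a shift to persist for two cycles before acting, since run-to-run variation alone can move a rate by several points.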

For how to build this into your marketing team's workflow, see our guide on setting up AI brand monitoring for a marketing team.

Frequently Asked Questions

What is AI brand monitoring?

AI brand monitoring is the practice of tracking how AI models (ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews) describe, cite, and recommend your brand when users ask about your category. It measures AI-generated outputs rather than traditional search rankings or social mentions. Core signals: visibility rate, citation frequency, sentiment framing, and competitor share of voice.

How do I set up AI brand monitoring?

Four steps. First, define 15-20 category queries covering brand-direct, comparison, alternative, and use-case intents. Second, run them monthly across the major AI platforms (at minimum ChatGPT, Perplexity, and Gemini). Third, log results in a structured format (appearance, citation, sentiment, competitor context). Fourth, review trend data after 3 months to spot patterns. Automated platforms handle steps 2-4 at scale.

What should I monitor in AI brand tracking?

Visibility (does your brand appear?), citations (does AI link to your specific pages?), sentiment (favorable, neutral, or unfavorable framing), share of voice (how often you appear vs competitors on the same queries), and platform variance (do the platforms agree or disagree about your brand?). Each signal answers a different question.

How often should I review monitoring data?

Monthly for executive review and trend analysis. Weekly if you're actively managing a competitive threat or post-launch measurement. Quarterly if you're a stable incumbent with slow-moving category dynamics. The key is consistency: same prompt set, same platforms, same cadence. Irregular snapshots generate noise, not signal.

What KPIs matter for AI brand monitoring?

Five primary KPIs. First, visibility rate (percent of queries where you appear). Second, citation rate (percent with your domain as source). Third, competitor share of voice gap (your appearance rate vs nearest competitor). Fourth, sentiment score (percent positive vs neutral vs negative framing). Fifth, platform consistency (variance across ChatGPT, Claude, Gemini, Perplexity). Track all five monthly.

Related Guides: Go Deeper on AI Brand Monitoring

This guide covers the foundations. Each aspect of AI brand monitoring has its own dedicated guide for teams ready to go deeper:

Choosing your tools: Not all monitoring platforms are built the same. Our guide to 5 Best AI Brand Monitoring Tools in 2026 breaks down what to evaluate, from platform coverage to alert capabilities, with side-by-side feature comparisons.

Setting up your team's workflow: Monitoring only works if it's embedded in your team's existing process. How to Set Up AI Brand Monitoring for Marketing Teams walks through ownership, cadence, and integration with your current stack.

How AI monitoring differs from social listening: If you're coming from a social listening background, AI Brand Monitoring vs Social Listening: What's Different in 2026 explains why the mechanics, metrics, and required tooling are fundamentally different.

Catching issues early: For a proactive approach to reputation risk in AI answers, see How to Catch AI Reputation Issues Before They Spread.

Tracking sentiment shifts: To understand not just whether you're mentioned but how you're described, see How to Track AI Sentiment Changes Over Time.

Monitoring competitors: For the competitive intelligence angle, see How to Monitor Competitor Mentions in AI Answers.