ChatGPT brand recommendations are the specific brands that ChatGPT mentions, endorses, or suggests when users ask for product and service advice. These recommendations shape purchasing decisions for millions of users daily, and understanding which brands appear (and why) gives you a concrete blueprint for improving your own AI visibility.

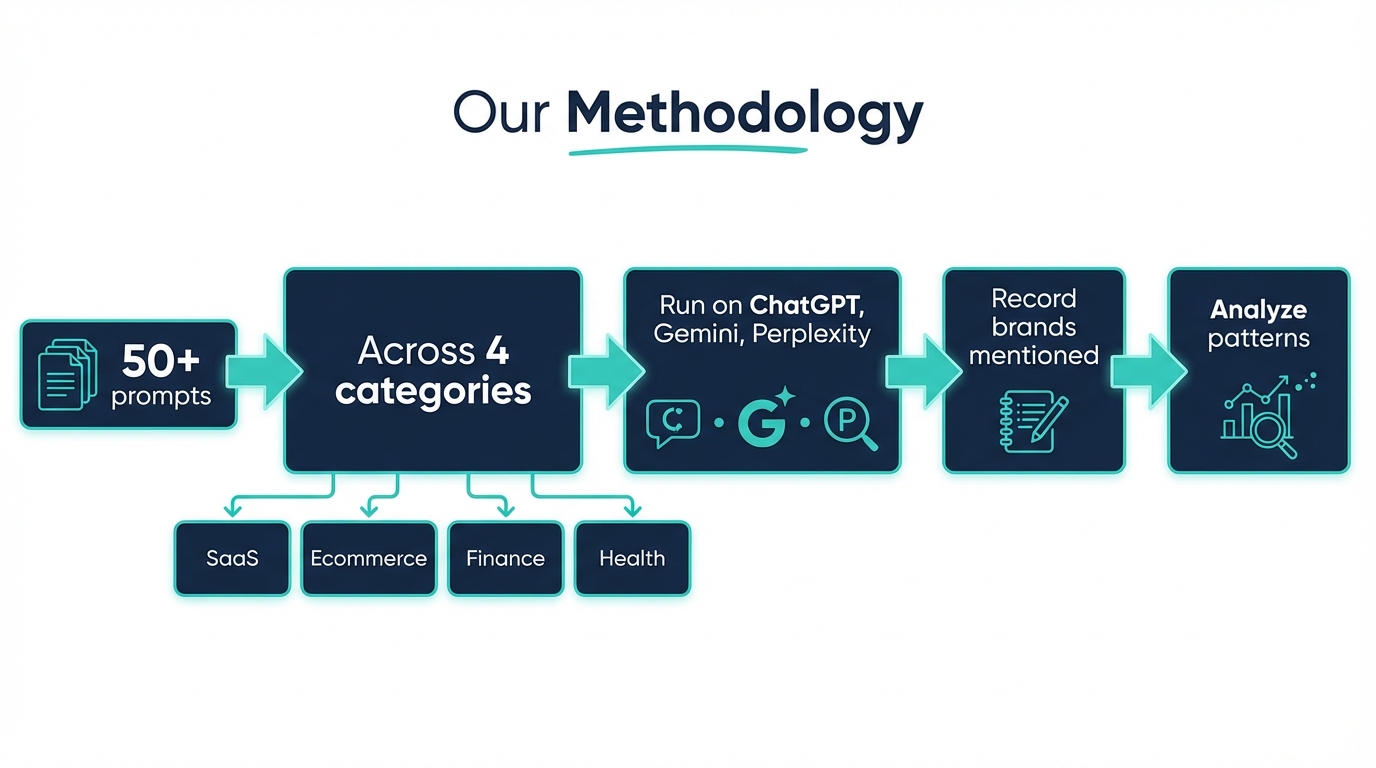

We wanted to move past speculation. Instead of guessing which brands ChatGPT favors, we ran a structured study across four major categories and documented every recommendation. The results reveal clear, repeatable patterns that any brand can act on.

Our Methodology

We queried ChatGPT (GPT-4o) with 50+ prompts across four categories: SaaS/Productivity, Ecommerce, Finance, and Health/Wellness. Each category received 12-15 unique prompts, phrased the way real users ask questions.

Prompts ranged from broad ("What's the best project management tool?") to specific ("Which CRM is best for a 20-person sales team?"). We also tested comparison prompts ("Slack vs. Teams vs. Discord for remote work") and recommendation prompts ("What do you recommend for email marketing?").

Every response was logged with the exact brands mentioned, their position in the response (first mention carries weight), and whether ChatGPT framed them positively, neutrally, or with caveats. We ran each prompt three times on different days to check for consistency.

A few ground rules: we used default ChatGPT settings with no custom instructions, no plugins, and no browsing mode. This gave us the purest view of what the model's training data produces without external influence.
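The logging scheme described above can be sketched as a small data structure plus two tallies. This is a minimal illustration, not the tooling used in the study: the `RunLog` class, the function names, and the sample runs are all hypothetical, and the sample brand orderings are made up for demonstration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class RunLog:
    """One ChatGPT response to one prompt (hypothetical structure)."""
    prompt: str
    run: int                  # 1, 2, or 3 (one run per day)
    brands: list[str]         # brands in order of mention
    framing: dict[str, str]   # brand -> "positive" | "neutral" | "caveat"


def first_position_counts(logs, prompt):
    """Count how often each brand was mentioned first for a prompt."""
    return Counter(
        log.brands[0] for log in logs if log.prompt == prompt and log.brands
    )


def mention_rate(logs, prompt, brand):
    """Share of runs for a prompt in which the brand appeared at all."""
    runs = [log for log in logs if log.prompt == prompt]
    if not runs:
        return 0.0
    return sum(brand in log.brands for log in runs) / len(runs)


# Illustrative data only; these are not the study's actual logs.
PROMPT = "What's the best project management tool?"
logs = [
    RunLog(PROMPT, 1, ["Asana", "Trello", "Jira"],
           {"Asana": "positive", "Trello": "neutral", "Jira": "caveat"}),
    RunLog(PROMPT, 2, ["Monday.com", "Asana", "ClickUp"],
           {"Monday.com": "positive", "Asana": "positive", "ClickUp": "neutral"}),
    RunLog(PROMPT, 3, ["Asana", "Monday.com", "Trello"],
           {"Asana": "positive", "Monday.com": "neutral", "Trello": "neutral"}),
]

print(first_position_counts(logs, PROMPT))
print(mention_rate(logs, PROMPT, "Asana"))
```

Keeping position and framing in the same record makes it easy to ask both "how often did this brand lead?" and "how often did it appear with caveats?" from one log.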

Category-by-Category Findings

SaaS and Productivity

SaaS recommendations were the most consistent of any category. The same names appeared across multiple prompt variations with little fluctuation between runs.

| Prompt | Top Brands Mentioned | Notes |
|---|---|---|
| "Best project management tool" | Asana, Monday.com, Trello, Jira, ClickUp | Asana appeared first in 2 of 3 runs |
| "Best CRM for small business" | HubSpot, Salesforce, Zoho CRM, Pipedrive | HubSpot recommended first every time |
| "Best email marketing platform" | Mailchimp, ConvertKit, ActiveCampaign, Constant Contact | Mailchimp appeared in every response |
| "Best team communication tool" | Slack, Microsoft Teams, Discord, Zoom | Slack led in all 3 runs |

HubSpot and Mailchimp stood out for consistency. HubSpot was the first recommendation in every CRM run, and Mailchimp appeared in every email marketing response. Newer tools like Notion and Linear appeared only when prompts were highly specific (e.g., "best tool for engineering teams").

Ecommerce

Ecommerce recommendations were more fragmented. ChatGPT frequently hedged its answers and offered more caveats than in other categories.

| Prompt | Top Brands Mentioned | Notes |
|---|---|---|
| "Best ecommerce platform" | Shopify, WooCommerce, BigCommerce, Squarespace | Shopify appeared first in all runs |
| "Best online payment processor" | Stripe, PayPal, Square | Stripe and PayPal alternated for first position |
| "Best dropshipping platform" | Shopify, Oberlo, Spocket, DSers | Shopify dominated; Oberlo still appeared despite being shut down |
| "Best product review tool" | Yotpo, Judge.me, Trustpilot, Bazaarvoice | More variation between runs |

Shopify's dominance was striking. It appeared in every ecommerce-adjacent prompt, even ones not directly about platform selection. This suggests that Shopify is heavily represented in ChatGPT's training data, likely because of the sheer volume of Shopify-related content across the web.

One notable finding: ChatGPT recommended Oberlo in two of three dropshipping prompt runs, despite Oberlo shutting down in June 2022. This highlights a real limitation of LLM recommendations: training data cutoffs create stale suggestions.

Finance

Finance prompts triggered the most cautious responses. ChatGPT added disclaimers to nearly every recommendation, and the phrasing shifted from "I recommend" to "popular options include."

| Prompt | Top Brands Mentioned | Notes |
|---|---|---|
| "Best investing app for beginners" | Robinhood, Fidelity, Charles Schwab, Vanguard | Fidelity and Schwab appeared together consistently |
| "Best business bank account" | Mercury, Bluevine, Chase, Relay | Mercury led for startup-focused prompts |
| "Best accounting software" | QuickBooks, Xero, FreshBooks, Wave | QuickBooks appeared first in every run |
| "Best budgeting app" | YNAB, Mint, PocketGuard, Goodbudget | YNAB and Mint alternated |

Established financial institutions (Fidelity, Schwab, Vanguard) appeared far more consistently than fintech startups. The exception was Mercury, which showed up reliably for startup-focused finance prompts. This tracks with Mercury's heavy presence in startup media and editorial coverage from TechCrunch and Y Combinator's directory.

ChatGPT also recommended Mint in two of three budgeting runs, even though Intuit shut Mint down in early 2024. This is another training data artifact.

Health and Wellness

Health recommendations were the most variable across runs. ChatGPT frequently changed which brands it mentioned first, and it added more qualifiers than any other category.

| Prompt | Top Brands Mentioned | Notes |
|---|---|---|
| "Best meditation app" | Headspace, Calm, Insight Timer, Ten Percent Happier | Headspace and Calm split first position |
| "Best fitness tracker" | Apple Watch, Fitbit, Garmin, Whoop | Apple Watch led consistently |
| "Best meal delivery service" | HelloFresh, Blue Apron, Factor, Home Chef | Most variation between runs |
| "Best telehealth platform" | Teladoc, MDLive, Amwell, PlushCare | High variation across runs |

Apple Watch was the standout performer. It appeared first in every wearable-related prompt, reflecting Apple's dominant entity presence across news, reviews, and technical documentation. Headspace and Calm split the meditation category almost evenly, consistent with their heavy PR and editorial footprint.

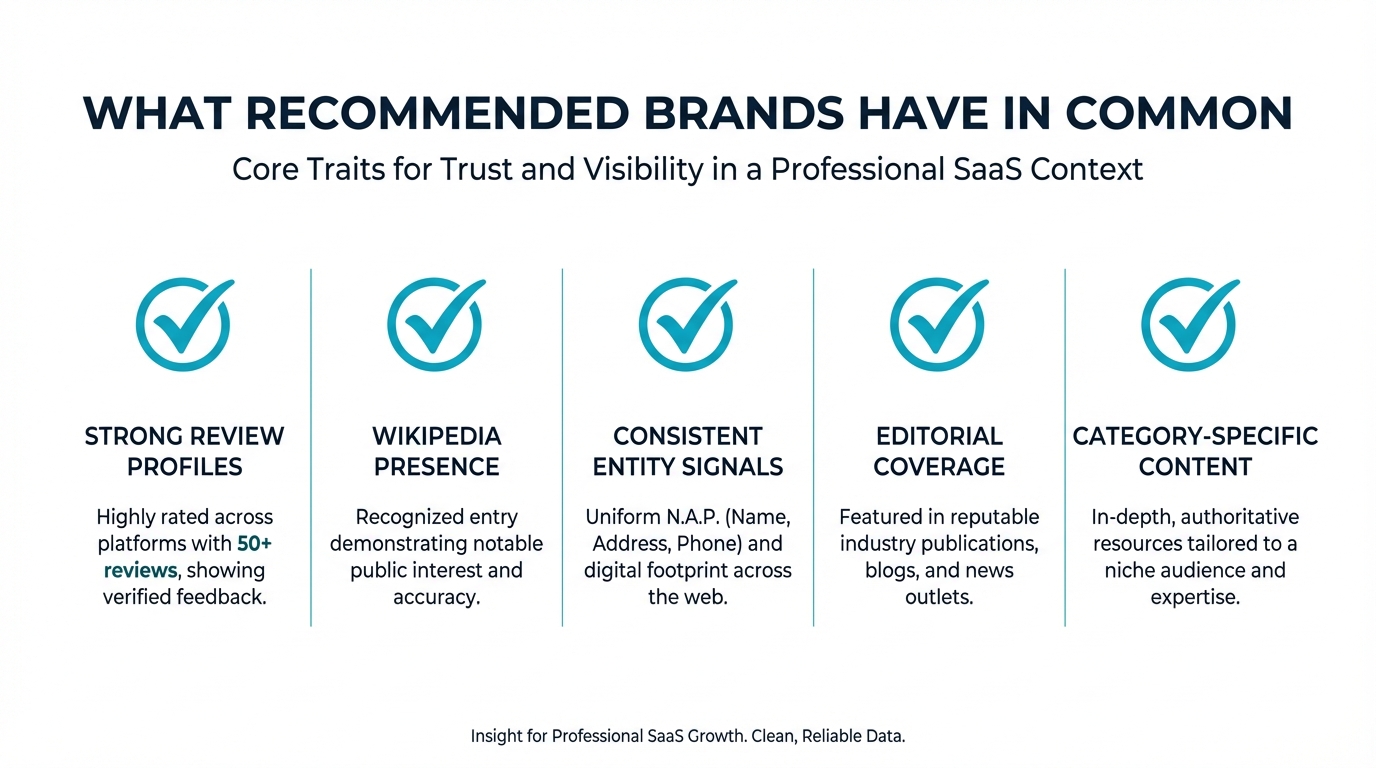

What Do Recommended Brands Have in Common?

After cataloging hundreds of individual recommendations, five patterns emerged consistently.

Strong Review Profiles

Brands that ChatGPT recommended most consistently had extensive review coverage on platforms like G2, Capterra, and Trustpilot. HubSpot has over 10,000 reviews on G2 alone. Mailchimp, Shopify, and QuickBooks show similar depth.

This matters because review sites are heavily represented in LLM training data. Thousands of review pages create repeated entity associations between a brand name and positive product attributes.

Wikipedia and Wikidata Presence

Every brand that appeared in the top position across our tests had a Wikipedia article. This is unlikely to be a coincidence. Wikipedia is one of the highest-quality data sources in LLM training sets, and research from the Allen Institute for AI has shown that Wikipedia text carries disproportionate weight in language model outputs.

Brands without Wikipedia pages appeared sporadically or not at all, even when they had strong market positions.

Consistent Entity Signals

Top-recommended brands maintain consistent naming, descriptions, and categorization across their web presence. HubSpot is always "HubSpot" (not "Hubspot" or "Hub Spot"). Shopify consistently describes itself with the same core terms across every channel.

This consistency helps LLMs build strong entity representations. When a brand's identity is fragmented across different names, descriptions, and categories, the model's internal representation becomes weaker.
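One way to audit naming consistency is to collect the brand spellings used across your own web properties and flag any that differ from the canonical form. The sketch below is a hypothetical helper, not an established tool: it assumes you have already gathered the mentions into a list, and it only catches variants that differ in capitalization, spacing, or punctuation.

```python
import re


def normalize(name: str) -> str:
    """Lowercase and strip everything that is not a letter or digit."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def find_inconsistent_mentions(canonical: str, mentions: list[str]) -> list[str]:
    """Return spellings that refer to the same brand but are not canonical."""
    key = normalize(canonical)
    variants = {m for m in mentions if normalize(m) == key and m != canonical}
    return sorted(variants)


# Example input: spellings collected from site pages, profiles, and listings.
mentions = ["HubSpot", "Hubspot", "Hub Spot", "HubSpot", "Shopify"]
print(find_inconsistent_mentions("HubSpot", mentions))
# → ['Hub Spot', 'Hubspot']
```

A check like this will not catch inconsistent descriptions or categories, which still need a manual review.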

Deep Editorial Coverage

Brands with extensive coverage from authoritative publications (TechCrunch, Forbes, industry-specific outlets) appeared more reliably. This goes beyond having a few articles. The brands ChatGPT recommended most had hundreds or thousands of editorial mentions spanning several years.

Fresh coverage matters less than cumulative coverage. A brand with steady editorial presence since 2018 outperformed a brand with a viral moment in 2023 but limited coverage before that.

Community Discussion Volume

Brands heavily discussed on Reddit, Stack Overflow, Quora, and similar platforms showed up more frequently. These platforms contribute substantially to LLM training data, and the conversational format (real users recommending products to other users) closely matches the recommendation context that ChatGPT operates in.

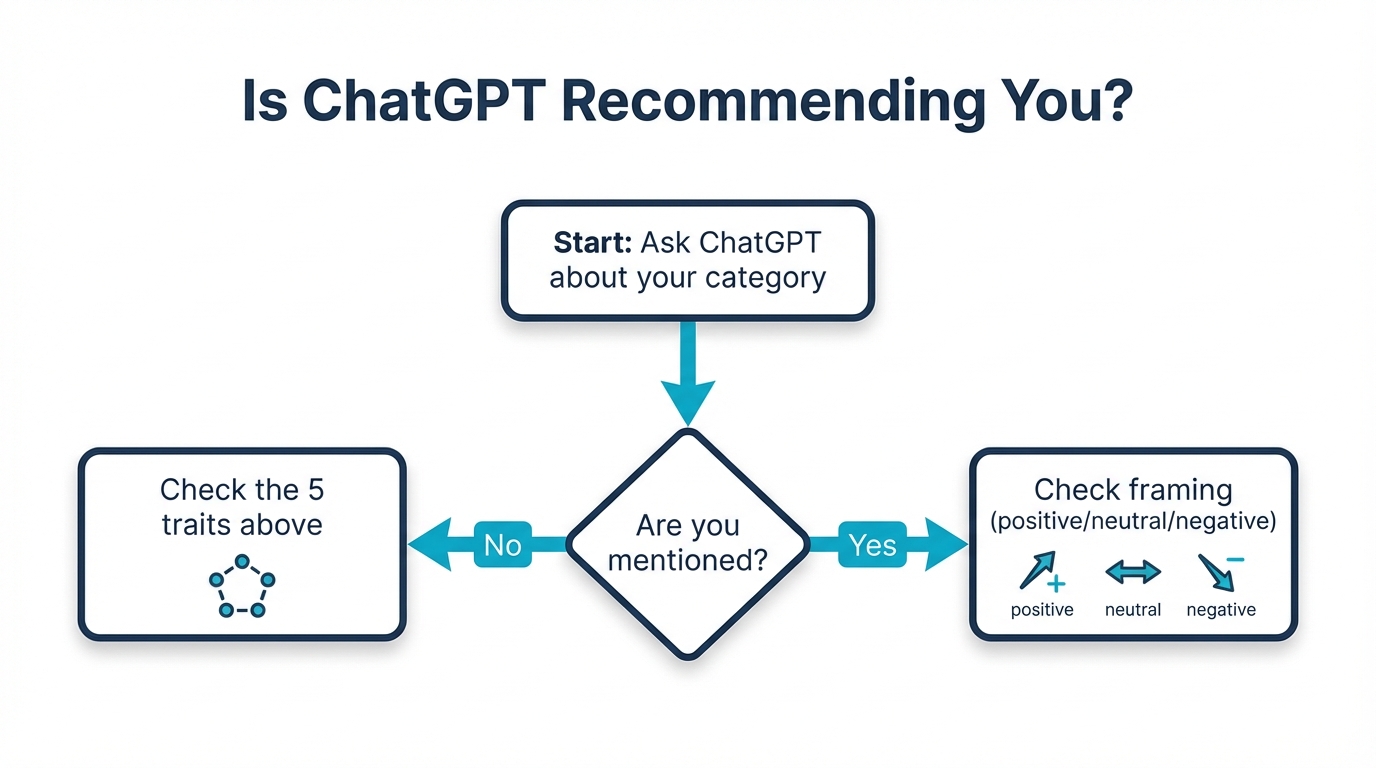

How to Check If Your Brand Is Recommended

Start with manual testing. Open ChatGPT and run these prompt templates with your brand's category:

- "What's the best [your category] tool/product/service?"

- "What do you recommend for [problem your brand solves]?"

- "[Your brand] vs [top competitor] vs [another competitor]"

- "Best [your category] for [your target audience]?"

Run each prompt at least three times on different days. Log which brands appear, their position, and whether your brand is mentioned. Pay attention to framing: does ChatGPT describe your brand positively, or does it add caveats?
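The templates and logging steps above can be sketched in a few lines. This is an illustrative helper for manual testing, assuming you paste each ChatGPT response in as plain text: the function names and the placeholder brands (`Acme`, `Beta`, `Gamma`) are hypothetical, and brands are matched by simple substring search, so a name contained in another name (such as Square inside Squarespace) would need stricter matching.

```python
def build_prompts(category, problem, brand, competitors, audience):
    """Fill the four prompt templates with your own brand details."""
    return [
        f"What's the best {category} tool/product/service?",
        f"What do you recommend for {problem}?",
        " vs ".join([brand, *competitors]),
        f"Best {category} for {audience}?",
    ]


def rank_brands(response_text, tracked_brands):
    """Order tracked brands by first appearance in a response; skip absent ones."""
    text = response_text.lower()
    found = [(text.find(b.lower()), b) for b in tracked_brands]
    return [brand for pos, brand in sorted(found) if pos >= 0]


prompts = build_prompts(
    category="invoicing",
    problem="chasing late payments",
    brand="Acme",
    competitors=["Beta", "Gamma"],
    audience="freelancers",
)

# A made-up response, pasted in by hand after running a prompt.
response = "Popular options include Beta and Acme."
ranking = rank_brands(response, ["Acme", "Beta", "Gamma"])
print(ranking)
# → ['Beta', 'Acme']
```

Recording the ranking for each run gives you both whether your brand was mentioned and where it landed relative to competitors. Framing (positive, neutral, or with caveats) still has to be judged by reading the response.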

This manual process works for a snapshot, but it doesn't scale. AI models update their responses over time, competitors shift, and new brands enter the conversation. You need ongoing monitoring.

friction AI automates this entire process. It runs prompts across ChatGPT, Perplexity, Gemini, and Claude, tracking your brand's recommendation rate, sentiment, and competitive positioning over time. Instead of manual spot-checks, you get a continuous dashboard showing exactly where your brand stands in AI-generated answers.

The manual approach tells you where you are today. Automated monitoring with friction AI tells you whether you're trending up or down, and how every competitive move affects your position.

What to Do If Your Brand Isn't Recommended

If your brand didn't show up in your testing, focus on the patterns above. Build your review profile on G2, Capterra, and industry-specific review platforms. Pursue a Wikipedia article if you qualify under their notability guidelines. Standardize your brand entity signals across every web property. Invest in earning editorial coverage from authoritative publications. Take part in the community discussions where your category comes up, such as Reddit, Stack Overflow, and Quora.

These aren't quick fixes. LLM training data reflects years of accumulated web presence. But every brand that dominated our test results built its position through these same channels. The playbook is clear. The question is how quickly you execute it.