AI share of voice measures your brand's share of all brand mentions in AI-generated answers, relative to your competitors. Unlike traditional share of voice, which tracks advertising impressions or media mentions, AI share of voice captures how often AI platforms like ChatGPT, Perplexity, and Gemini recommend or reference your brand when users ask questions in your category.

What Is AI Share of Voice?

When someone asks an AI assistant "What's the best project management tool?", the response typically mentions three to six brands. Your AI share of voice is your slice of those mentions relative to your competitive set.

This metric matters because AI platforms are becoming a primary discovery channel. A 2024 Gartner forecast predicted that traditional search engine volume would drop 25% by 2026, with search marketing losing share to AI chatbots and other virtual agents. Every brand excluded from AI recommendations loses access to a fast-growing audience.

AI share of voice isn't about vanity metrics. It's a direct proxy for how much of the AI-driven discovery funnel your brand captures. If three competitors are mentioned in every ChatGPT answer and you're not, that's revenue walking to someone else.

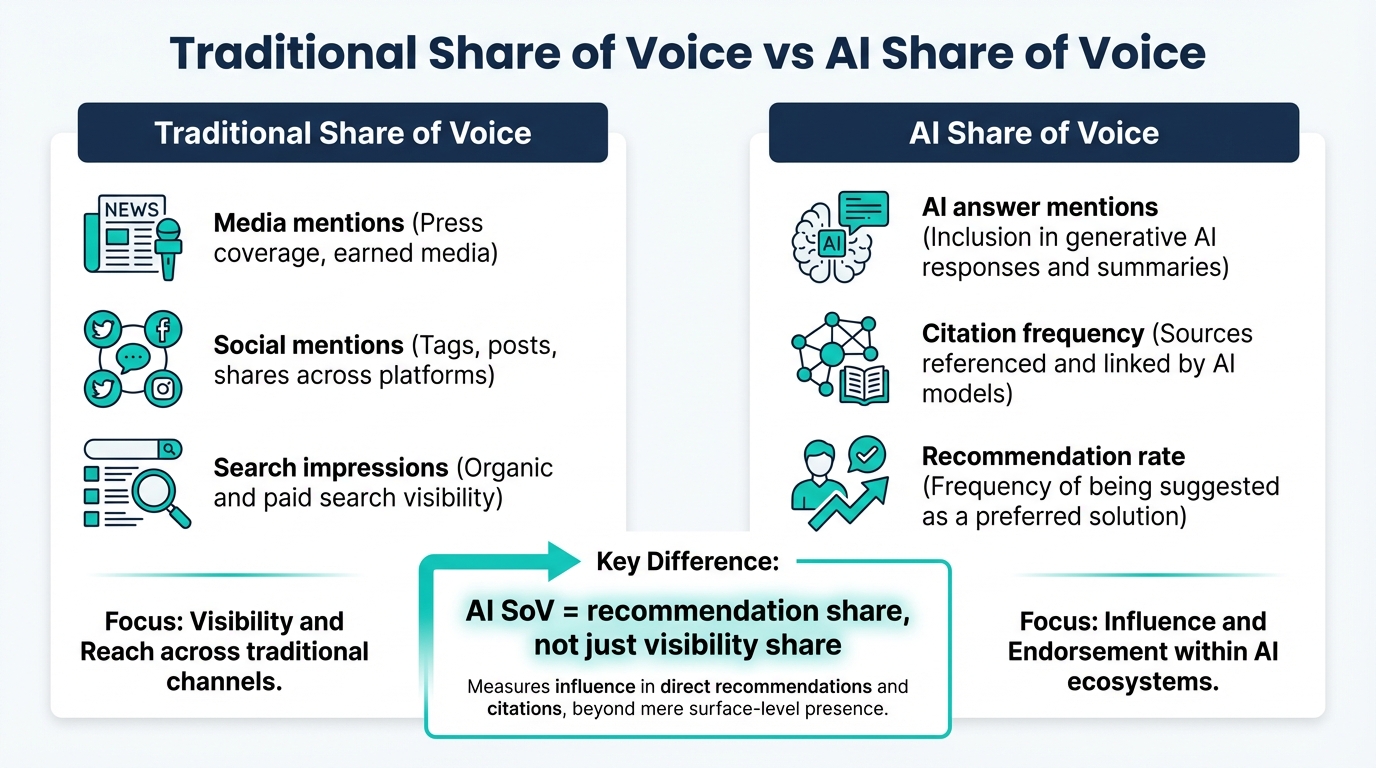

How AI Share of Voice Differs From Traditional Share of Voice

Traditional share of voice (SOV) and AI share of voice (AI SOV) measure fundamentally different things. Here's where they diverge.

| Dimension | Traditional SOV | AI Share of Voice |
|---|---|---|
| What it measures | Ad impressions, media mentions, search visibility | Brand mentions in AI-generated answers |
| Data source | Ad platforms, media monitoring, SEO tools | AI platform outputs (ChatGPT, Perplexity, Gemini, etc.) |
| User intent | Passive exposure (ads, articles) | Active decision-making (asking AI for advice) |
| Controllability | Direct: buy more ads, publish more content | Indirect: improve entity signals, reviews, coverage |
| Update frequency | Real-time or daily | Varies by model update cycle |
| Competitive dynamics | More spend = more share | More authority signals = more share |
| Measurement tools | Semrush, Brandwatch, Meltwater | friction AI, manual prompt testing |

The biggest difference is controllability. With traditional SOV, you can increase your share by increasing your ad budget. AI share of voice doesn't work that way. You can't pay ChatGPT to mention your brand. You earn AI mentions through entity authority, editorial coverage, review depth, and consistent web presence.

This makes AI SOV stickier than traditional SOV. Once you've built strong entity signals, your share tends to be more durable. But it also means that falling behind is harder to reverse quickly.

How to Calculate AI Share of Voice

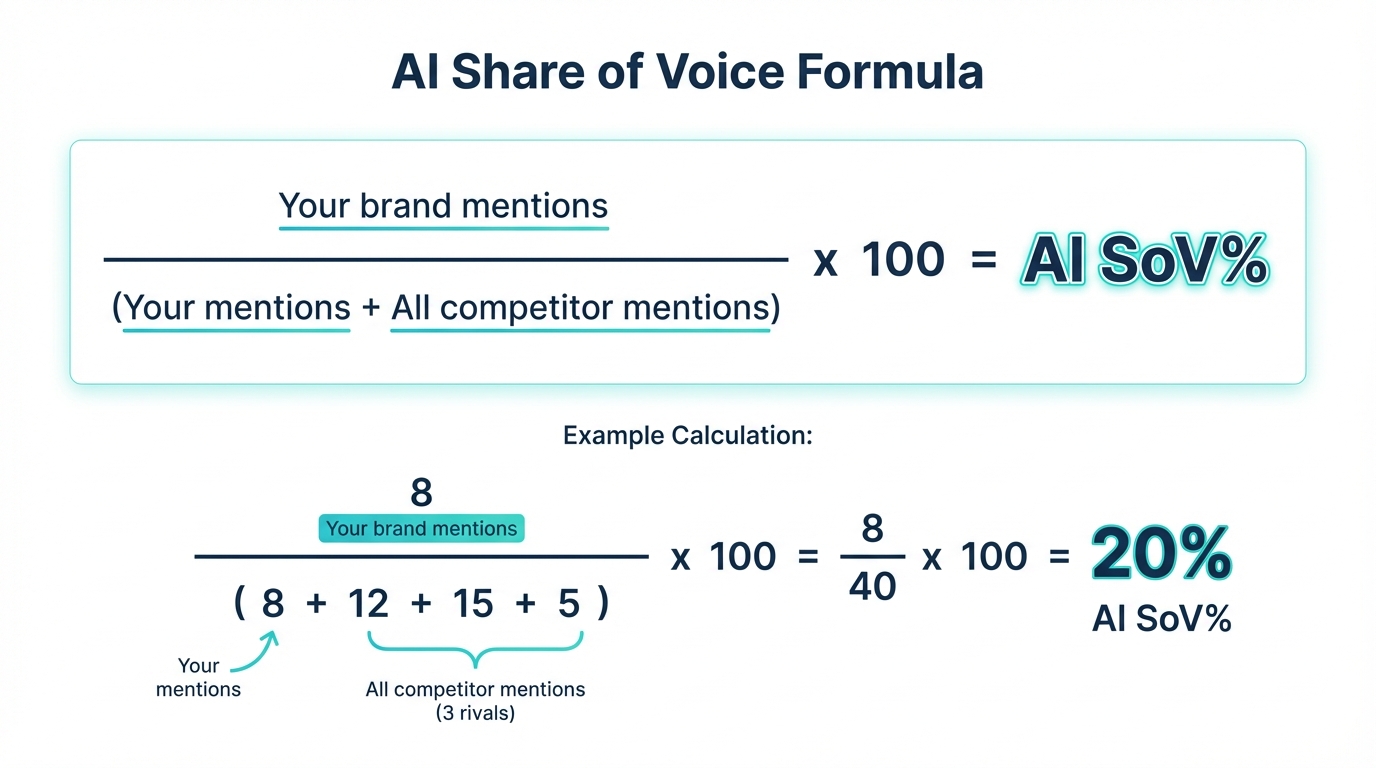

The core formula is straightforward:

AI Share of Voice = (Your Brand Mentions / Total Brand Mentions Across Competitive Set) x 100

Here's how to apply it step by step.

Step 1: Define Your Competitive Set

Pick 4-8 direct competitors. These should be brands that a user would realistically compare against yours. Don't include aspirational competitors that serve a different market segment.

For example, if you sell mid-market CRM software, your set might include HubSpot, Pipedrive, Zoho CRM, Freshsales, and Close. You probably wouldn't include Salesforce Enterprise unless your customers genuinely cross-shop against it.

Step 2: Build Your Prompt Library

Create 15-25 prompts that represent how your target audience asks for recommendations. Mix these types:

- Category prompts: "Best [category] tool"

- Use case prompts: "Best tool for [specific problem]"

- Audience prompts: "Best [category] for [audience type]"

- Comparison prompts: "[Brand A] vs [Brand B] for [use case]"
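One way to keep a prompt library consistent from month to month is to generate it from templates. The sketch below mirrors the four prompt types above. The function name `build_prompts`, the sample problems and audiences, and the placeholder brand "YourBrand" are all illustrative assumptions, not part of any tool.

```python
from itertools import product

# Templates mirroring the four prompt types listed above.
TEMPLATES = {
    "category": "Best {category} tool",
    "use_case": "Best tool for {problem}",
    "audience": "Best {category} for {audience}",
    "comparison": "{brand_a} vs {brand_b} for {problem}",
}

def build_prompts(category, problems, audiences, brand, competitors):
    """Return (prompt_type, prompt_text) pairs built from the templates."""
    prompts = [("category", TEMPLATES["category"].format(category=category))]
    prompts += [("use_case", TEMPLATES["use_case"].format(problem=p)) for p in problems]
    prompts += [
        ("audience", TEMPLATES["audience"].format(category=category, audience=a))
        for a in audiences
    ]
    # One comparison prompt per (competitor, problem) pair.
    prompts += [
        ("comparison", TEMPLATES["comparison"].format(brand_a=brand, brand_b=c, problem=p))
        for c, p in product(competitors, problems)
    ]
    return prompts

# Hypothetical inputs for a mid-market CRM brand.
library = build_prompts(
    category="CRM",
    problems=["tracking sales pipelines", "automating follow-ups"],
    audiences=["small sales teams", "startups"],
    brand="YourBrand",
    competitors=["HubSpot", "Pipedrive"],
)
for prompt_type, text in library:
    print(f"{prompt_type}: {text}")
```

Storing the prompt type alongside the text makes it easy to break results down by type later. Templated prompts are a starting point; it is worth rewording some by hand so they read like real user questions.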

Step 3: Run Prompts Across Platforms

Execute each prompt across your target AI platforms. Run each prompt at least three times to account for response variation. Log every brand mention, its position in the response, and the sentiment framing.
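A minimal sketch of the logging step, assuming you already have each response as plain text. It records which tracked brands appear and in what order. The `Mention` record and `log_mentions` function are hypothetical names, matching is a simple case-sensitive whole-word search, and sentiment is left as a field for a reviewer to fill in rather than inferred automatically.

```python
import re
from dataclasses import dataclass

@dataclass
class Mention:
    platform: str
    prompt: str
    run: int                     # 1, 2, 3... for repeated runs of the same prompt
    brand: str
    position: int                # 1 = first tracked brand named in the response
    sentiment: str = "unrated"   # to be filled in by a human reviewer

def log_mentions(platform, prompt, run, response, brands):
    """Record one Mention per tracked brand found in the response text."""
    found = []
    for brand in brands:
        # Case-sensitive whole-word match. Brand names that are also common
        # words (for example "Close") still need a manual check.
        match = re.search(rf"\b{re.escape(brand)}\b", response)
        if match:
            found.append((match.start(), brand))
    found.sort()  # order brands by where they first appear
    return [
        Mention(platform, prompt, run, brand, rank)
        for rank, (_, brand) in enumerate(found, start=1)
    ]

# Hypothetical response text, for illustration only.
response = (
    "For small teams, Pipedrive is a solid pick. "
    "HubSpot offers a free tier, and Close suits outbound sales."
)
records = log_mentions(
    "ChatGPT", "Best CRM tool", 1, response,
    brands=["HubSpot", "Pipedrive", "Zoho CRM", "Close"],
)
for record in records:
    print(record.position, record.brand)
```

This counts a brand once per response, however many times it is named. If you prefer to count every occurrence, swap `re.search` for `re.findall` and keep that choice consistent across months.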

Step 4: Calculate Per-Platform and Aggregate SOV

Count total mentions for each brand across all prompts. Divide your brand's mentions by the total mentions for all brands in your competitive set, then multiply by 100.

Example: You test 20 prompts on ChatGPT. Across all responses, there are 85 total brand mentions. Your brand appears 12 times.

Your AI SOV on ChatGPT = (12 / 85) x 100 = 14.1%
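The same arithmetic as a short sketch. Only your brand's 12 mentions and the total of 85 come from the example above; the competitor names and their individual counts are made up so that the total adds up to 85.

```python
def share_of_voice(mentions: dict[str, int]) -> dict[str, float]:
    """Return each brand's share of all logged mentions, as a percentage."""
    total = sum(mentions.values())
    if total == 0:
        return {brand: 0.0 for brand in mentions}
    return {brand: 100 * count / total for brand, count in mentions.items()}

# Hypothetical per-brand counts that sum to 85 total mentions.
chatgpt_mentions = {
    "YourBrand": 12,
    "CompetitorA": 30,
    "CompetitorB": 25,
    "CompetitorC": 18,
}
sov = share_of_voice(chatgpt_mentions)
print(round(sov["YourBrand"], 1))  # 14.1
```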

Repeat for each platform, then calculate a weighted aggregate based on platform importance to your audience.
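A weighted aggregate can be sketched as below. The platform weights and the Perplexity and Gemini figures are assumptions for illustration. In practice you would set the weights from whatever you know about where your audience asks questions.

```python
def weighted_sov(platform_sov: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-platform SOV percentages."""
    total_weight = sum(weights[p] for p in platform_sov)
    if total_weight == 0:
        raise ValueError("weights must not sum to zero")
    return sum(platform_sov[p] * weights[p] for p in platform_sov) / total_weight

# Hypothetical per-platform results and importance weights.
platform_sov = {"ChatGPT": 14.1, "Perplexity": 10.0, "Gemini": 8.0}
weights = {"ChatGPT": 0.5, "Perplexity": 0.3, "Gemini": 0.2}

aggregate = weighted_sov(platform_sov, weights)
print(f"{aggregate:.2f}%")
```

Dividing by the sum of the weights means they do not have to add up to 1, so you can use raw figures such as estimated monthly users per platform.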

Step 5: Track Over Time

Run this process monthly at minimum. AI outputs change as models are updated, competitors optimize their presence, and new content enters training data. A single measurement is a snapshot. Monthly tracking reveals trends.
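To turn monthly snapshots into a trend, compare each month with the one before it. A minimal sketch, using made-up monthly figures and a hypothetical function name:

```python
def sov_changes(history):
    """Month-over-month change in SOV, in percentage points.

    history is a list of (month, sov_percent) tuples in chronological order.
    """
    return [
        (month, round(sov - prev_sov, 2))
        for (_, prev_sov), (month, sov) in zip(history, history[1:])
    ]

# Hypothetical monthly measurements for one brand on one platform.
history = [("2025-01", 14.1), ("2025-02", 15.3), ("2025-03", 13.9)]
for month, change in sov_changes(history):
    print(month, f"{change:+.1f} pts")
```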

Platform-by-Platform Tracking

Each AI platform has distinct characteristics that affect how it surfaces brands.

ChatGPT

ChatGPT's recommendations are heavily influenced by its training data, which includes web content up to its knowledge cutoff. Brands with deep historical web presence (Wikipedia articles, years of editorial coverage, extensive review profiles) tend to dominate.

Response variation is moderate. The same prompt often returns similar brands across runs, though the order and framing can shift. ChatGPT typically mentions 3-5 brands per recommendation prompt.

Perplexity

Perplexity pulls from live web sources and cites them inline. This makes it more responsive to recent content than ChatGPT. Brands with fresh, authoritative content have an advantage here.

Perplexity also shows its sources, which means you can trace exactly why a brand was mentioned. This makes it the most transparent platform for understanding AI share of voice drivers. Perplexity's documentation confirms that source recency and authority both factor into response generation.

Gemini

Google's Gemini draws on Google's search index, which gives it a unique data advantage. Brands that perform well in traditional Google search tend to carry that advantage into Gemini responses.

Gemini's responses tend to be longer and include more brands per answer than ChatGPT. This means the total mention pool is larger, which can dilute individual brand share of voice percentages.

Google AI Overviews

AI Overviews appear directly in Google search results and pull from indexed web pages. They function differently from conversational AI, showing summarized answers with source citations rather than freeform recommendations.

Tracking AI Overview mentions requires monitoring Google search results for your target queries. Google's Search Central documentation provides guidance on how content is selected for these features.

Competitive Benchmarking Framework

Set up your competitive benchmarking in three phases.

Phase 1: Baseline (Week 1)

Run your full prompt library across all platforms. Calculate SOV for every competitor. This becomes your baseline.

Document the specific prompts where you're absent but competitors appear. These represent your biggest gaps and your clearest optimization targets.
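If your baseline results are stored as a mapping from each prompt to the set of brands mentioned, the gap list can be pulled out with a few lines. This is a sketch under that assumption; the data and the name `find_gaps` are illustrative.

```python
def find_gaps(mentions_by_prompt: dict[str, set[str]], brand: str) -> dict[str, list[str]]:
    """Return prompts where competitors appear but the given brand does not."""
    gaps = {}
    for prompt, brands in mentions_by_prompt.items():
        if brands and brand not in brands:
            gaps[prompt] = sorted(brands)
    return gaps

# Hypothetical baseline results for one platform.
baseline = {
    "Best CRM tool": {"HubSpot", "Pipedrive", "YourBrand"},
    "Best CRM for startups": {"HubSpot", "Close"},
    "Best tool for automating follow-ups": {"Pipedrive", "Freshsales"},
    "Best CRM for nonprofits": set(),  # no tracked brand was mentioned
}
for prompt, competitors in find_gaps(baseline, "YourBrand").items():
    print(f"{prompt}: {', '.join(competitors)}")
```

Prompts where no tracked brand appears are skipped, since they are not competitive gaps.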

Phase 2: Gap Analysis (Week 2)

For every prompt where competitors appear and you don't, investigate why. Check whether the competitor has stronger review presence on G2 or Capterra. Look at their Wikipedia coverage. Examine their editorial footprint.

Rank your gaps by business impact: which prompts represent the highest-value user intent? Prioritize those for optimization.

Phase 3: Ongoing Monitoring (Monthly)

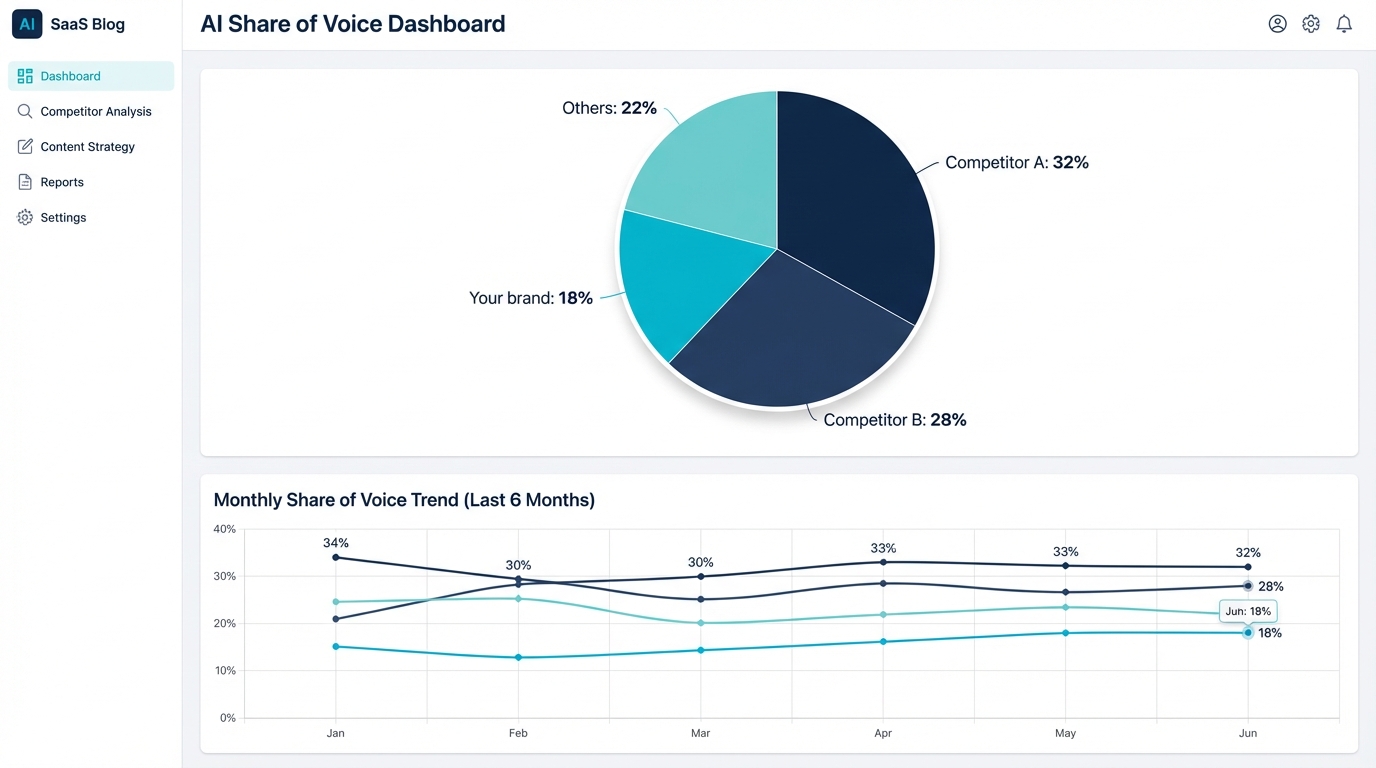

Re-run your prompt library monthly. Track SOV changes for your brand and every competitor. Flag any competitor whose share is growing quickly, as that signals they're actively optimizing for AI visibility.

Build a simple dashboard showing SOV trend lines per platform and per competitor. This becomes your early warning system for competitive shifts in the AI channel.
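The monthly check for fast-growing competitors could be sketched like this. The 3-point threshold is an arbitrary assumption you would tune for your category, and the figures are invented for illustration.

```python
def flag_fast_growers(previous: dict[str, float], current: dict[str, float],
                      threshold: float = 3.0) -> dict[str, float]:
    """Return brands whose SOV rose by at least `threshold` percentage points."""
    flagged = {}
    for brand, sov in current.items():
        # A brand missing from last month's data is treated as starting at 0.
        delta = sov - previous.get(brand, 0.0)
        if delta >= threshold:
            flagged[brand] = round(delta, 1)
    return flagged

# Hypothetical SOV percentages for two consecutive months.
previous = {"YourBrand": 14.0, "CompetitorA": 35.0, "CompetitorB": 30.0, "CompetitorC": 21.0}
current = {"YourBrand": 13.0, "CompetitorA": 33.0, "CompetitorB": 29.0, "CompetitorC": 25.0}

print(flag_fast_growers(previous, current))  # {'CompetitorC': 4.0}
```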

Tools for AI Share of Voice Tracking

Manual tracking works for an initial baseline but breaks down quickly. Running 20+ prompts across 4 platforms, three times each, every month, is tedious and error-prone.

friction AI automates the entire process. It runs your prompt library across ChatGPT, Perplexity, Gemini, and Claude on a scheduled basis and calculates your AI share of voice automatically. The competitive intelligence feature shows your SOV alongside every competitor, with trend data that reveals who's gaining and who's losing ground.

The platform also catches things manual testing misses. AI responses vary between sessions, and friction AI's continuous monitoring captures the full distribution of mentions rather than a single snapshot. You get statistical confidence in your numbers instead of anecdotal evidence from a handful of test runs.

For teams serious about AI as a discovery channel, automated tracking isn't optional. The brands winning AI share of voice right now are the ones measuring it systematically.

Frequently Asked Questions

How is AI share of voice calculated?

Divide your brand's mentions by the total brand mentions across your competitive set, then multiply by 100. For a set of 50 category queries run across ChatGPT, Claude, Gemini, and Perplexity (200 total answers), suppose those answers contain 600 brand mentions in total and 120 of them are yours: your SoV is 20%. Track this monthly using the same prompt set for apples-to-apples comparison.

What's a good AI share of voice benchmark?

Benchmarks vary by category. In saturated B2B SaaS categories, 15-25% SoV is strong. In emerging categories, a dominant player may hold 40-60%. For most established brands in competitive categories, expect 10-20% on category-level queries, with higher SoV on branded queries and lower SoV on comparison queries.

How does AI share of voice differ from traditional share of voice?

Traditional SoV measures media mentions or social volume. AI SoV measures AI recommendation frequency, which is a filtered output reflecting AI model preferences. A brand with high traditional SoV can have low AI SoV if its mentions don't meet AI quality thresholds (authoritative sources, structured content, recency). AI SoV rewards signal quality, not raw volume.

Should I measure AI share of voice per platform or combined?

Both. Per-platform SoV reveals platform-specific gaps (for example, strong on ChatGPT but weak on Perplexity). Combined SoV gives the aggregate picture. Per-platform is more actionable for targeting specific fixes. Combined is better for executive reporting. Most teams track both.

What's the difference between mention rate and share of voice?

Mention rate is the percentage of answers where your brand appears at all, including passing mentions. Share of voice measures relative presence against competitors in the same answers. A brand can have 40% mention rate but only 15% SoV if competitors co-appear in most of those mentions. SoV is the competitive metric; mention rate is the visibility metric.
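A small sketch makes the difference concrete. Each answer is represented as the set of tracked brands it mentions. The five answers are invented, and the function names are hypothetical.

```python
def mention_rate(answers: list[set[str]], brand: str) -> float:
    """Percentage of answers in which the brand appears at all."""
    if not answers:
        return 0.0
    return 100 * sum(brand in answer for answer in answers) / len(answers)

def answer_share_of_voice(answers: list[set[str]], brand: str) -> float:
    """The brand's mentions as a percentage of all brand mentions."""
    total_mentions = sum(len(answer) for answer in answers)
    if total_mentions == 0:
        return 0.0
    own_mentions = sum(brand in answer for answer in answers)
    return 100 * own_mentions / total_mentions

# Hypothetical answers: each set lists the tracked brands in one AI response.
answers = [
    {"YourBrand", "CompetitorA", "CompetitorB"},
    {"CompetitorA", "CompetitorB"},
    {"YourBrand", "CompetitorA"},
    {"CompetitorA", "CompetitorB", "CompetitorC"},
    {"CompetitorB", "CompetitorC"},
]
print(mention_rate(answers, "YourBrand"))                     # 40.0
print(round(answer_share_of_voice(answers, "YourBrand"), 1))  # 16.7
```

Here the brand appears in 2 of 5 answers (40% mention rate) but accounts for only 2 of 12 total brand mentions (about 16.7% SoV), because competitors appear alongside it and in the answers where it is absent.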

How do I improve my AI share of voice?

Three levers. First, increase citation eligibility: publish structured, data-dense content that meets AI retrieval criteria. Second, build authority on the specific platforms where SoV is lowest (Perplexity-weak brands often need SEO fundamentals; ChatGPT-weak brands often need brand recognition signals). Third, reduce competitor SoV indirectly by owning specific comparison and use-case queries competitors currently dominate.